AMD 16-Core Ryzen 9 3950X: Up to 4.7 GHz, 105W, Coming September

by Dr. Ian Cutress on June 11, 2019 6:00 PM EST

Posted in: CPUs, AMD, Ryzen, Zen 2, Ryzen 3000, Ryzen 3rd Gen, Ryzen 9, 3950X

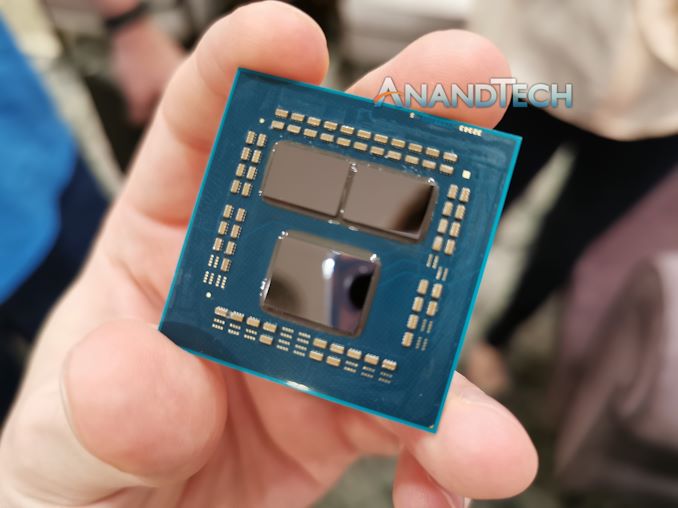

One of the questions left over from AMD’s Computex reveal of the new Ryzen 3000 family was why a 16-core version of the dual-chiplet Matisse design was not announced. Today, AMD is announcing its first 16-core CPU in the Ryzen 9 family. AMD stated that it is not interested in a back-and-forth with its competition that only slowly moves the leading edge of consumer computing forward; it wants to launch the best it has to offer as soon as possible, and the 16-core chip is part of that strategy.

The new Ryzen 9 3950X will top the stack of new Zen 2 based AMD consumer processors, and is built for the AM4 socket along with the range of X570 motherboards. It will have 16 cores with simultaneous multi-threading, enabling 32 threads, with a base frequency of 3.5 GHz and a turbo frequency of 4.7 GHz. All of this will be provided in a 105W TDP.

AMD 'Matisse' Ryzen 3000 Series CPUs

| AnandTech | Model | Cores | Threads | Base Freq (GHz) | Boost Freq (GHz) | L2 Cache | L3 Cache | PCIe 4.0 | DDR4 | TDP | Price (SEP) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Ryzen 9 | 3950X | 16C | 32T | 3.5 | 4.7 | 8 MB | 64 MB | 16+4+4 | ? | 105W | $749 |
| Ryzen 9 | 3900X | 12C | 24T | 3.8 | 4.6 | 6 MB | 64 MB | 16+4+4 | ? | 105W | $499 |
| Ryzen 7 | 3800X | 8C | 16T | 3.9 | 4.5 | 4 MB | 32 MB | 16+4+4 | ? | 105W | $399 |
| Ryzen 7 | 3700X | 8C | 16T | 3.6 | 4.4 | 4 MB | 32 MB | 16+4+4 | ? | 65W | $329 |
| Ryzen 5 | 3600X | 6C | 12T | 3.8 | 4.4 | 3 MB | 32 MB | 16+4+4 | ? | 95W | $249 |
| Ryzen 5 | 3600 | 6C | 12T | 3.6 | 4.2 | 3 MB | 32 MB | 16+4+4 | ? | 65W | $199 |
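
As a rough way to compare the stack, the prices in the table above can be divided by core and thread counts. The short Python sketch below does only that arithmetic. The figures are copied from the table; the names `CPUS`, `price_per_core`, and `summarize` are made up for this illustration, and price per core says nothing about clocks, cache, or real-world performance.

```python
# Figures copied from the table above: (model, cores, threads, price in USD).
# The names used here are illustrative only.
CPUS = [
    ("Ryzen 9 3950X", 16, 32, 749),
    ("Ryzen 9 3900X", 12, 24, 499),
    ("Ryzen 7 3800X", 8, 16, 399),
    ("Ryzen 7 3700X", 8, 16, 329),
    ("Ryzen 5 3600X", 6, 12, 249),
    ("Ryzen 5 3600", 6, 12, 199),
]


def price_per_core(price_usd, cores):
    """Return the suggested price divided by the number of cores."""
    return price_usd / cores


def summarize(cpus):
    """Return (model, USD per core, USD per thread) for each table row."""
    return [
        (model, price_per_core(price, cores), price / threads)
        for model, cores, threads, price in cpus
    ]


if __name__ == "__main__":
    for model, per_core, per_thread in summarize(CPUS):
        print(f"{model:<14} ${per_core:6.2f}/core  ${per_thread:6.2f}/thread")
```

On these numbers the 3950X works out to roughly $46.81 per core, against about $41.58 for the 12-core 3900X, so the jump to 16 cores carries a modest per-core premium at the listed prices.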

AMD has said that the processor will arrive in September 2019, about two months after the initial Ryzen 3rd Gen processors, due to extra validation requirements. The chip uses two of the Zen 2 eight-core chiplets, paired with an IO die that provides 24 total PCIe 4.0 lanes. Because the chip uses the AM4 socket, AMD recommends pairing the Ryzen 9 3950X with one of the new X570 motherboards launched at Computex.

With regard to performance, AMD is promoting it as a clear single-thread and multi-thread improvement over other 16-core products in the market, particularly those from Intel (namely the 7960X).

Several questions surround this new product: the reasons for the delay between the initial Ryzen 3000 launch and the 3950X launch, how power will be distributed between the chiplets at these frequencies and how the clocks will respond to the 105W TDP, how core-to-core communication will work across chiplets, and how gaming performance might be affected by the added latency of going through the IO die to reach main memory. All these questions are expected to be answered in due course.

Pricing is set to be announced by AMD at its event at E3 today. We’ll be updating this news post when we know the intended pricing.

Update: $749

Related Reading

- AMD Ryzen 3000 Announced: Five CPUs, 12 Cores for $499, Up to 4.6 GHz, PCIe 4.0, Coming 7/7

- AMD Ryzen 3rd Gen 'Matisse' Coming Mid 2019: Eight Core Zen 2 with PCIe 4.0 on Desktop

- AMD: 3rd Gen Ryzen Threadripper in 2019

- AMD Confirms PCIe 4.0 Not Coming to Older Motherboards (X470, X370, B350, A320)

- ASUS Pro WS X570-Ace: A No-Nonsense All-Black Motherboard with x8/x8/x8

- GIGABYTE Unveils X570 Mini-ITX Motherboard: X570 I Aorus Pro WiFi

- ASRock X570 Aqua: Heaviest AMD Flagship Motherboard Ever (Plus Thunderbolt)

- MSI Unveils the MEG X570 Ace: Black and Gold For AMD 50

172 Comments


xrror - Tuesday, June 11, 2019

kinda makes you wonder how the newer processes are optimized.

My guess is everything is geared for mobile/battery life.

Going for raw perf (GHz scaling) isn't the mass market anymore, so all we get are power optimized nodes that don't scale for crap.

The big money isn't in giving John/Julie Doe a faster craptop anymore, it's giving them a phone that they can forget in the car for 3 days while still remembering their facebook dickpics.

yeay humanity =(

mode_13h - Tuesday, June 11, 2019

I think frequency scaling has slowed due to increasing leakage, as feature size continues to shrink - not dickpics. There is plenty of demand for a high-power process - cloud, HPC, self-driving cars, and robotics, to name a few.

Oxford Guy - Tuesday, June 11, 2019

"There is plenty of demand for a high-power process"

More explanation needed. I know of high-frequency trading wanting the fastest-possible IPC coupled with the fastest-possible clock. Emulation also tends to like this. Outside of these two small cases, though, I am unfamiliar with how things like cloud computing can't use the chiplet approach. The same goes for cars. Robotics is a maybe. It seems that it would benefit the most from improved AI circuitry.

mode_13h - Tuesday, June 11, 2019

There's something ironic about bemoaning the decline of humanity with a post that sort of exemplifies it, I might add.

PixyMisa - Tuesday, June 11, 2019

I was hoping it would be cheaper, but on the other hand I do want AMD to make money so they can bring us more shiny toys in the future.

PixyMisa - Tuesday, June 11, 2019

Clicked the wrong reply button...

psychobriggsy - Tuesday, June 11, 2019

Why? Using chiplets greatly improves yield, and allows die-matching (good + good die = 3950X).

Sure, at 5nm maybe a 16C chiplet will make sense, simply because otherwise the chiplets start getting absolutely tiny, but even then there's no real need, a 5nm 8C die will still be around 50mm^2 (allowing for Zen 3 enhancements, more cache, etc).

Oxford Guy - Tuesday, June 11, 2019

The trouble with chiplets is latency. However, considering the bottlenecks elsewhere (like slotted RAM), perhaps it's not a huge problem.

Beyond chiplets, though, there is the issue of not getting even close to the reticle limit of process nodes (e.g. Radeon VII). Not only is this good for margin it's bad for performance, including performance-per-watt. Companies like AMD resort to pushing voltage/clock well beyond optimal to get more performance out of an unnecessarily small die.

scineram - Wednesday, June 12, 2019

Nonsense.

Oxford Guy - Friday, June 14, 2019

fascinating analysis there