The Impact of Disruptive Technologies on the Professional Storage Market

by Johan De Gelas on August 5, 2013 9:00 AM EST

Posted in: IT Computing, SSDs, Enterprise, Enterprise SSDs

The Winds of Change

My reason for writing this article is that a wind of change is blowing through the storage market. The success of cloud storage such as Amazon S3 and Syncplicity has opened the way to new methods of archiving, making backups, and even disaster recovery. But the biggest disruptor is of course flash memory, and more specifically PCIe SSDs.

PCIe SSDs are not bandwidth limited by SATA/SAS wiring or, if implemented well, by protocol overhead. As a result, PCIe drives have up to three times as many channels of flash memory. Well-designed PCIe SSDs also do not have to carry the burden of RAID controllers and protocols that were architected for hard drives, which have completely different characteristics than flash memory. But even if they use a PCIe/SAS bridge, PCIe SSDs offer higher reliability and vastly better performance than the best enterprise drives. And there is much more going on.

As PCIe SSDs offer large capacities (up to 10TB!) and performance in a very small form factor, they open new markets. It is interesting to see the completely new solutions that are now available, solutions that are much better suited for certain workloads. One example of a workload where traditional SANs fall short is virtual desktops.

Virtual Desktops

Virtual desktop products like XenDesktop or VMware View have been promising significant energy and cost savings, but these savings almost never materialize in reality. The energy saving claims made a few years ago were ridiculous; they were based on the assumption that we are still using power-hogging desktops. Replace those with thin clients and you magically get massive energy savings.

The reality is that most IT professionals already use a 20-30W portable instead of an old 150W desktop, and the extra server load does not help save energy either. Even if portables were not used, many business desktops today sip small amounts of energy. And if there were any miraculous energy savings, the additional complex storage system would deal the final blow. The end result of desktop virtualization is often higher instead of lower energy bills. But perhaps worse is that knowledge workers hated most virtual desktop projects with a passion. Suddenly, several actions that used to complete without any noticeable delay became laggy.

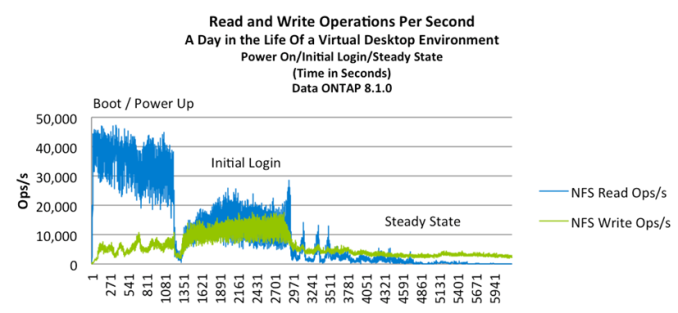

Although there were serious cost savings if your desktop deployment and management was just organized chaos, every organization that replaced PCs with virtual desktops faced the need for huge investments. As lots of people boot up their virtual desktops in the morning, massive amounts of data are written and read in a rather random way: the so-called "boot storm". The solution was to boot up the desktops in a staggered way, tens of minutes before the arrival of the users, and to perform all kinds of special optimizations all over the software stack. But that is hardly more than a band-aid: what about unexpected hot fix patches, or what if people arrive a little bit earlier on occasion?
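To make the trade-off behind staggered booting concrete, here is a rough back-of-the-envelope sketch. It assumes every desktop generates a constant I/O load for a fixed boot time and that desktops are started at a fixed interval; the function names and all numbers (500 desktops, 200 IOPS per booting desktop, 60 seconds per boot) are hypothetical placeholders, not measurements from this article.

```python
import math


def peak_boot_iops(num_desktops, iops_per_boot, boot_seconds, stagger_seconds):
    """Peak aggregate IOPS when desktop i starts booting at i * stagger_seconds.

    Simplifying assumption: each desktop issues a constant iops_per_boot
    for exactly boot_seconds, then drops to zero.
    """
    if stagger_seconds <= 0:
        # No staggering: every desktop boots at the same moment.
        concurrent = num_desktops
    else:
        # At most ceil(boot_seconds / stagger_seconds) boots overlap at once.
        concurrent = min(num_desktops, math.ceil(boot_seconds / stagger_seconds))
    return concurrent * iops_per_boot


def total_window_seconds(num_desktops, boot_seconds, stagger_seconds):
    """Time from the first boot starting until the last boot finishes."""
    return (num_desktops - 1) * max(stagger_seconds, 0) + boot_seconds


if __name__ == "__main__":
    # Hypothetical figures, chosen only to illustrate the shape of the problem.
    desktops, iops, boot = 500, 200, 60
    for stagger in (0, 5):
        peak = peak_boot_iops(desktops, iops, boot, stagger)
        window = total_window_seconds(desktops, boot, stagger)
        print(f"stagger={stagger}s peak={peak} IOPS window={window / 60:.1f} min")
```

Under these assumed numbers, starting a desktop every five seconds cuts the peak from 100,000 to 2,400 IOPS, but the boot window stretches from one minute to more than 40 minutes. That is the "tens of minutes before the arrival of the users" cost, and it offers no protection when everyone reboots at once after a patch.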

Data source: NetApp News 2013

Astute readers understand that the administration of virtual desktops is quite a bit more complex than the traditional setup with roaming profiles and saving files on a centralized file server. Only the most recent and high-end SANs could really deal with these specific requirements. Granted, some of the essential storage tasks like backup and archiving are a lot easier once you have a SAN in place… but mostly after you have invested in all kinds of expensive management software. When you start to invest in a complex SAN platform, the costs seem to multiply like rabbits.

In short, although a fast SAN seemed to be an enabler, it was also a deal breaker in the virtual desktop world. SANs are too slow and/or too expensive, and they are also power hungry.

Several companies feel they have a much better alternative, and it is very interesting to see how the Fusion-io and Intel PCIe SSDs are being turned into innovative and specialized alternatives for the typical SAN solution. Let's discuss a few of these over the next several pages.

60 Comments

jhh - Wednesday, August 7, 2013 - link

And Advanced/SDDC/Chipkill ECC, not the old-fashioned single-bit correct/multiple bit detect. The RAM on the disk controller might be small enough for this not to matter, but not on the system RAM.

tuxRoller - Monday, August 5, 2013 - link

Amplidata's dss seems like a better, more forward looking alternative.

Sertis - Monday, August 5, 2013 - link

The Amplistore design seems a bit better than ZFS. ZFS has a hash to detect bit rot within the blocks, while this stores FEC coding that can potentially recover the data within that block without calculating it based on parity from the other drives on the stripe and the I/O that involves. It also seems to be a bit smarter on how it distributes data by allowing you to cross between storage devices to provide recovery at the node level while ZFS is really just limited to the current pool. It has various out of band data rebalancing which isn't really present in ZFS. For example, add a second vdev to a zpool when it's 90% full and there really isn't a process to automatically rebalance the data across the two pools as you add more data. The original data stays on that first vdev, and new data basically sits in the second vdev. It seems very interesting, but I certainly can't afford it, I'll stick with raidz2 for my puny little server until something open source comes out with a similar feature set.

Seemone - Tuesday, August 6, 2013 - link

Are you aware that with ZFS you can specify the number of replicas each data block should have on a per-filesystem basis? ZFS is indeed not very flexible on pool layout and does not rebalance things (as of now), but there's nothing in the on-disk data structure that prevents this. This means it can be implemented and would be applicable on old pools in a non-disruptive way. ZFS is also open source; its license is simply not compatible with GPLv2, hence the separate ZFS-On-Linux distribution.

Brutalizer - Tuesday, August 6, 2013 - link

If you want to rebalance ZFS, you just copy the data back and forth and rebalancing is done. Assume you have data on some ZFS disks in a ZFS raid, and then you add new empty discs, so all data will sit on the old disks. To spread the data evenly to all disks, you need to rebalance the data. Two ways:

1) Move all data to another server. And then move back the data to your ZFS raid. Now all data are rebalanced. This requires another server, which is a pain. Instead, do like this:

2) Create a new ZFS filesystem on your raid. This filesystem is spread out on all disks. Move the data to the new ZFS filesystem. Done.

Sertis - Thursday, August 8, 2013 - link

I'm definitely looking forward to these improvements, if they eventually arrive. I'm aware of the multiple copy solution, but if you read the Intel and Amplistore whitepapers, you will see they have very good arguments that their model works better than creating additional copies by spreading out FEC blocks across nodes. I have used ZFS for years, and while you can work around the issues, it's very clear that it's no longer evolving at the same rate since Oracle took over Sun. Products like this keep things interesting.

Brutalizer - Tuesday, August 6, 2013 - link

Theory is one thing, real life another. There are many bold claims and wonderful theoretical constructs from companies, but do they hold up to scrutiny? Researchers injected artificially constructed errors in different filesystems (NTFS, Ext3, etc), and only ZFS detected all errors. Researchers have verified that ZFS seems to combat data corruption. Is there any research on Amplistore's ability to combat data corruption? Or do they only have bold claims? Until I see research from an independent third party, I will continue with the free open source ZFS. CERN is now switching to ZFS for tier-1 and tier-2 long-term storage, because vast amounts of data _will_ have data corruption, CERN says. Here are research papers on data corruption on NTFS, hardware raid, ZFS, NetApp, CERN, etc: http://en.wikipedia.org/wiki/ZFS#Data_integrity

For instance, Tegile, Coraid, GreenByte, etc. are all storage vendors that offer petabyte enterprise servers using ZFS.

JohanAnandtech - Tuesday, August 6, 2013 - link

Thanks, very helpful feedback. I will check the paper out

mikato - Thursday, August 8, 2013 - link

And Isilon OneFS? Care to review one? :)

bitpushr - Friday, August 9, 2013 - link

That's because ZFS has had a minimal impact on the professional storage market.