The Impact of Disruptive Technologies on the Professional Storage Market

by Johan De Gelas on August 5, 2013 9:00 AM EST. Posted in: IT Computing, SSDs, Enterprise, Enterprise SSDs

Introduction: Enterprise Storage 101

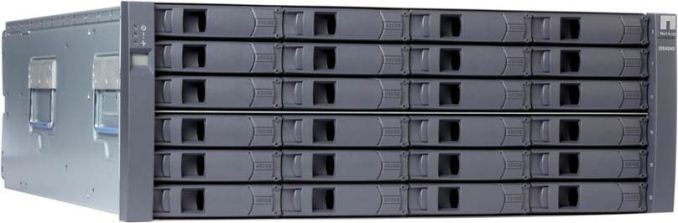

Since the introduction of x86-based servers at the end of the 20th century, the cost of server hardware has declined rapidly while performance per watt and performance per dollar have increased rapidly. This pushed the server market to evolve from closed, proprietary, and most importantly extremely expensive mainframes and RISC servers into today's highly competitive x86 server market. However, the professional storage market is still ruled by proprietary, legacy systems.

Today, you can get a very powerful server that can cope with most server workloads for something like $5000. Even better, you can run tens of workloads in parallel by virtualizing them. But go to the storage market with four or even five times that budget and you will likely return with a low-end SAN.

Worse yet, there is a good chance this expensive device will choke regularly under a storage-intensive application. We quote a market survey conducted in March 2013:

Forty-four percent of respondents said disproportionate storage-related costs were an obstacle preventing them from virtualizing more of their workloads. Forty-two percent said the same about performance degradation or the inability to meet performance expectations.

Note that the study does not mention the percentage of customers stuck in denial :-). The performance per dollar of the average SAN array is mediocre at best, and the storage capacity per dollar is simply awful.

You might think that the hardware inside a SAN is vastly superior to what can be found in your average server, but that is not the case. EMC (the market leader) and others have disclosed more than once that “the goal has always been to use as much standard, commercial, off-the-shelf hardware as we can”. So your SAN array is probably nothing more than a typical Xeon server built by Quanta with a shiny bezel. A decent professional 1TB drive costs a few hundred dollars. Place that same drive inside a SAN appliance and suddenly the price per terabyte is multiplied by at least three, sometimes even ten! When it comes to pricing and vendor lock-in, you can say that storage systems are still stuck in the “mainframe era” despite the use of cheap off-the-shelf hardware.

So why do EMC, NetApp, and the other giants in the storage market charge so much for what is essentially a Xeon based server, an admittedly well designed and reliable storage backplane, and some unreliable and slow performing hard drives? The reason is not some complex market situation that can only be explained by financial experts. No, the key is simply the basic component of a storage system: the unreliable and slow magnetic disk.

As we all know, the magnetic disk is the component that fails most in the data center, and it is by far the slowest core component in modern computers. Building a reliable and somewhat performant storage system based upon such a mediocre storage component can only be done with complex software. And complex software is yet another prime reason why IT services fail. So only a few companies that were able to build a solid reputation gained enough trust to succeed in the storage market.

This is why EMC, NetApp, IBM, and HP rule the storage market today even though they are charging an arm and a leg for a few terabytes of capacity. Professional buyers trust the devices these vendors make and are willing to pay a huge premium just to be sure that they get good reliability and decent but hardly compelling performance and capacity.

60 Comments

Brutalizer - Sunday, August 11, 2013 - link

bitpushr, "That's because ZFS has had a minimal impact on the professional storage market."

That is ignorant. If you had followed the professional storage market, you would have known that ZFS is the most widely deployed storage system in the Enterprise. ZFS systems manage 3-5x more data than NetApp, and more data than NetApp and EMC Isilon combined. ZFS is the future and is eating others' cake:

http://blog.nexenta.com/blog/bid/257212/Evan-s-pre...

blak0137 - Monday, August 5, 2013 - link

The Amplidata Bitspread data protection scheme sounds a lot like the OneFS filesystem on Isilon.

A note on the NetApp section: the NVRAM does not store the hottest blocks. Rather, it is only used for correlating writes so that entire RAID-group-wide stripes can be destaged onto disk at once. This use of NVRAM in NetApp, along with the write characteristics of the WAFL filesystem, allows RAID-DP (NetApp's slightly customized version of RAID-6) to have write performance similar to RAID-10, with a much smaller usable space penalty, up to approximately 85-90% space utilization.

Read cache is always held in RAM on the controller, and the FlashCache (formerly PAM) cards supplement that RAM-based cache. A thing to remember about the size of the FlashCache cards is that the space still benefits from the data efficiency features of Data ONTAP, such as deduplication and compression, so applications such as VDI get a massive boost in performance.
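
To put the usable-space comparison above in rough numbers, here is a minimal sketch. It assumes a hypothetical 16-disk group and treats RAID-DP as plain double parity (two parity disks per group). Real NetApp sizing also accounts for spares and filesystem overhead, which this deliberately ignores.

```python
def usable_fraction_mirror() -> float:
    """RAID-10 keeps two copies of every block, so half the raw space is usable."""
    return 0.5


def usable_fraction_double_parity(disks_in_group: int) -> float:
    """Double parity (RAID-6 style) gives up two disks per group to parity."""
    if disks_in_group < 3:
        raise ValueError("double parity needs at least 3 disks")
    return (disks_in_group - 2) / disks_in_group


# Hypothetical group size, chosen for illustration only.
GROUP_SIZE = 16

raid10 = usable_fraction_mirror()
raid_dp = usable_fraction_double_parity(GROUP_SIZE)

print(f"RAID-10 usable fraction:       {raid10:.1%}")
print(f"Double parity usable fraction: {raid_dp:.1%} ({GROUP_SIZE} disks)")
```

The gap narrows as groups get smaller, which is why the group size matters more than the RAID label when comparing the space penalty.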

enealDC - Monday, August 5, 2013 - link

I think you also need to discuss the effect of OSS or very low cost solutions that can be built on white box hardware. Those cause far greater disruptions than anything I can think of! SCST and COMSTAR, to name a few.

Ammohunt - Monday, August 5, 2013 - link

One thing I didn't see mentioned is that in the good old days you spread the I/O out across many spindles, which was a huge advantage of SCSI, which was geared towards such a configuration. As drive sizes have increased, the number of spindles has been reduced, adding more latency. The fact is that expensive SSD-type storage systems are not needed in most medium-sized businesses. Their data needs can in most cases be served spectacularly by a well-architected tiered storage model.

mryom - Monday, August 5, 2013 - link

There's something missing: take a look at PernixData. That's disruptive, and vSphere 5.5 is also going to be a game changer. Software Defined Storage is the way forward. We just need space for more disks in blade servers.

davegraham - Tuesday, August 6, 2013 - link

SDS is an EMC-marchitecture discussion (a la ViPR). I'd suggest that you avoid conflating what a marketing talking head discusses with what technology can actually do. :)

Kevin G - Monday, August 5, 2013 - link

My understanding is that the value of enterprise storage isn't necessarily the hardware but rather the software interface and support that comes with it. NetApp, for example, will dial home and order replacements for failed hard drives for you. Various interfaces I've used allow for the logical creation of multiple arrays across multiple controllers, each using a different RAID type. I can think of no sane reason why someone would want to do that, but the option is there and supported for the crazies.

As far as performance goes, NVMe and SATA Express are clearly the future. I'm surprised that we haven't seen any servers with hot swap mini-PCIe slots. With two lanes going to each slot, a single socket Sandy Bridge-E chip could support twenty of those small form factor cards in the front of a 1U server. At 500 GB apiece, that is 10 TB of preformatted storage, not far off the 16 TB preformatted possible today using hard drives. Cost of course will be higher than disk, but speeds are ludicrous.

Going with standard PCIe form factors for storage only makes sense if there are tons of channels connected to the controller and the controllers are PCIe native. So far the majority of offerings stick a hardware RAID chip with several SATA SSD controllers onto a PCIe card and call it a day.

Also, for the enterprise market, it would be nice for a PCIe SSD to have an out-of-band management port that communicates via Ethernet and can fully function if the switch on the other end supports Power over Ethernet. The entire host could be fried but data could still potentially be recovered. It would also work great for hardware configuration, like on some Areca cards.
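
The slot arithmetic in the comment above can be checked with a short sketch. The 40-lane figure is an assumption inferred from the comment's "twenty slots at two lanes each"; the sketch also assumes every lane goes to storage, which a real server would not do since network and other devices need lanes too.

```python
# All figures come from the comment above; nothing here is measured.
CPU_PCIE_LANES = 40      # assumed lane count for a single-socket Sandy Bridge-E part
LANES_PER_SLOT = 2       # lanes routed to each hot-swap slot
CARD_CAPACITY_GB = 500   # capacity per small form factor card


def slot_budget(total_lanes: int, lanes_per_slot: int) -> int:
    """Number of slots that can be fed if every lane is used for storage."""
    return total_lanes // lanes_per_slot


def raw_capacity_tb(slots: int, card_capacity_gb: int) -> float:
    """Raw (pre-format) capacity in decimal terabytes."""
    return slots * card_capacity_gb / 1000


slots = slot_budget(CPU_PCIE_LANES, LANES_PER_SLOT)
capacity_tb = raw_capacity_tb(slots, CARD_CAPACITY_GB)

print(f"{slots} slots, {capacity_tb:.0f} TB raw")
```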

youshotwhointhewhatnow - Monday, August 5, 2013 - link

The first link on "Cloudfounders: No More RAID" appears to be broken (http://www.amplidata.com/pdf/The-RAID Catastrophe.pdf).

I read through the second link on that page (the Intel paper). I wouldn't consider that paper unbiased, considering Intel is clearly trying to use it to sell more Xeon chips. Regardless, I don't think your statement "mathematically proven that the Reed-Solomon based erasure codes of RAID 6 are a dead end road for large storage systems" is justified. Sure, RAID6 will eventually give way to RAID7 (or RAIDZ3 in ZFS terms), but this still uses Reed-Solomon codes. The Intel paper just shows that RAID6+1 has much worse efficiency with slightly worse durability compared to Bitspread. The same could be said for RAID7 (instead of Bitspread), which really should have been part of the comparison.

Another strange statement in the Intel paper is "Traditional erasure coding schemes implemented by competitive storage solutions have limited device-level BER protection (e.g., 4 four bit errors per device)". Umm, with non-degraded RAID6 you could have as many UREs as you like, provided fewer than three occur on the same stripe (or fewer than two for an array degraded by one failed drive). Again, RAID7 allows even more UREs in the same stripe.

This is not to say that the Bitspread technique isn't interesting, but you seem to be a little too quick to drink the kool-aid.
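
The per-stripe tolerance argument in the comment above can be sketched as a simple erasure count. This is an illustration only: the function name is hypothetical, it assumes each URE hits a different chunk on a surviving drive, and it models RAID6 as two parities and "RAID7" as three.

```python
def stripe_recoverable(ures_in_stripe: int, parity_count: int,
                       failed_drives: int = 0) -> bool:
    """Return True if a single stripe can still be reconstructed.

    Every chunk lost in the stripe, whether to a whole-drive failure or
    to an unrecoverable read error (URE), counts as one erasure. A code
    with `parity_count` parity chunks tolerates that many erasures.
    """
    if ures_in_stripe < 0 or parity_count < 0 or failed_drives < 0:
        raise ValueError("counts must be non-negative")
    return ures_in_stripe + failed_drives <= parity_count


# RAID6 (two parities), healthy array: two UREs in a stripe are fine, three are not.
print(stripe_recoverable(2, parity_count=2))                    # True
print(stripe_recoverable(3, parity_count=2))                    # False

# RAID6 with one failed drive: only one URE per stripe is tolerated.
print(stripe_recoverable(1, parity_count=2, failed_drives=1))   # True
print(stripe_recoverable(2, parity_count=2, failed_drives=1))   # False

# Triple parity tolerates three UREs in the same stripe.
print(stripe_recoverable(3, parity_count=3))                    # True
```

Note that the limit applies per stripe, so the total number of UREs across a device is not bounded by the parity count as long as they land on different stripes.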

name99 - Tuesday, August 6, 2013 - link

I imagine the reason people are quick to drink the koolaid is that convolutional FEC codes have proved how well they work through much wireless experience. Loss of some Amplidata data is no different from puncturing, and puncturing just works --- we experience it every time we use WiFi or cell data.

I also wouldn't read too much into Intel's support here. Obviously running a Viterbi algorithm to cope with a punctured convolutional code is more work than traditional parity-type recovery --- a LOT more work. And obviously, the first round of software you write to prove to yourself that this all works, you're going to write for a standard CPU. Intel is the obvious choice, and they're going to make a big deal about how they were the obvious choice.

BUT the obvious next step is to go to Qualcomm or Broadcom and ask them to sell you a Viterbi cell, which you put on a SOC along with an ARM front-end, and hey presto --- you have a $20 chip you can stick in your box that's doing all the hard work of that $1500 Xeon.

The point is, convolutional FEC is operating on a totally different dimension from block parity --- it is just so much more sophisticated, flexible, and powerful. The obvious thing that is being trumpeted here is destruction of one or more blocks in the storage device, but that's not the end of the story. FEC can also handle point bit errors. Recall that a traditional drive (HD or SSD) has its own FEC protecting each block, but if enough point errors occur in the block, that FEC is overwhelmed and the device reports a read error. NOW there is an alternative --- the device can report the raw bad data up to a higher level which can combine it with data from other devices to run the second layer of FEC --- something like a form of Chase combining.

Convolutional codes are a good start for this, of course, but the state of the art in WiFi and telco is LDPCs, and so the actual logical next step is to create the next device based not on a dedicated convolutional SOC but on a dedicated LDPC SOC. Depending on how big a company grows, and how much clout they eventually have with SSD or HD vendors, there's scope for a whole lot more here --- things like using weaker FEC at the device level precisely because you have a higher level of FEC distributed over multiple devices --- and this may allow you a 10% or more boost in capacity.

meorah - Monday, August 5, 2013 - link

you forgot another implication of scale-out software design. namely, the ability to bypass flash completely and store your most performance intensive workloads that use your most expensive software licensing directly in-memory. 16 gigs to run the host, the other 368 gigs as a nice RAM drive.