The Impact of Disruptive Technologies on the Professional Storage Market

by Johan De Gelas on August 5, 2013 9:00 AM EST

Posted in: IT Computing, SSDs, Enterprise, Enterprise SSDs

Nutanix: No More SAN

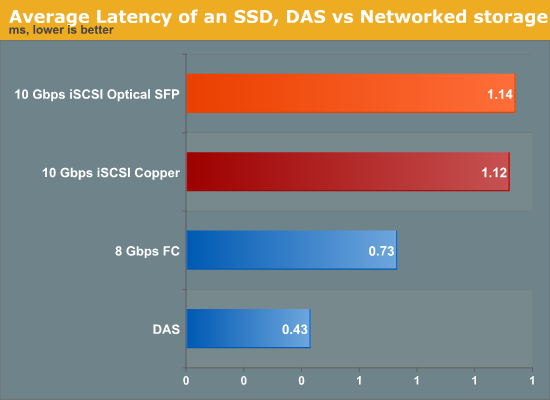

It is not a secret that even though a SAN comes with all the virtues of centralized data, network storage comes with a network bandwidth and latency penalty. By simply attaching a flash array directly to a system (DAS), we can measure the extra latency compared to SAN (Storage Area Network). It amounts to between 0.3 and 0.8 ms depending on whether you use Fibre Channel or iSCSI over copper wires.

So the minimal latency was 50% to 100% higher in a lightly loaded SAN than when the same SSD was running inside the server. However, this was the minimal latency. This can quickly grow to several milliseconds when the network load goes up.
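To see how a fixed network penalty turns into a percentage, here is a minimal Python sketch. The local (DAS) latencies of 0.6 ms and 0.8 ms are assumptions chosen for illustration, since only the added latency and the relative increase are given above; `san_overhead_percent` is a hypothetical helper name.

```python
def san_overhead_percent(local_latency_ms: float, extra_latency_ms: float) -> float:
    """Relative latency increase of a SAN over local (DAS) storage, in percent."""
    if local_latency_ms <= 0:
        raise ValueError("local latency must be positive")
    return 100.0 * extra_latency_ms / local_latency_ms


# (assumed local latency, added SAN latency) in milliseconds.
# The added latencies are the 0.3 ms and 0.8 ms bounds from the text;
# the local latencies are hypothetical.
scenarios = [(0.6, 0.3), (0.8, 0.8)]

for local_ms, extra_ms in scenarios:
    overhead = san_overhead_percent(local_ms, extra_ms)
    print(f"local {local_ms} ms + SAN {extra_ms} ms -> {overhead:.0f}% higher")
```

With these assumed baselines, the two cases come out at roughly 50% and 100%. The same fixed penalty weighs more heavily as the local device gets faster.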

Nutanix believes that virtualized servers should use local storage, clustered together in a virtual storage pool. Each of the virtual machines connects to a storage VM, typically an iSCSI target inside a VM, also called a VSA or Virtual Storage Appliance. The VSAs on the server nodes are clustered together by the Nutanix Distributed File System (NDFS). NDFS makes sure that if one node dies, the other nodes can still access the files they need to run.
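The idea that a cluster survives a dead node can be illustrated with a toy Python sketch. This is not how NDFS works internally, which is not described here. It only assumes that every block is kept on two different nodes; the function names, node names, and block names are hypothetical.

```python
def place_replicas(blocks, nodes, copies=2):
    """Assign each block to `copies` distinct nodes, round-robin."""
    if copies > len(nodes):
        raise ValueError("not enough nodes for the requested number of copies")
    placement = {}
    for i, block in enumerate(blocks):
        placement[block] = [nodes[(i + k) % len(nodes)] for k in range(copies)]
    return placement


def readable_after_failure(placement, failed_node):
    """True if every block still has a replica on a surviving node."""
    return all(
        any(node != failed_node for node in replicas)
        for replicas in placement.values()
    )


nodes = ["node1", "node2", "node3", "node4"]
blocks = ["vm-a", "vm-b", "vm-c", "vm-d", "vm-e"]
placement = place_replicas(blocks, nodes, copies=2)

for node in nodes:
    print(node, "fails -> all blocks readable:", readable_after_failure(placement, node))
```

With two copies per block, any single node can fail without making data unreachable. With a single copy, losing the wrong node loses the block.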

The VSA also leverages the latest flash technology. The most accessed data sits on Fusion-IO or Intel S3700 SSDs, depending on the Nutanix node model. The “colder” (not frequently accessed) data is automatically moved to the terabyte-class SATA disks. It's basically another level of caching, only with larger data caches than we see in the desktop world.
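A toy Python sketch of hot/cold tiering follows. It is an illustration only and not Nutanix's actual placement algorithm: it assumes blocks are ranked purely by read count and that the flash tier holds a fixed number of blocks. The class and tier names are hypothetical.

```python
from collections import Counter


class TieredStore:
    """Toy two-tier placement: the most-read blocks live on flash."""

    def __init__(self, ssd_capacity_blocks: int):
        self.ssd_capacity_blocks = ssd_capacity_blocks
        self.access_counts = Counter()

    def record_read(self, block_id: str) -> None:
        self.access_counts[block_id] += 1

    def rebalance(self) -> dict:
        """Return a block -> tier mapping based on the read counts so far."""
        # Sort by descending read count, breaking ties by block name.
        ranked = sorted(
            self.access_counts,
            key=lambda b: (-self.access_counts[b], b),
        )
        hot = set(ranked[: self.ssd_capacity_blocks])
        return {b: ("ssd" if b in hot else "hdd") for b in ranked}


store = TieredStore(ssd_capacity_blocks=2)
for block in ["a", "b", "a", "c", "a", "b", "d"]:
    store.record_read(block)

placement = store.rebalance()
print(placement)
```

Here blocks `a` and `b` are read most often and end up on the SSD tier, while `c` and `d` fall back to the hard disks. A real system would also age the counters so that data cools down over time.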

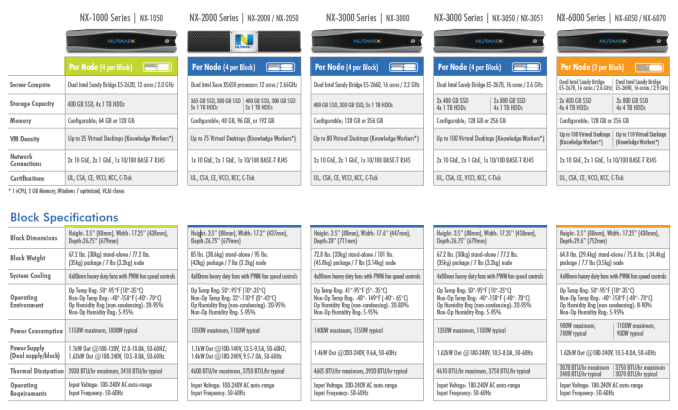

Using what seems to be a Supermicro Twin or Twin² chassis, even an entry-level four-node Nutanix NX-3050 should support up to 400 virtual desktops with a power consumption of about 1.1 kW. Compare this with a typical SAN, which needs about 700W for a single midrange array, and you probably need several expansion modules before you can even think about supporting 400 virtual desktops.
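The power comparison is easier to judge per desktop. The short Python sketch below uses only the figures quoted above (1.1 kW and 700W for 400 desktops). It assumes the vendor's 400-desktop claim holds and that the SAN figure covers a single array with no servers or expansion modules; `watts_per_desktop` is a hypothetical helper.

```python
def watts_per_desktop(total_watts: float, desktops: int) -> float:
    """Average power draw attributed to each virtual desktop."""
    if desktops <= 0:
        raise ValueError("desktop count must be positive")
    return total_watts / desktops


DESKTOPS = 400

# Four-node cluster: compute and storage together.
nutanix_w = watts_per_desktop(1100, DESKTOPS)

# One midrange SAN array only: no servers, no expansion modules.
san_array_w = watts_per_desktop(700, DESKTOPS)

print(f"Nutanix cluster: {nutanix_w:.2f} W per desktop")
print(f"SAN array alone: {san_array_w:.2f} W per desktop")
```

Under these figures the cluster works out to 2.75 W per desktop for compute and storage together, while the SAN array alone already accounts for 1.75 W per desktop before any servers are counted.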

Unfortunately, we cannot verify the claims of Nutanix right now, but our experience tells us that from a power and performance point of view it will be very hard for the typical "server plus SAN infrastructure" to beat the much simpler “integrate everything inside a dense server” platform. The only disadvantage is that the number of DIMM slots inside such server nodes is limited. That is why even the largest Nutanix hosts do not support more than 256GB per node, which might be a limitation in some virtualization environments.

Starting at $22,000 per node, the Nutanix nodes are hardly cheap, but since you don’t need a SAN the total investment is a lot lower than the traditional approach, especially for virtual desktops. Nutanix seems to have convinced quite a few people: it claims to be the fastest growing IT infrastructure startup ever, with an $80 million annual run rate. Now it just needs to prove it has the reliability and support infrastructure to win over additional customers.
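As a rough back-of-the-envelope check, the Python sketch below turns the starting price into a hardware cost per virtual desktop. It assumes that the $22,000 starting price applies to each node of a four-node cluster and that such a cluster really hosts 400 desktops. Neither assumption is confirmed, and licensing and support costs are left out.

```python
NODE_PRICE_USD = 22_000   # starting price per node
NODES = 4                 # assumed entry-level four-node cluster
DESKTOPS = 400            # vendor-claimed virtual desktop count

cluster_price = NODE_PRICE_USD * NODES
price_per_desktop = cluster_price / DESKTOPS

print(f"Cluster hardware: ${cluster_price:,}")
print(f"Per virtual desktop: ${price_per_desktop:,.0f}")
```

Under these assumptions the hardware comes to $88,000, or $220 per virtual desktop.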


60 Comments


WeaselITB - Tuesday, August 6, 2013 - link

Fascinating perspective piece. I look forward to the CloudFounders review -- that stuff seems pretty interesting.

Thanks,

-Weasel

shodanshok - Tuesday, August 6, 2013 - link

Very interesting article. It basically matches my personal opinion on the SAN market: it is an overpriced one, with much less performance per $$$ than DAS.

Anyway, with the advent of thin pools / thin volumes in RHEL 6.4 and dmcache in RHEL 7.0, a cheap commodity Linux distribution (CentOS costs 0, by the way) basically matches the feature set exposed by most low/mid-end SANs. This means that a cheap server with 12-24 2.5'' bays can be converted to SAN-like work, with very good results also.

From this point of view, the recent S3500 / Crucial M500 disks are very interesting: the first provides enterprise-certified, high performance, yet (relatively) low cost storage, and the second, while not explicitly targeted at the enterprise market, is available at an outstanding capacity/cost ratio (the 1TB version is about 650 euros). Moreover it also has a capacitor array to prevent data loss in the case of power failure.

Bottom line: for high performance, low cost storage, use a Linux server with loads of SATA SSDs. The only drawback is that you _have_ to know the VGS/LVS CLI, because good GUIs tend to be commercial products and, anyway, for data recovery the CLI remains your best friend.

A note on the RAID level: while most sysadmins continue to use RAID5/6, I think it is really wrong in most cases. The R/M/W penalty is simply too much on mechanical disks. I've done some tests here: http://www.ilsistemista.net/index.php/linux-a-unix...

Maybe on SSDs the results are better for RAID5, but the low-performance degraded state (and very slow/dangerous reconstruction process) remain.

Kyrra1234 - Wednesday, August 7, 2013 - link

The enterprise storage market is about the value-add you get from buying from the big name companies (EMC, Netapp, HP, etc...). All of those will come with support contracts for replacement gear and to help you fix any problems you may run into with the storage system. I'd say the key reasons to buy from some of these big players:

* Let someone else worry about maintaining the systems (this is helpful for large datacenter operations where the customer has petabytes of data).

* The data reporting tools you get from these companies will out-shine any home grown solution.

* When something goes wrong, these systems will have extensive logs about what happened, and those companies will fly out engineers to rescue your data.

* Hardware/Firmware testing and verification. The testing that is behind these solutions is pretty staggering.

For smaller operations, rolling out an enterprise SAN is probably overkill. But if your data and uptime are important to you, enterprise storage will be less of a headache when compared to JBOD setups.

Adul - Wednesday, August 7, 2013 - link

We looked at Fusion-IO ioDrive and decided not to go that route as the workloads presented by the virtualized desktops we offer would have killed those units in a heartbeat. We opted instead for a product by greenbytes for our VDI offering.

Adul - Wednesday, August 7, 2013 - link

See if you can get one of these devices for review :)

http://getgreenbytes.com/solutions/vio/

we have hundreds of VDI instances running on this.

Brutalizer - Sunday, August 11, 2013 - link

These Greenbyte servers are running ZFS and Solaris (illumos)

http://www.virtualizationpractice.com/greenbytes-a...

Brutalizer - Sunday, August 11, 2013 - link

GreenByte:

http://www.theregister.co.uk/2012/10/12/greenbytes...

Also, Tegile is using ZFS and Solaris:

http://www.theregister.co.uk/2012/06/01/tegile_zeb...

Who said ZFS is not the future?

woogitboogity - Sunday, August 11, 2013 - link

If there is one thing I absolutely adore about real capitalism it is these moments where the establishment goes down in flames. Just the thought of their jaws dropping and stammering "but that's not fair!" when they themselves were making a mockery of fair prices with absurd profit margins... priceless. Working with computers gives you so very many of these wonderful moments of truth...

On the software end it is almost as much fun as watching plutocrats and dictators alike try to "contain" or "limit" TCP/IP's ability to spread information.

wumpus - Wednesday, August 14, 2013 - link

There also seems to be a disconnect in what Reed-Solomon can do and what they are concerned about (while RAID 6 uses Reed-Solomon, it is a specific application and not a general limitation).

It is almost impossible to scale rotating discs (presumably magnetic, but don't ignore optical forever) to the point where Reed-Solomon becomes an issue. The basic algorithm scales (easily) to 256 disks (or whatever you are striping across) of which typically you want about 16 (or fewer) parity disks. Any panic over "some byte of data was mangled while a drive died" just means you need to use more parity disks. Somehow using up all 256 is silly (for rotating media) as few applications access data in groups of 256 sectors at a time (currently 1MB, possibly more by the time somebody might consider it).

All this goes out the window if you are using flash (and can otherwise deal with the large page clear requirement issue), but I doubt that many are up to such large sizes yet. If extreme multilevel optical disks ever take over, things might get more interesting on this front (I will still expect Reed Solomon to do well, but eventually things might reach the tipping point).

equals42 - Saturday, August 17, 2013 - link

The author misunderstands how NetApp uses NVRAM. NVRAM is not a cache for the hottest data. Writes are always to DRAM memory. The writes are committed to NVRAM (which is mirrored to another controller) before being acknowledged to the host but the write IO and its commitment to disk or SSD via WAFL sequential CP writes is all from DRAM. While any data remains in DRAM, it can be considered cached but the contents of NVRAM do not constitute nor is it used for caching for host reads.

NVRAM is only to make sure that no writes are ever lost due to a controller loss. This is important to recognize since most mid-range systems (and all the low-end ones I've investigated) do NOT protect from write losses in event of failure. Data loss like this can lead to corruption in block-based scenarios and database corruption in nearly any scenario.