They're (Almost) All Dirty: The State of Cheating in Android Benchmarks

by Anand Lal Shimpi & Brian Klug on October 2, 2013 12:30 PM EST

Posted in: Smartphones, Samsung, Mobile, galaxy note 3

Thanks to AndreiF7's excellent work on discovering it, we kicked off our investigations into Samsung’s CPU/GPU optimizations around the international Galaxy S 4 in July and came away with a couple of conclusions:

1) On the Exynos 5410, Samsung was detecting the presence of certain benchmarks and raising thermal limits (and thus max GPU frequency) in order to gain an edge on those benchmarks, and

2) On both Snapdragon 600 and Exynos 5410 SGS4 platforms, Samsung was detecting the presence of certain benchmarks and automatically driving CPU voltage/frequency to their highest state right away. Also on Snapdragon platforms, all cores are plugged in immediately upon benchmark detect.

The first point applied exclusively to the Exynos 5410 equipped version of the Galaxy S 4. We did a lot of digging to confirm that max GPU frequency (450MHz) was never exceeded on the Snapdragon 600 version. The second point however applied to many, many more platforms.

The table below is a subset of devices we've tested, the silicon inside, and whether or not they do a benchmark detect and respond with a max CPU frequency (and all cores plugged in) right away:

I Can't Believe I Have to Make This Table (Cheats In: Y/N per benchmark)

| Device | SoC | 3DM | AnTuTu | AndEBench | Basemark X | Geekbench 3 | GFXB 2.7 | Vellamo |
|---|---|---|---|---|---|---|---|---|
| ASUS Padfone Infinity | Qualcomm Snapdragon 800 | N | Y | N | N | N | N | Y |
| HTC One | Qualcomm Snapdragon 600 | Y | Y | N | N | N | Y | Y |
| HTC One mini | Qualcomm Snapdragon 400 | Y | Y | N | N | N | Y | Y |
| LG G2 | Qualcomm Snapdragon 800 | N | Y | N | N | N | N | Y |
| Moto RAZR i | Intel Atom Z2460 | N | N | N | N | N | N | N |
| Moto X | Qualcomm Snapdragon S4 Pro | N | N | N | N | N | N | N |
| Nexus 4 | Qualcomm APQ8064 | N | N | N | N | N | N | N |
| Nexus 7 | Qualcomm Snapdragon 600 | N | N | N | N | N | N | N |
| Samsung Galaxy S 4 | Qualcomm Snapdragon 600 | N | Y | Y | N | N | N | Y |
| Samsung Galaxy Note 3 | Qualcomm Snapdragon 800 | Y | Y | Y | Y | Y | N | Y |
| Samsung Galaxy Tab 3 10.1 | Intel Atom Z2560 | N | Y | Y | N | N | N | N |
| Samsung Galaxy Note 10.1 (2014 Edition) | Samsung Exynos 5420 | Y(1.4) | Y(1.4) | Y(1.4) | Y(1.4) | Y(1.4) | N | Y(1.9) |
| NVIDIA Shield | Tegra 4 | N | N | N | N | N | N | N |

We started piecing this data together back in July, and even had conversations with both silicon vendors and OEMs about getting it to stop. With the exception of Apple and Motorola, literally every single OEM we’ve worked with ships (or has shipped) at least one device that runs this silly CPU optimization. It's possible that older Motorola devices might've done the same thing, but none of the newer devices we have on hand exhibited the behavior. It’s a systemic problem that seems to have surfaced over the last two years, and one that extends far beyond Samsung.

Looking at the table above you’ll also notice weird inconsistencies about the devices/OEMs that choose to implement the cheat/hack/festivities. None of the Nexus devices do, which is understandable since the optimization isn't a part of AOSP. This also helps explain why the Nexus 4 performed so slowly when we reviewed it - this mess was going on back then and Google didn't partake. The GPe versions aren't clean either, which makes sense given that they run the OEM's software with stock Android on top.

LG’s G2 also includes some optimizations, just for a different set of benchmarks. It's interesting that LG's optimization list isn't as extensive as Samsung's - time to invest in more optimization engineers? LG originally indicated to us that its software needed some performance tuning, which helps explain away some of the G2 vs Note 3 performance gap we saw in our review.

The Exynos 5420's behavior is interesting here. Instead of switching over to the A15 cluster exclusively it seems to alternate between running max clocks on the A7 cluster and the A15 cluster.

Note that I’d also be careful about those living in glass houses throwing stones here. Even the CloverTrail+ based Galaxy Tab 3 10.1 does it. I know internally Intel is quite opposed to the practice (as I’m assuming Qualcomm is as well), making this an OEM level decision and not something advocated by the chip makers (although none of them publicly chastise partners for engaging in the activity, more on this in a moment).

The other funny thing is the list of optimized benchmarks changes over time. On the Galaxy S 4 (including the latest updates to the AT&T model), 3DMark and Geekbench 3 aren’t targets while on the Galaxy Note 3 both apps are. Due to the nature of the optimization, the benchmark whitelist has to be maintained (now you need your network operator to deliver updates quickly both for features and benchmark optimizations!).
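To make the mechanism concrete, here is a minimal Python sketch of what a package-name app detect amounts to. It is an illustration only, not any OEM's actual code: the package names, the `BENCHMARK_WHITELIST` set and the `governor_mode` function are all hypothetical.

```python
# Hypothetical whitelist of benchmark package names (made up for illustration).
BENCHMARK_WHITELIST = {
    "com.example.benchmark.cpu",
    "com.example.benchmark.gfx",
}


def governor_mode(package_name: str) -> str:
    """Return 'boost' for whitelisted apps and 'default' for everything else."""
    return "boost" if package_name in BENCHMARK_WHITELIST else "default"


# A whitelisted benchmark gets the boosted behavior...
print(governor_mode("com.example.benchmark.cpu"))
# ...while the same code shipped under a different package name does not.
print(governor_mode("com.example.benchmark.cpu.renamed"))
```

Because the match is on an exact name, the list has to be updated every time a benchmark ships under a new name, and a renamed build of the same benchmark falls through to the default behavior.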

There’s minimal overlap between the whitelisted CPU tests and what we actually run at AnandTech. The only culprits on the CPU side are AndEBench and Vellamo. AndEBench is an implementation of CoreMark, something we added in more as a way of looking at native vs. java core performance and not indicative of overall device performance. I’m unhappy that AndEBench is now a target for optimization, but it’s also not unexpected. So how much of a performance uplift does Samsung gain from this optimization? Luckily we've been testing AndEBench V2 for a while, which features a superset of the benchmarks used in AndEBench V1 and is of course built using a different package name. We ran the same native, multithreaded AndEBench V1 test using both apps on the Galaxy Note 3. The difference in scores is below:

Galaxy Note 3 Performance in AndEBench

| Device | AndEBench V1 | AndEBench V2 running V1 Workload | Perf Increase |
|---|---|---|---|
| Galaxy Note 3 | 16802 | 16093 | +4.4% |

There's a 4.4% increase in performance from the CPU optimization. Some of that gap is actually due to differences in compiler optimizations (V1 is tuned by the OEMs for performance, V2 is tuned for compatibility as it's still in beta). As expected, we're not talking about any tremendous gains here (at least as far as our test suite is concerned) because Samsung isn't actually offering a higher CPU frequency to the benchmark. All that's happening here is the equivalent of a higher P-state vs. letting the benchmark ramp to that voltage/frequency pair on its own. We've already started work on making sure that all future versions of benchmarks we get will come with unique package names.
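For anyone who wants to check the arithmetic, the percentage is just the ratio of the two scores from the table. The helper name `perf_increase` is mine, not part of any benchmark tool.

```python
def perf_increase(optimized: float, baseline: float) -> float:
    """Percentage by which `optimized` exceeds `baseline`."""
    return (optimized / baseline - 1.0) * 100.0


v1_score = 16802  # AndEBench V1 (whitelisted package name)
v2_score = 16093  # AndEBench V2 running the V1 workload (not whitelisted)

print(f"Perf increase: {perf_increase(v1_score, v2_score):.1f}%")
```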

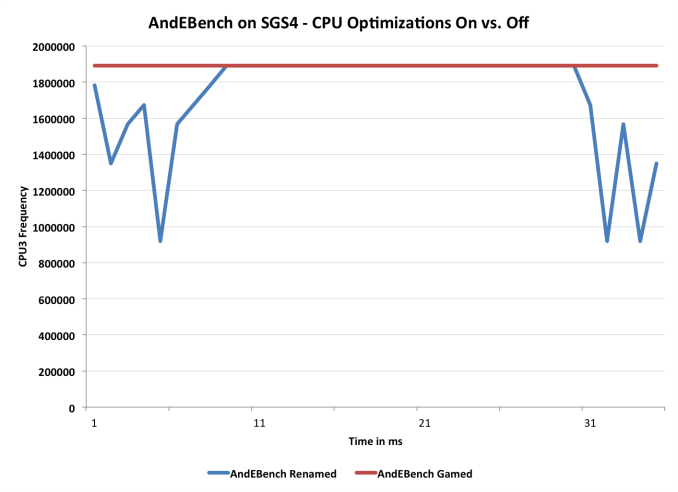

I graphed the frequency curve of a Snapdragon 600 based Galaxy S 4 while running both versions of AndEBench to illustrate what's going on here:

What we're looking at is a single loop of the core AndEBench MP test. The blue line indicates what happens naturally, while the red line shows what happens with the CPU governor optimization enabled. Note the more gradual frequency ramp up/down in the natural case. In the case of this test, all you're getting is the added performance during that slow ramp time. For benchmarks that repeat many tiny loops, these differences could definitely add up. In situations where everyone is shipping the same exact hardware, sometimes that extra few percent is enough to give the folks in marketing a win, which is why any of this happens in the first place.
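A rough way to see why short loops benefit most is a toy model in Python. It assumes the governor ramps frequency linearly from an idle clock to the max clock and then holds it, and it treats work done as proportional to clock cycles delivered in a fixed time window. The clocks and ramp time below are hypothetical placeholders, not measurements from any device.

```python
def cycles_natural(duration_s: float, f_min: float, f_max: float, ramp_s: float) -> float:
    """Cycles delivered when frequency ramps linearly from f_min to f_max over ramp_s."""
    if duration_s <= ramp_s:
        slope = (f_max - f_min) / ramp_s
        return f_min * duration_s + 0.5 * slope * duration_s ** 2
    ramp_cycles = 0.5 * (f_min + f_max) * ramp_s
    return ramp_cycles + f_max * (duration_s - ramp_s)


def boost_uplift(duration_s: float, f_min: float = 0.4e9,
                 f_max: float = 1.9e9, ramp_s: float = 0.2) -> float:
    """Percent extra work done when the CPU is pinned at f_max from the start."""
    boosted = f_max * duration_s
    natural = cycles_natural(duration_s, f_min, f_max, ramp_s)
    return (boosted / natural - 1.0) * 100.0


# Hypothetical run lengths: a tiny loop, a one second loop, a ten second run.
for duration in (0.05, 1.0, 10.0):
    print(f"{duration:>5.2f} s run: +{boost_uplift(duration):.1f}% from boosting")
```

In this model the advantage shrinks toward zero as the run gets longer, since the ramp becomes a smaller fraction of total run time.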

Even when the Snapdragon 600 based SGS4 recognizes AndEBench it doesn't seem to get in the way of thermal throttling. A few runs of the test and I saw clock speeds drop down to under 1.7GHz for a relatively long period of time before ramping back up. I should note that the power/thermal profiles do look different when you let the governor work its magic vs. overriding things, which obviously also contributes to any performance deltas.

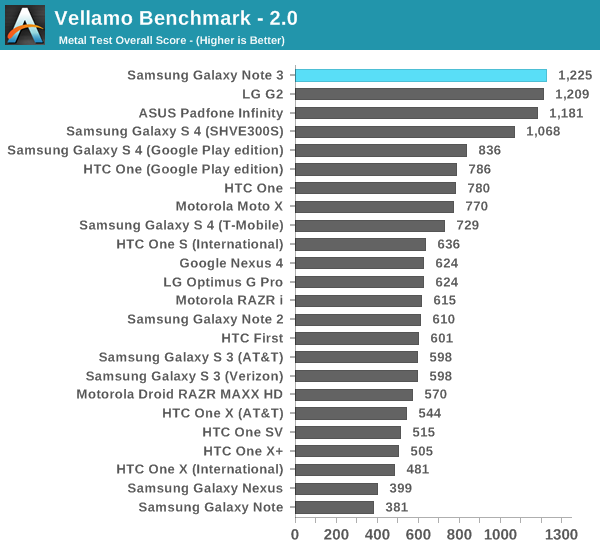

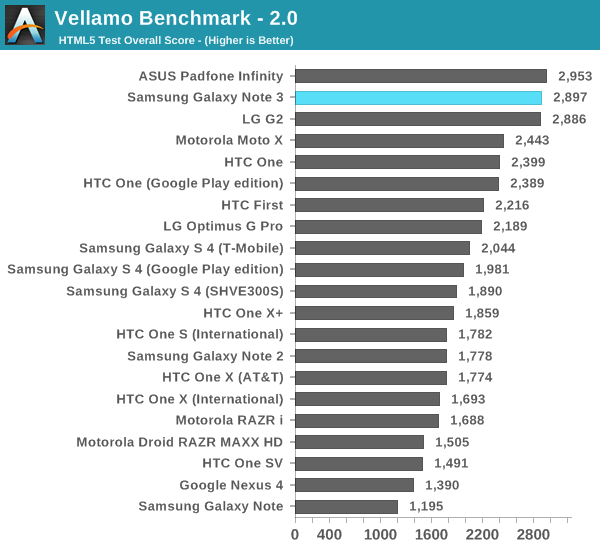

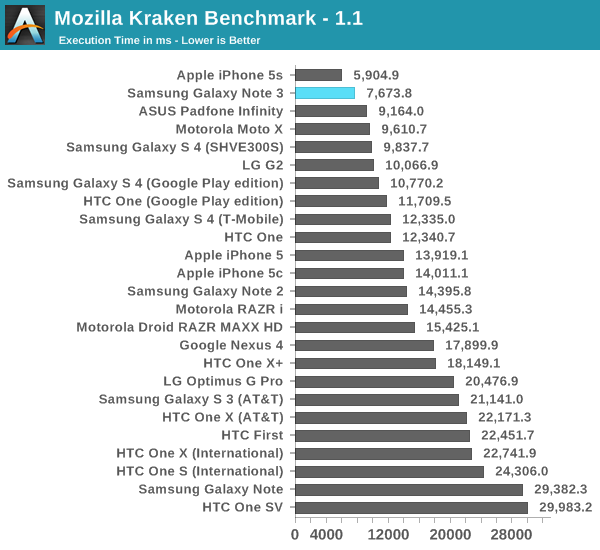

Vellamo is interesting as all of the flagships seem to game this test, which sort of makes the point of the optimization moot:

Any wins the Galaxy Note 3 achieves in our browser tests are independent of the CPU frequency cheat/optimization discussed above. It’s also important to point out that this is why we treat our suite as a moving target. I introduced Kraken into the suite a little while ago because I was worried that SunSpider was becoming too much of a browser optimization target. The only realistic solution is to continue to evolve the suite ahead of those optimizing for it. The more attention you draw to certain benchmarks, the more likely they are to be gamed. We constantly play this game of cat and mouse on the PC side, it’s just more frustrating in mobile since there aren’t many good benchmarks to begin with. Note that pretty much every CPU test that’s been gamed at this point isn’t a good CPU test to begin with.

Don’t forget that we’re lucky to be able to so quickly catch these things. After our piece in July I figured one of two things would happen: 1) the optimizations would stop, or 2) they would become more difficult to figure out. At least in the near term, it seems to be the latter. The framework for controlling all of this has changed a bit, and I suspect it’ll grow even more obfuscated in the future. There’s no single solution here, but rather a multi-faceted approach to make sure we're ahead of the curve. We need to continue to rev our test suite to stay ahead of any aggressive OEM optimizations, we need to petition the OEMs to stop this madness, we need to work with the benchmark vendors to detect and disable optimizations as they happen and avoid benchmarks that are easily gamed. Honestly this is the same list of things we do on the PC side, so we've been doing it in mobile as well.

The Relationship Between CPU Frequency and GPU Performance

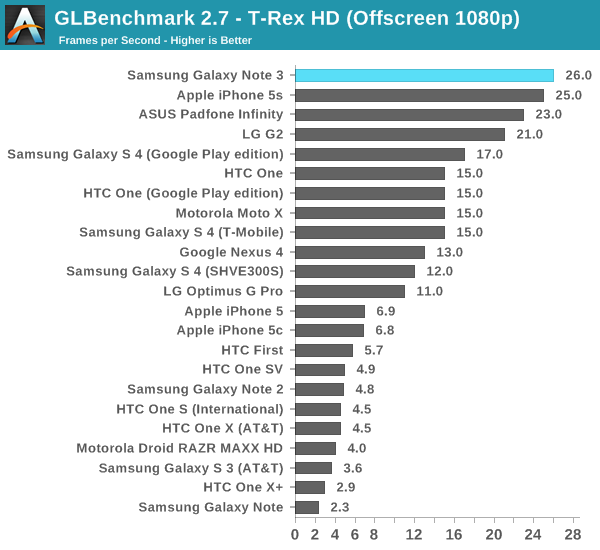

The GPU front is where things are trickier. GFXBench 2.7 (aka GLBenchmark) somehow avoids being an optimization target, at least for the CPU cheat we’re talking about here. There are always concerns about rendering accuracy, dropping frames, etc., but it looks like the next version of GFXBench will help make sure that no one is doing that quite yet. Kishonti (the makers of GFX/GLBench) work closely with all of the OEMs and tend to do a reasonable job at keeping everyone honest but they’re in a tricky spot as the OEMs also pay for the creation of the benchmark (via licensing fees to use it). Running a renamed version of GFXBench produced similar scores to what we already published on the Note 3, which ends up being a bit faster than what LG’s G2 was able to deliver. As Brian pointed out in his review however, there are driver version differences between the platforms as well as differences in VRAM sizes (thanks to the Note 3's 3GB total system memory):

Note 3: 04.03.00.125.077

Padfone: 04.02.02.050.116

G2: 4.02.02.050.141

Also keep in mind that both LG and Samsung will define their own governor behaviors on top of all of this. Even using the same silicon you can choose different operating temperatures you’re comfortable with. Of course this is another variable to game (e.g. increasing thermal headroom when you detect a benchmark), but as far as I can tell even in these benchmark modes thermal throttling does happen.

The two new targets are tests that we use: 3DMark and Basemark X. The latter tends to be quite GPU bound, so the impact of a higher CPU frequency is more marginalized, but with a renamed version we can tell for sure:

Galaxy Note 3 Performance in Basemark X

| Test | Basemark X | Basemark X - Renamed | Perf Increase |
|---|---|---|---|
| On screen | 16.036 fps | 15.525 fps | +3.3% |
| Off screen | 13.528 fps | 12.294 fps | +10% |

The onscreen differences make sense to me, it's the off screen results that are a bit puzzling. I'm worried about what's going on with the off-screen rendering buffer. That seems to be too little of a performance increase if the optimization was dropping frames (if you're going to do that, might as well go for the gold), but as to what is actually going on I'm not entirely sure. We'll keep digging on this one. The CPU optimization alone should net something around the 3% gain we see in the on screen test.
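One way to put a number on the puzzling part is to split the off screen gain into the piece the CPU optimization should explain and whatever is left over. This sketch rests on two assumptions of mine: that the CPU-only gain off screen equals the measured on screen gain, and that the gains compose multiplicatively. Neither is established by the data.

```python
def speedup(optimized_fps: float, renamed_fps: float) -> float:
    """Ratio of the whitelisted score to the renamed (non-whitelisted) score."""
    return optimized_fps / renamed_fps


on_screen = speedup(16.036, 15.525)   # taken as the CPU-only effect (assumption)
off_screen = speedup(13.528, 12.294)

# Gain left unexplained after dividing out the assumed CPU-only effect.
residual_pct = (off_screen / on_screen - 1.0) * 100.0

print(f"On screen:   +{(on_screen - 1.0) * 100.0:.1f}%")
print(f"Off screen:  +{(off_screen - 1.0) * 100.0:.1f}%")
print(f"Unexplained: +{residual_pct:.1f}%")
```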

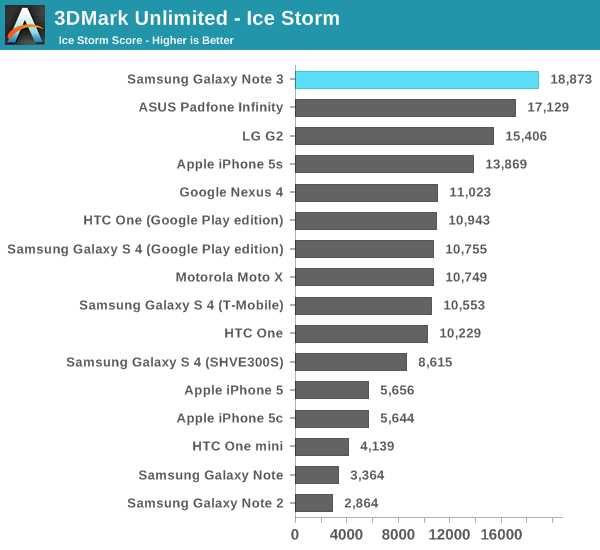

3DMark is a bigger concern. As we discovered in our Moto X review, 3DMark is a much more balanced CPU/GPU test. Driving CPU frequencies higher can and will impact the overall scores here.

ASUS thankfully doesn’t do any of this mess with their Padfone Infinity in the GPU tests. Note that there are still driver and video memory differences between the Padfone Infinity and the Galaxy Note 3, but we’re seeing roughly a 10% performance advantage in the overall 3DMark Extreme score (the Padfone also has a slightly lower clocked CPU - 2.2GHz vs. 2.3GHz). It's tough to say how much of this is due to the CPU optimization vs. how much is up to driver and video memory differences (we're working on a renamed version of 3DMark to quantify this exactly).

The Futuremark guys have a lot of experience with manufacturers trying to game their benchmarks so they actually call out this specific type of optimization in their public rules:

"With the exception of setting mandatory parameters specific to systems with multiple GPUs, such as AMD CrossFire or NVIDIA SLI, drivers may not detect the launch of the benchmark executable and alter, replace or override any parameters or parts of the test based on the detection. Period."

If I'm reading it correctly, both HTC and Samsung are violating this rule. What recourse Futuremark has against the companies is up in the air, but here we at least have a public example of a benchmark vendor not being ok with what's going on.

Note that GFXBench 2.7, where we don't see anyone run the CPU optimization, shows a 13% advantage for the Note 3 vs. Padfone Infinity. Just like the Exynos 5410 optimization there simply isn't a lot to be gained by doing this, making the fact that the practice is so widespread even more frustrating.

Final Words

As we mentioned back in July, all of this is wrong and really isn't worth the minimal effort the OEMs put into even playing these games. If I ran the software group at any of these companies, weighing the cost of chasing these optimizations against the negativity in the press would be an easy decision (not to mention the whole morality argument). It's also worth pointing out that nearly all Android OEMs are complicit in creating this mess. We singled out Samsung for the initial investigation as they were doing something unique on the GPU front that didn't apply to everyone else, but the CPU story (as we mentioned back in July) is a widespread problem.

Ultimately the Galaxy Note 3 doesn’t change anything from what we originally reported. The GPU frequency optimizations that existed in the Exynos 5410 SGS4 don’t exist on any of the Snapdragon platforms (all applications are given equal access to the Note 3’s 450MHz max GPU frequency). The CPU frequency optimization that exists on the SGS4, LG G2, HTC One and other Android devices, still exists on the Galaxy Note 3. This is something that we’re going to be tracking and reporting more frequently, but it’s honestly no surprise that Samsung hasn’t changed its policies here.

The majority of our tests aren’t impacted by the optimization. Virtually all Android vendors appear to keep their own lists of applications that matter and need optimizing. The lists grow/change over time, and they don’t all overlap. With these types of situations it’s almost impossible to get any one vendor to be the first to stop. The only hope resides in those who don’t partake today, and of course with the rest of the ecosystem.

We’ve been working with all of the benchmark vendors to try and stay one step ahead of the optimizations as much as possible. Kishonti is working on some neat stuff internally, and we’ve always had a great relationship with all of the other vendors - many of whom are up in arms about this whole thing and have been working on ways to defeat it long before now. There’s also a tremendous amount of pressure the silicon vendors can put on their partners (although not quite as much as in the PC space, yet), not to mention Google could try to flex its muscle here as well. The best we can do is continue to keep our test suite a moving target, avoid using benchmarks that are very easily gamed and mostly meaningless, continue to work with the OEMs in trying to get them to stop (though tough for the international ones) and work with the benchmark vendors to defeat optimizations as they are discovered. We're presently doing all of these things and we have no plans to stop. Literally all of our benchmarks have either been renamed or are in the process of being renamed to non-public names in order to ensure simple app detects don't do anything going forward.

The unfortunate reality is this is all going to get a lot worse before it gets better. We wondered what would happen with the next platform release after our report in July, and the Note 3 told us everything we needed to know (you could argue that it was too soon to incite change, perhaps SGS5 next year is a better test). Going forward I expect all of this to become more heavily occluded from end user inspection. App detects alone are pretty simple, but what I expect to happen next are code/behavior detects and switching behavior based on that. There are thankfully ways of continuing to see and understand what’s going on inside these closed platforms, so I’m not too concerned about the future.

The hilarious part of all of this is we’re still talking about small gains in performance. The impact on our CPU tests is 0 - 5%, and somewhere south of 10% on our GPU benchmarks as far as we can tell. I can't stress enough that it would be far less painful for the OEMs to just stop this nonsense and instead demand better performance/power efficiency from their silicon vendors. Whether the OEMs choose to change or not however, we’ve seen how this story ends. We’re very much in the mid-1990s PC era in terms of mobile benchmarks. What follows next are application based tests and suites. Then comes the fun part of course. Intel, Qualcomm and Samsung are all involved in their own benchmarking efforts, many of which will come to light over the coming years. The problem will then quickly shift from gaming simple micro benchmarks to which “real world” tests are unfairly optimized for which architectures. This should all sound very familiar. To borrow from Brian’s Galaxy Gear review (and BSG): “all this has happened before, and all of it will happen again.”

374 Comments


Wilco1 - Wednesday, October 2, 2013 - link

It's not cheating simply because it's the benchmarks that are broken. No need to invoke philosophy!

Any benchmark that runs for only a few milliseconds does not represent a real workload. Anything that runs long enough (several seconds at least) will always run at the maximum frequency (and thus gives the correct score without any boosting). Ie. the boosting is only required to get the correct result in broken benchmarks that run for short periods.

So rather than incorrectly calling out Samsung and others for cheating, we should call out those rubbish benchmarks and retire them: AndEBench, AnTuTu, Sunspider, the list is long...

virtual void - Thursday, October 3, 2013 - link

What is more similar to the workload that most users will actually run on their phones:

* long running tasks that has the CPU and/or GPU pegged at 100%

* short events that need to deliver the result as fast as possible

I know you hate AnTuTu, but that benchmark runs things that try to emulate the behavior of mobile applications. Not something you can use to compare different CPU architectures, but it tells you more about your user experience compared to benchmarks like Geekbench (which btw has an average L1$ hit rate of 95-97% on Sandy Bridge, not really what you would see on "real" applications).

Wilco1 - Friday, October 4, 2013 - link

Rendering a typical complex webpage is a long running task which can keep multiple CPUs occupied. It's certainly not something that just takes 25ms before it is finished like some of these micro benchmarks.

AnTuTu doesn't run anything at all like mobile applications. For example the integer test is simply the 20 year old ByteMark which was never regarded as a mobile phone benchmark, let alone as a good benchmark. The fact that the developer doesn't even understand the difference between addition and geomean really says it all.

No benchmark gives an approximation of the "user experience" of a GUI. That's something that is extremely subjective and typically completely unrelated to CPU performance (people want things like an immediate feedback when they click on something and smooth animations - these are all GUI design related).

So all we can do is measure maximum CPU and GPU performance, and that is exactly what current benchmarks try to do, for better or worse. Geekbench is certainly one of the best ones we have right now, and AnTuTu one of the worst (especially given all the cheating we have seen there).

akdj - Wednesday, October 16, 2013 - link

Understand your concern with AnTuTu. And totally agree. However, others like G/B and their crew that's 'understanding' the need and desire for benchmarking mobile performance in a 'meaningful' way--@ least one that can be 'felt' and immediately observed by the end user. Fluid GUI. Instant response from the 'tap', no lag while surfing, reading, multitasking or the transition from putting the caller on speaker and looking up show times on Flixter being as easy to do as it sounds (this is remarkably fluid, fast and efficient on AT&T with the iPhone 5 & 5s...my Note 2 though has challenges). Geekbench is upping the ante so to speak and responding to the reviewers and concerned hobbyists and technical folk

That said...we are literally 50 months into 'reasonable and comparable' performance in mobile to really GAS, right? I mean....benchmarking the original Treo or the older blackberry devices...was that an actual thing? Or at that time didn't we choose our platform and just get what we could afford? I'm 42. They weren't around in high school...and when they became affordable and reasonable and Nokia ran the world, I bought it. Probably <20 years ago. Never bought a phone. Just got the new 'free' with contract flip phone. I even remember wondering why a camera on the phone was necessary as short as 7 or 8 years ago.

In the past 4 years, Moore's Law hasn't necessarily left the 'computer' world but it's certainly evident in the mobile sector...and a reason Intel is in hyperdrive to create and build out high performance /low energy silicon and SOCs. Something they've become adept to with the iGPU in the last several years

These tests are still benchmarks AND still relevant PRECISELY because of 'where and when we are' in mobile technology. Hard to believe just 6 years ago....we didn't consider our Blackberry or Treo an actual computer in our pocket...they had shitty and slow processors, data was at a snail's pace....no apps not provided by the carrier or OEM (Snake, anyone?).

We are in the infancy of mobile computing. Phones and tablets alike. Processing both CPU and GPU as well as memory control continue to 'double every 12-18 months'. Apple has started something I'm sure Google and Samsung and Sony will all grab on to over the next 5 years. Creating their own chips and instruction set...hopefully Google will standup to the carriers and strip TouchWiz from Sammy....lots of changes in the interim

For the time being though...Sunspider is an extremely valuable test as these ARE pocket computers. Accessing sites, graphic performance and central processing...and geekbench's awareness, we'll get there. You're a familiar responder. I don't know that I've read an Anand review in three years that doesn't mention if not beg for better and more specific testing. For today though, we have what we have and it's (as the guys and gals at Geekbench will tell you) not a lucrative business. Just take a gander at any specific machine, CPU or GPU and notice the '32 bit' majority. Even getting folks to pay for the 64 bit version....maybe $10/$12? It's like pulling teeth. Only the true 'geeks' and 'real' reviewers will spend the money. Would be awesome if someone here, keeping up to date with technology and readying their master's thesis would come up with, code and develop a true 'cross platform, cross SoC' benchmarking app. Until then, nothing will EVER compare to end user experience. Lack of issues. Quantity of quality apps and last but certainly not least, accessible and helpful customer support

Again, for many, their smartphone HAS become their personal computer. In the truest sense of the word....when you look at what AOL was doing to those that 'didn't get it' just a decade ago. More software than any point in computing history. Gaming at reasonable prices. Extremely good battery life and amazing displays.

While I love reading a lot of the reviews and comments here at Anandtech...sometimes I have to remind myself....benchmarks, while an objective measure of performance is nothing when compared to the analysis and the 'words' outside of the little b/m charts that matter. The 'subjective' user experience felt and shared by the author

Tl/dr--- we have what we have. It's early in mobile...and it's the wave of the computing future for most folks outside of 'work'. Until someone codes better tools to measure the objective performance, subjective performance will have 'to do' ;-)

KPOM - Thursday, October 3, 2013 - link

I disagree. Few people are going to base a purchase solely on a benchmark, but it is still a valid datapoint if we can rely on them. Benchmarks are supposed to replicate real world behavior. Obviously everyone's mileage will vary, but gaming benchmarks serves no valid purpose whatsoever. The way Samsung did it, no developer can benefit. Samsung just identifies the app and if it detects a benchmark disables all the power management features to make the phone seem faster than it really is.

Wilco1 - Thursday, October 3, 2013 - link

You're thinking of it from the wrong way. Samsung is actually enabling to run the benchmarks correctly at their real speed. So the phone does not really seem faster than it really is, as it is the correct speed. The reason the original benchmarks run slower is because they are badly written (for example when benchmarking professionals always do a warmup run first, run for at least several seconds, run several times and then take the fastest run).

bah12 - Friday, October 4, 2013 - link

Sorry but you are the one that has it backward. Samsung is ONLY letting benchmarks run at this speed. Technically yes it is the fastest speed the chip can run, but the code only lets that particular app do it. It is presenting data about the device that is not attainable by a 3rd party app.

Wilco1 - Friday, October 4, 2013 - link

No. Any application can run at the maximum clockspeed. Whether it is boosted or not makes no difference at all. This is exactly why it is not cheating - there is no overclocking going on.

The boosting simply means that the benchmark runs at the maximum speed from start to finish rather than letting frequency increase from whatever it previously was to the maximum (this takes some time if you were idling before starting the benchmark). You can see this clearly happening in the AndEBench graph in the article. The non-boosted version runs ~80% of the time at the maximum frequency, the boosted 100%. The performance difference due to this was 4.4%.

akdj - Wednesday, October 16, 2013 - link

"Any application can run at the maximum clockspeed"

Really? In the real world? Why then have the only 'triggers' to the CPU governor been specific to the only major benchmarking suites used ubiquitously by all of the review sites, tech blogs? It's impossible to get that same power from Asphalt 8? Editing and manipulation of photos and videos...why? Maybe if because 'if allowed' the battery would be dead in 48 minutes of gaming....or an hour and a half playing in Instagram? I'm genuinely curious why you seem to be the only one defending this type of bullshit? Genuinely

thesglife - Saturday, October 5, 2013 - link

Yes, an asterisk would be appropriate.