NVIDIA Kepler Cards Get HDMI 4K@60Hz Support (Kind Of)

by Ryan Smith on June 20, 2014 10:00 AM EST

An interesting feature has turned up in NVIDIA’s latest drivers: the ability to drive certain displays over HDMI at 4K@60Hz. This is a feat that would typically require HDMI 2.0 – a feature not available in any GPU shipping thus far – so to say it’s unexpected is a bit of an understatement. However, as it turns out, the situation is not quite as cut and dried as it first appears, and there is a notable catch.

First discovered by users, including AT Forums user saeedkunna, the feature works as follows: when Kepler-based video cards using NVIDIA’s R340 drivers are paired with very recent 4K TVs, they gain the ability to output to those displays at 4K@60Hz over HDMI 1.4. These setups were previously limited to 4K@30Hz by HDMI 1.4’s available bandwidth, and while that limitation hasn’t gone anywhere, TV manufacturers and now NVIDIA have implemented an interesting workaround that teeters between clever and awful.

Lacking the available bandwidth to fully support 4K@60Hz until the arrival of HDMI 2.0, the latest crop of 4K TVs such as the Sony XBR 55X900A and Samsung UE40HU6900 have implemented what amounts to a lower image quality mode that allows for a 4K@60Hz signal to fit within HDMI 1.4’s 8.16Gbps bandwidth limit. To accomplish this, manufacturers are making use of chroma subsampling to reduce the amount of chroma (color) data that needs to be transmitted, thereby freeing up enough bandwidth to double the refresh rate from 30Hz to 60Hz.

An example of a current generation 4K TV: Sony's XBR 55X900A

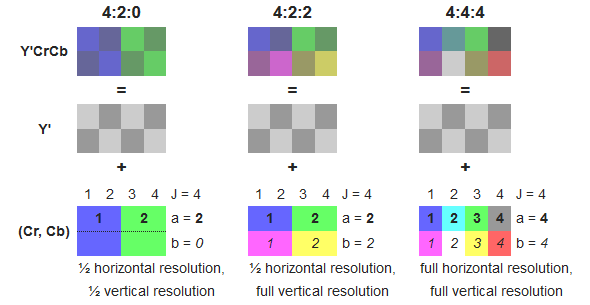

Specifically, manufacturers are making use of Y'CbCr 4:2:0 subsampling, a lower quality sampling mode that requires ¼ the color information of regular Y'CbCr 4:4:4 sampling or RGB sampling. By using this sampling mode manufacturers are able to transmit an image that utilizes full resolution luma (brightness) but a fraction of the chroma resolution, allowing manufacturers to achieve the necessary bandwidth savings.

Wikipedia: diagram on chroma subsampling
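As a rough check on the bandwidth argument, the arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope estimate, not a description of how any particular TV or driver computes it. It assumes 8 bits per sample and a total frame size of 4400×2250 pixels including blanking (the commonly used timing for 3840×2160), neither of which is stated in the article; the 8.16Gbps limit is the figure quoted above.

```python
# Back-of-the-envelope HDMI data-rate estimate for 4K modes.
# Assumption (not from the article): total frame timing of 4400 x 2250
# pixels including blanking, and 8 bits per sample.
H_TOTAL, V_TOTAL = 4400, 2250

# HDMI 1.4 usable data rate quoted in the article, in Gbps.
HDMI_1_4_DATA_RATE_GBPS = 8.16

# Average bits per pixel: 4:4:4/RGB carries three 8-bit samples per pixel;
# 4:2:0 carries 8 bits of luma plus Cb and Cr shared across a 2x2 block
# (8 + 8/4 + 8/4 = 12).
BITS_PER_PIXEL = {"4:4:4/RGB": 24.0, "4:2:0": 12.0}


def data_rate_gbps(refresh_hz, bits_per_pixel):
    """Return the approximate video data rate in Gbps."""
    return H_TOTAL * V_TOTAL * refresh_hz * bits_per_pixel / 1e9


def fits_hdmi_1_4(refresh_hz, sampling):
    """Return True if the mode fits within the HDMI 1.4 data rate."""
    rate = data_rate_gbps(refresh_hz, BITS_PER_PIXEL[sampling])
    return rate <= HDMI_1_4_DATA_RATE_GBPS


if __name__ == "__main__":
    for hz, sampling in [(30, "4:4:4/RGB"), (60, "4:4:4/RGB"), (60, "4:2:0")]:
        rate = data_rate_gbps(hz, BITS_PER_PIXEL[sampling])
        verdict = "fits" if fits_hdmi_1_4(hz, sampling) else "does not fit"
        print(f"4K@{hz}Hz {sampling}: {rate:.3f} Gbps ({verdict})")
```

Under these assumptions, 4K@60Hz with 4:2:0 sampling needs about as much bandwidth as 4K@30Hz with full 4:4:4 sampling, which is why it can squeeze into an HDMI 1.4 link while full resolution 4K@60Hz cannot.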

The use of chroma subsampling is as old as color television itself; however, its use in this fashion is uncommon. Most HDMI PC-to-TV setups to date use RGB or 4:4:4 sampling, both of which are full resolution and functionally lossless. 4:2:0 sampling on the other hand is not normally used for the last stage of transmission between source and sink devices – in fact HDMI didn’t even officially support it until recently – and is instead used in the storage of source material itself, be it Blu-ray discs, TV broadcasts, or streaming videos.

Perceptually 4:2:0 is an efficient way to throw out unnecessary data, making it a good way to pack video, but at the end of the day it’s still ¼ the color information of a full resolution image. Since video sources are already 4:2:0 this ends up being a clever way to transmit video to a TV, as at the most basic level a higher quality mode would be redundant (post-processing aside). But while this works well for video it also only works well for video; for desktop workloads it significantly degrades the image as the color information needed to drive subpixel-accurate text and GUIs is lost.
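To illustrate why fine desktop detail suffers, here is a minimal Python sketch of 4:2:0 subsampling applied to a single chroma plane. It assumes the simplest possible filters (averaging each 2×2 block on the way down, pixel replication on the way up); real encoders and TVs may use different filters and chroma siting, and the function names are ours, purely for illustration.

```python
def subsample_420(plane):
    """Average each 2x2 block of a chroma plane into one sample.

    Assumes even width and height. Simple box averaging is an
    illustrative choice, not what any specific device is known to use.
    """
    height, width = len(plane), len(plane[0])
    return [
        [
            (plane[y][x] + plane[y][x + 1]
             + plane[y + 1][x] + plane[y + 1][x + 1]) / 4
            for x in range(0, width, 2)
        ]
        for y in range(0, height, 2)
    ]


def upsample_nearest(plane):
    """Rebuild a full-size plane by repeating each sample over a 2x2 block."""
    out = []
    for row in plane:
        wide = [value for value in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


# A 4x4 Cb plane: neutral chroma (128) with a one-pixel-wide column of
# strongly colored pixels (240), like the edge of colored text.
cb = [[128, 240, 128, 128] for _ in range(4)]

small = subsample_420(cb)           # 2x2 plane: a quarter of the samples
restored = upsample_nearest(small)  # what the display reconstructs

for row in restored:
    print(row)
```

In this toy case the single saturated column comes back as a two-pixel-wide band at roughly half strength, while the luma plane (not shown) would be untouched. That kind of smearing goes unnoticed in video but is exactly what blurs colored text and thin GUI lines.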

In any case, with 4:2:0 4K TVs already on the market, NVIDIA has confirmed that they are enabling 4:2:0 4K output on Kepler cards with their R340 drivers. What this means is that Kepler cards can drive 4:2:0 4K TVs at 60Hz today, but they are doing so in a manner that’s only useful for video. For HTPCs this ends up being a good compromise and as far as we can gather this is a clever move on NVIDIA’s part. But for anyone who is seeing the news of NVIDIA supporting 4K@60Hz over HDMI and hoping to use a TV as a desktop monitor, this will still come up short. Until the next generation of video cards and TVs hit the market with full HDMI 2.0 support (4:4:4 and/or RGB), DisplayPort 1.2 will remain the only way to transmit a full resolution 4K image at 60Hz.

54 Comments

dmytty - Friday, June 20, 2014

I was surprised that there were no active display adapters shown at Computex '14 for DP 1.2 to HDMI 2.0 with support for 4k 60 fps. Did any 'journalists' look for these kinds of novel little things or were they too busy filing the press releases for another 28" LCD monitor? Seriously, coverage from IT websites has really been a letdown (and that includes this site).

Regarding the active adapter, it seems that with a few hundred thousand tv sets and computer monitors being sold with HDMI 2.0, there would be a nice market for enabling PC connections to these monitors.

Me thinks there might be some underhanded dealings in Taiwan and Korea whereby the big display manufacturers (and the HDMI cartel) are restricting the use of TV's as computer monitors...and limiting refresh rate has been the way to do it.

Of course, we can also thank AMD and Nvidia for selling $1k+ GPU's which are handicapped by a little HDMI transmitter chip.

To those who wonder, I'm typing this on LG's 2014 55" 4k entry (55 UB8500 series). Desktop GTX 570 and laptop GT 650m in Asus NV56 both handle things well via DP 1.2 to HDMI 1.4 active dongle and straight HDMI output on laptop (both at 30 hz).

Sitting 40" from the screen, my eyes have never felt better. Yes, it's 30 hz, but I'm doing zero gaming. Lots of CAD work and web research. CAD productivity has improved (worked on a 30" monitor prior). Firefox, Autodesk Inventor 2014 and Onenote 2010 are great with massive screen real estate and the resulting <90 PPI (yet still 'Retina' at 40") means everything works pretty well via simple Windows 7 scaling at 150%.

Still gotta find me that adapter just to see the difference between 30 Hz and 60 Hz. To those who are hesitating though, please don't. Sitting close to a 4k screen is awesome.

Pork@III - Saturday, June 21, 2014

Dammit! hdmi 2.0 it is already for use at 1 year. Nvidia play fucking games with consumers.

OrphanageExplosion - Saturday, June 21, 2014

Neat solution. 4:2:0 is around half the bandwidth of 4:4:4 and RGB24, so a good match for HDMI 1.4. Not a good idea for the desktop, but in a living room where you're situated some way off the screen, you won't notice the difference.

crimsonson - Sunday, June 22, 2014

You won't notice the difference because human vision is comparative. So unless you see the original video you will not be able to tell.

Second, subsampling do the worse damage with video that is already degraded. It exacerbates noise, blockiness and other quality symptoms.

Hisma1 - Saturday, June 21, 2014

Article title is slightly misleading. Maxwell-based cards (750 ti) also support this feature, not just kepler. I have confirmed it working with my UHD TV connected to an HTPC with a 750ti on board.

Still testing, but so far so good. The display does appear "dimmer" and less vibrant though, which is a slight concern.

Nuno Simões - Saturday, June 21, 2014

It's not implying that only Kepler will get this, it's informing that Kepler will get it.

godrilla - Saturday, June 21, 2014

Best 4k tvs have about 3 times more input lag than sony's bravia full hd tvs. Gaming on a 4k TV is still far far away from perfect, which would be an hdmi 2.0 ( with new codec ) tv at 65 inches to appreciate those extra pixels and then there is the hardware to process all that is still way too expensive, I'm predicting another few years post maxwell to be closer to an ideal experience.

Hawk269 - Saturday, June 21, 2014

I have tested this as well and it does work. On desktop use, the first issue I noticed is that my mouse pointer, which is a white arrow with a black outline, the outline was not apparent. So on a white background it was hard to see where the mouse was. However, upon booting up a game, it looked fantastic. 4k at 60fps was a real treat.

I currently own a Sony 4k Set and a new Panasonic AX800 in my gaming room. The Panasonic has a Display Port and I am using 2 GTX Titan Blacks to get 4k/60fps in games. Comparing the image in a game (Elder Scrolls On-Line) they look almost identical. But when going to desktop, it is apparent that one using HDMI is not of the same quality as the Display Port which is sending a true 4:4:4 image to the screen.

I am going to test more and compare them some more. My Panasonic suffers from Banding issues, so I need to decide if I will exchange it or get a refund. I had last years Panasonic 4k due to them being the only ones including display port, but I went through 2 sets with really bad banding issues, so not sure I want to get another one now.

kalita - Sunday, July 13, 2014

I have Samsung F9000 UHD TV (with updated connect box that supports HDMI 2.0) and a NVidia 750Ti connected to it. Once I updated to 343 beta driver, the 4k 60Hz resolution appeared. When I switch to it, both the TV and card report 60Hz. Unfortunately I can see that the display is still updated at 30Hz.

I did some testing by writing a simple Direct3D program and figured out that only every other frame is displayed. Basically, the refresh rate is 60Hz but only every other Present call is visible on the TV. I don't know whether it's the card or the TV dropping half the frames.

Has anyone actually tried enabling this 4:2:0 mode and experienced smooth 60Hz in UHD?

Semore666 - Sunday, June 28, 2015

Hey mate, I've got the same TV as you and a GTX 780 OC. I've connected it up with a few different HDMI cables including one from eBay claiming to be 2.0 and I'm only getting 30fps. I'm going crazy! whats the solution to get 60fps since there doesn't seem to be any display port to hdmi 2.0 and the TV doesn't take display port. Thanks in advance. I've currently got driver 353.30 - surely this would support it a year later...