The ASRock X99 Extreme11 Review: Eighteen SATA Ports with Haswell-E

by Ian Cutress on March 11, 2015 8:00 AM EST

Posted in: Motherboards, Storage, ASRock, X99, LGA2011-3

Gaming Performance on GTX 770s

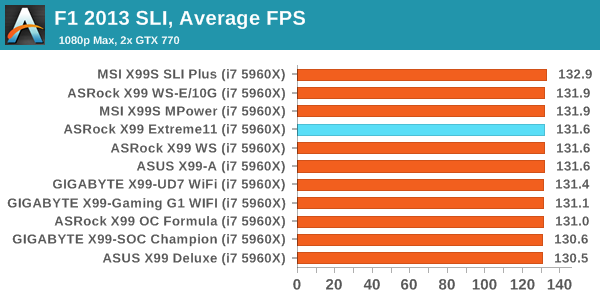

F1 2013

First up is F1 2013 by Codemasters. I am a big Formula 1 fan in my spare time, and nothing makes me happier than carving up the field in a Caterham, waving to the Red Bulls as I drive by (because I play on easy and take shortcuts). F1 2013 uses the EGO Engine and, like other Codemasters games, is very playable even on old hardware. To beef up the benchmark a bit, we devised the following scenario for the benchmark mode: one lap of Spa-Francorchamps in the heavy wet, following Jenson Button in the McLaren, who starts on the grid in 22nd place, with the field made up of 11 Williams cars, 5 Marussia and 5 Caterham in that order. This puts emphasis on the CPU to handle the AI in the wet, and allows for a good amount of overtaking during the automated benchmark. We test at 1920x1080 on Ultra graphical settings.

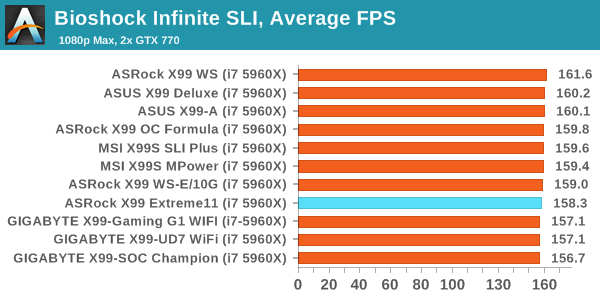

Bioshock Infinite

Bioshock Infinite was Zero Punctuation’s Game of the Year for 2013, uses the Unreal Engine 3, and is designed to scale with both cores and graphical prowess. We test the benchmark using the Adrenaline benchmark tool and the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.

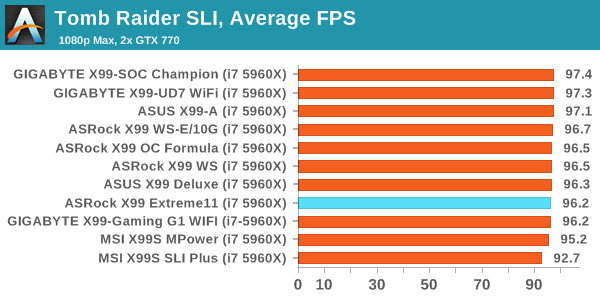

Tomb Raider

The next benchmark in our test is Tomb Raider, an AMD-optimized game lauded for its use of TressFX to create dynamic hair and increase in-game immersion. Tomb Raider uses a modified version of the Crystal Engine, and enjoys raw horsepower. We test the benchmark using the Adrenaline benchmark tool and the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.

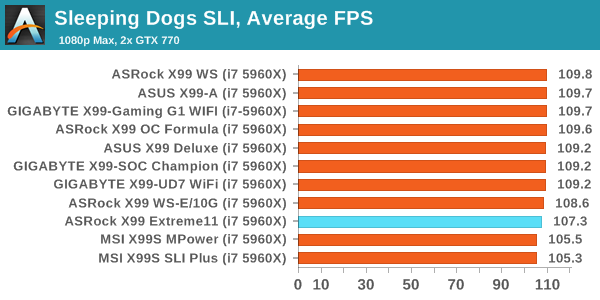

Sleeping Dogs

Sleeping Dogs is a benchmarking wet dream – a highly complex benchmark that can bring the toughest setup at high resolutions down into single figures. Having an extreme SSAO setting can do that, but at the right settings Sleeping Dogs is highly playable and enjoyable. We run the basic benchmark program laid out in the Adrenaline benchmark tool at the Xtreme (1920x1080, Maximum) performance setting, noting down the average frame rates and the minimum frame rates.
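
As a rough illustration of how the two figures noted in these tests relate to each other, here is a minimal Python sketch that derives an average and a minimum frame rate from a list of per-frame render times. The function name and the millisecond input format are assumptions made for this example; the benchmark tool's actual log format and its exact definition of "minimum" are not described here, and many tools report the slowest one-second window rather than the slowest single frame.

```python
def fps_summary(frame_times_ms):
    """Return (average_fps, minimum_fps) from per-frame times in milliseconds.

    Hypothetical helper for illustration only. The minimum is taken from the
    single slowest frame, which is one possible definition among several.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    # Average FPS is total frames divided by total elapsed time,
    # not the mean of the per-frame rates.
    average_fps = len(frame_times_ms) / total_seconds
    # The slowest frame sets the instantaneous minimum.
    minimum_fps = 1000.0 / max(frame_times_ms)
    return average_fps, minimum_fps


if __name__ == "__main__":
    avg, low = fps_summary([10.0, 10.0, 20.0])
    print(f"average: {avg:.1f} FPS, minimum: {low:.1f} FPS")
```

Dividing the frame count by the elapsed time keeps a handful of very fast frames from inflating the average, which is why the two numbers can diverge sharply in a stutter-prone run.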

58 Comments


Vorl - Wednesday, March 11, 2015 - link

ahh, like I said, I might have missed something. Thanks!

I was just looking at the Haswell family and know it does support IGP. I didn't know that 2011/-E doesn't.

yuhong - Saturday, March 14, 2015 - link

Yea, servers are where 2D graphics on a separate chip on the motherboard is still common.

Kevin G - Wednesday, March 11, 2015 - link

Native PCIe SSDs or 10G Ethernet controllers would make good use of the PCIe slots.

A PCIe slot will be necessary for graphics, at least during first time setup. Socket 2011-3 chips don't have integrated graphics so it is necessary. (It is possible to set up everything headless but you'll be glad you have a GPU if anything goes wrong.)

As for why use the LSI controller, it is a decent HBA for software RAID like that used under ZFS. For FreeNAS/NAS4Free users, the large number of ports enables some rather large arrays or features like hot sparing or SSD caching.

Vorl - Wednesday, March 11, 2015 - link

For 10G Ethernet controllers/Fiber HBAs you only need x8 slots (and "need" is such a strong word too, considering 10G Ethernet and 8Gb fiber only need 3 and 2 lanes respectively on PCIe 2.0). For super fast PCIe storage like SSDs you only need x4 slots, which is still 2GB/s on PCIe 2.0. They would have been better served adding more PCIe x8 slots, but then again, what would be the point of 18 SATA ports if you were going to add storage controllers in the PCIe x16 slots?

The four PCIe x16 slots make me think compute server, but that doesn't mesh with 18 SATA ports. If the database engines were able to use graphics cards now (which I know is being worked on), this system might make more sense.

It still makes me think they just tried to slap a bunch of stuff together without any real thought about what the system would really be used for. I am all for going fishing and seeing what people would use a board like this for, except that the $600 price tag puts it out of reach of all but the most specialized use cases.

As for the LSI controller, like someone mentioned above, you can get a cheaper board with 8x SATA PCIe cards to give you the same number of ports. More ports even, since most boards these days come with 6x SATA 6Gb/s connections. The 1MB of cache is so silly for the LSI chip that it's laughable.

The 128MB of cache for the RAID controller is a little better, but again, with just 6 RAID ports, what's the point?

The whole board is just a mess of confusion.
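
For anyone wanting to check the lane counts in the comment above, here is a minimal Python sketch of the arithmetic. It assumes the usual PCIe 2.0 figure of 5 GT/s per lane with 8b/10b encoding (4 Gb/s usable per lane, per direction), treats the 10G Ethernet and 8Gb fiber links at their nominal rates, and ignores protocol overhead. The function names are made up for this example.

```python
import math

# PCIe 2.0: 5 GT/s per lane, 8b/10b encoding -> 4 Gb/s usable per lane.
PCIE2_LANE_GBPS = 5.0 * 8 / 10


def lanes_needed(link_gbps, lane_gbps=PCIE2_LANE_GBPS):
    """Smallest whole number of lanes that can carry the given link rate."""
    return math.ceil(link_gbps / lane_gbps)


def slot_bandwidth_gbytes(lanes, lane_gbps=PCIE2_LANE_GBPS):
    """Usable one-direction bandwidth of a slot in gigabytes per second."""
    return lanes * lane_gbps / 8


if __name__ == "__main__":
    print("10G Ethernet lanes:", lanes_needed(10))         # nominal 10 Gb/s
    print("8Gb fiber lanes:", lanes_needed(8))             # nominal 8 Gb/s
    print("x4 slot GB/s:", slot_bandwidth_gbytes(4))
    print("x8 slot GB/s:", slot_bandwidth_gbytes(8))
```

Under those assumptions the results line up with the 3 lanes, 2 lanes and 2GB/s quoted in the comment. Slots come in power-of-two widths, so a card that needs 3 lanes would sit in an x4 or x8 slot in practice.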

3DoubleD - Wednesday, March 11, 2015 - link

Similar to my thinking in my post above.

If you are going for a software RAID setup with a ludicrous number of SATA ports, you can get a Z97 board with 3 full PCIe slots (x8, x8, x4) and 8 SATA ports. With three Supermicro cards (two 8x SATAIII and one 8x SATAII because of the x4 PCIe slot) you would have 32 SATA ports and it would cost you $650. The software RAID I use "only" accepts up to 25 drives, so that last card is only necessary if you need that 1 extra drive; for $500 you could run a 24 drive array with an M.2 or SATA Express SSD as a cache/system drive. And as you pointed out, since it is Z97, it would have onboard video.

Basically, given the price of these non-RAID add-in SATA cards, I'd say that any manufacturer making a marketing play on SATA ports needs to keep the cost of each additional SATA port to <$20/port over the price of a board with similar PCIe slot configurations.

As you said, if this board had 18 SATA ports that could support hardware RAID, then it would be worth the additional price tag. This is probably not possible though, since 10 SATA ports are from the chipset and the rest from an additional controller. For massive hardware RAID setups you're better off getting a PCIe 2.0 x16 card (for 16 SATAIII drives) or a PCIe 3.0 x16 card (if such a thing even exists, it could theoretically handle 32 SATAIII drives). I'm sure such large hardware RAID arrays become overwhelming for the controller and would cost a fortune.

Anyway, this must be some niche prosumer application that requires ludicrous amounts of non-RAID storage and 4 co-processor slots. I can't imagine what it is though.
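
The per-port figure in the comment above can be sanity-checked from its own numbers. This is a minimal Python sketch that uses only the $650 (32 ports) and $500 (24 ports) totals quoted there; the helper name is made up for this example, and the prices are the commenter's estimates rather than verified retail figures.

```python
def marginal_cost_per_port(price_with, price_without, ports_with, ports_without):
    """Extra dollars paid per extra SATA port when moving between two builds."""
    extra_ports = ports_with - ports_without
    if extra_ports <= 0:
        raise ValueError("the larger build must have more ports")
    return (price_with - price_without) / extra_ports


if __name__ == "__main__":
    # Z97 build with three add-in cards (32 ports, $650) versus the same
    # build with two cards (24 ports, $500), both figures from the comment.
    per_port = marginal_cost_per_port(650, 500, 32, 24)
    print(f"third card: ${per_port:.2f} per port")
    print("under the $20/port threshold:", per_port < 20)
```

On those figures the third card works out to $150 for 8 ports, which is where a threshold of roughly $20 per additional port comes from.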

Runiteshark - Wednesday, March 11, 2015 - link

No clue why they didn't do a LSI 3108 and have the port for the add on BBU and cache unit like Supermicro does on some of their boards. Also not sure why these companies can't put 10g copper connectors at minimum on these boards. Again, Supermicro does it without issue.

DanNeely - Wednesday, March 11, 2015 - link

There're people who think combining their gaming godbox and Blu-ray rip mega storage box into a single computer is a good idea. They're the potential market for a monstrosity like this.

You know what they say, "A fool and his money will probably make someone else rich."

Murloc - Wednesday, March 11, 2015 - link

I guess this is aimed at the rather unlikely situation of someone wanting both storage and computation/gaming in the same place.

You know, there are people out there who just want the best and don't care about wasting money on features they don't need.

Zak - Thursday, March 12, 2015 - link

I agree. For reasons Vorl mentioned this is a pointless board. I can't imagine a target market for this. My first reaction was also, wow, beastly storage server. But then yeah, different controllers. What is the point?

eanazag - Thursday, March 12, 2015 - link

It is not a server board. Haswell-E desktop board. I have no use for that many SATA ports but someone might.

2 x DVD or BD drives

2 x SSDs on RAID 1 for boot

Use Windows to mirror the two RAID 0 volumes below.

7 x SSDs in RAID 0

7 x SSDs in RAID 0

The mirrored RAID 0 volumes could get you about 3-6 GBps sequential read transfer rates, assuming 400 MBps per SSD. Maybe a little less in write speeds. All done with mediocre SSDs.

This machine would cost over $2000.
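
The 3-6 GBps estimate in the comment above follows from simple multiplication. This is a minimal Python sketch of that idealised arithmetic, using the 400 MBps per-SSD figure and the 7-drive stripes from the comment. It assumes perfect striping, assumes mirrored reads can be spread across both copies in the best case, and ignores any controller or chipset uplink limits; the names are made up for this example.

```python
SSD_READ_MBPS = 400       # per-drive sequential read, from the comment
DRIVES_PER_STRIPE = 7     # each RAID 0 volume
STRIPES_IN_MIRROR = 2     # two RAID 0 volumes mirrored in software


def stripe_read_mbps(drives, per_drive_mbps):
    """Ideal RAID 0 read rate: every drive contributes its full speed."""
    return drives * per_drive_mbps


def mirrored_read_range_mbps(drives, per_drive_mbps, copies):
    """(low, high): reads served by one copy only, or balanced over all copies."""
    one_copy = stripe_read_mbps(drives, per_drive_mbps)
    return one_copy, one_copy * copies


if __name__ == "__main__":
    low, high = mirrored_read_range_mbps(
        DRIVES_PER_STRIPE, SSD_READ_MBPS, STRIPES_IN_MIRROR
    )
    print(f"ideal sequential read: {low / 1000:.1f} to {high / 1000:.1f} GB/s")

    # Port budget for the layout listed in the comment:
    # 2 optical drives + 2 boot SSDs + two 7-drive stripes.
    ports_used = 2 + 2 + STRIPES_IN_MIRROR * DRIVES_PER_STRIPE
    print("SATA ports used:", ports_used)
```

Writes have to land on both copies of the mirror, so in this idealised model they top out at the speed of a single stripe, consistent with the "a little less in write speeds" remark.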