The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

FreeSync vs. G-SYNC Performance

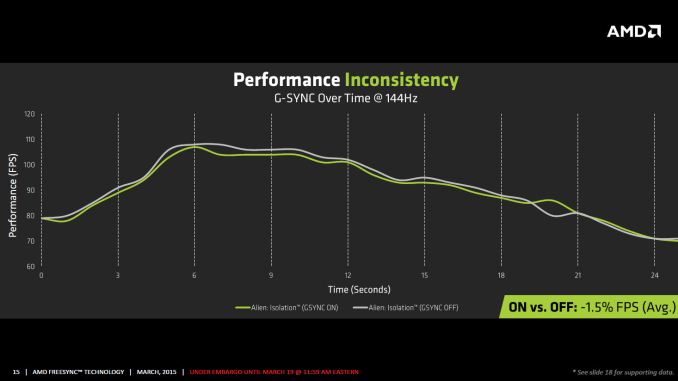

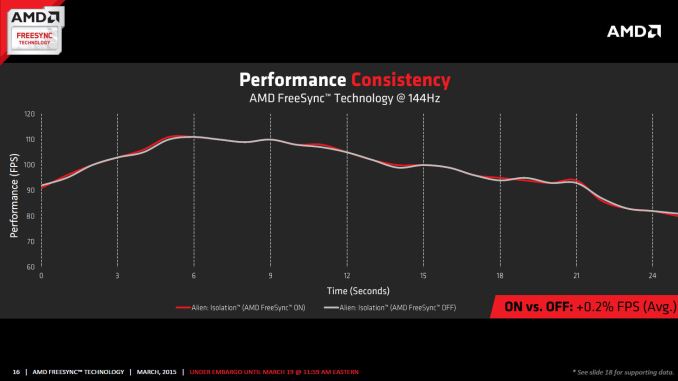

One item that piqued our interest during AMD’s presentation was a claim that there’s a performance hit with G-SYNC but none with FreeSync. NVIDIA has said as much in the past, though they also noted at the time that they were "working on eliminating the polling entirely", so things may have changed. Even so, the difference was generally quite small – less than 3%, or basically not something you would notice without capturing frame rates. AMD did some testing, however, and presented the following two slides:

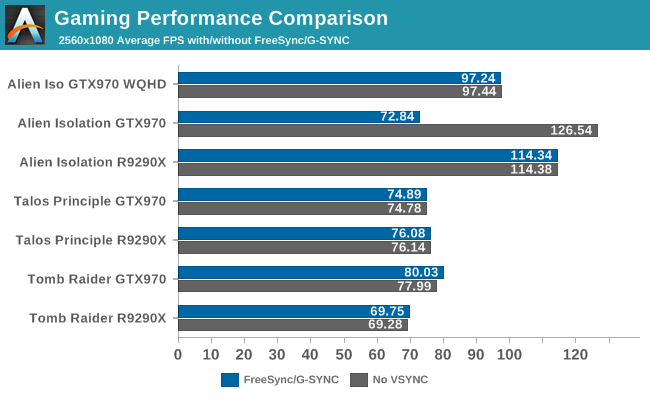

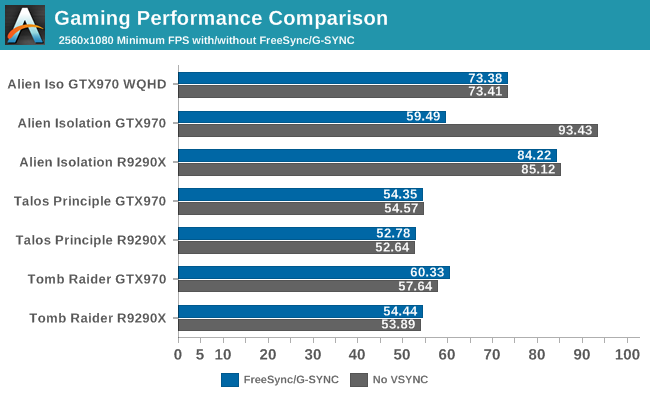

It’s probably safe to say that AMD is splitting hairs when they show a 1.5% performance drop in one specific scenario compared to a 0.2% performance gain, but we wanted to see if we could corroborate their findings. Having tested plenty of games, we already know that most games – even those with built-in benchmarks that tend to be very consistent – will have minor differences between benchmark runs. So we picked three games with deterministic benchmarks and ran with and without G-SYNC/FreeSync three times. The games we selected are Alien Isolation, The Talos Principle, and Tomb Raider. Here are the average and minimum frame rates from three runs:

Except for a glitch with testing Alien Isolation using a custom resolution, our results basically don’t show much of a difference between enabling/disabling G-SYNC/FreeSync – and that’s what we want to see. While AMD’s slides showed a performance drop for NVIDIA in Alien Isolation with G-SYNC, we weren’t able to reproduce that in our testing; in fact, we even showed a measurable 2.5% performance increase with G-SYNC and Tomb Raider. But again let’s be clear: 2.5% is not something you’ll notice in practice. FreeSync meanwhile shows results that are well within the margin of error.
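For readers who want to run the same kind of comparison on their own logs, here is a minimal sketch of the arithmetic: average the repeated runs, compute the percent change from enabling G-SYNC/FreeSync, and compare it against the run-to-run spread. The function name `summarize`, the use of the min-to-max spread as a stand-in for "margin of error", and the sample numbers are all assumptions made for illustration; they are not our measured results or our exact method.

```python
from statistics import mean

def summarize(runs_off, runs_on):
    """Compare average FPS with sync disabled vs. enabled across repeated runs."""
    avg_off = mean(runs_off)
    avg_on = mean(runs_on)
    # Percent change from enabling sync (negative means a performance drop).
    delta_pct = (avg_on - avg_off) / avg_off * 100.0
    # Run-to-run spread with sync off, as a percent of the average.
    # Assumption: min-to-max spread is used as a rough noise estimate.
    noise_pct = (max(runs_off) - min(runs_off)) / avg_off * 100.0
    return {
        "avg_off": avg_off,
        "avg_on": avg_on,
        "delta_pct": delta_pct,
        "noise_pct": noise_pct,
        "within_noise": abs(delta_pct) <= noise_pct,
    }

# Hypothetical placeholder numbers, NOT measurements from this review.
example = summarize(runs_off=[60.0, 61.0, 59.0], runs_on=[60.5, 60.0, 59.5])
print(example)
```

With only three runs per setting, a spread-based check like this is crude. It is enough to tell a sub-1% difference from a real regression, but not much more.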

What about that custom resolution problem on G-SYNC? We used the ASUS ROG Swift with the GTX 970, and we thought it might be useful to run the same resolution as the LG 34UM67 (2560x1080). Unfortunately, that didn’t work so well with Alien Isolation – the frame rates plummeted with G-SYNC enabled for some reason. Tomb Raider had a similar issue at first, but when we created additional custom resolutions with multiple refresh rates (60/85/100/120/144 Hz) the problem went away; we couldn't ever get Alien Isolation to run well with G-SYNC using our custom resolution, however. We’ve notified NVIDIA of the glitch, but note that when we tested Alien Isolation at the native WQHD setting the performance was virtually identical, so this only seems to affect performance with custom resolutions, and it is also game specific.

For those interested in a more detailed graph of the frame rates of the three runs (six total per game and setting, three with and three without G-SYNC/FreeSync), we’ve created a gallery of the frame rates over time. There’s so much overlap that mostly the top line is visible, but that just proves the point: there’s little difference other than the usual minor variations between benchmark runs. And in one of the games, Tomb Raider, even using the same settings shows a fair amount of variation between runs, though the average FPS is pretty consistent.

350 Comments

silverblue - Saturday, March 21, 2015 - link

I can certainly let you off most of those, but third party activities shouldn't count, so you can subtract 6 and 12. Additionally, 13 can be picked apart as the 295X2 showed that AMD can present a high quality cooler, and because I believe lumping the aesthetic qualities of a cooler in with heat and noise is a partial falsehood (admit it - you WILL have been thinking of metal versus plastic shrouds). I also don't agree with you on 11; at least, not if you move back past the 2XX generation as AMD had more aggressive bundles back then. 8 is subjective but NVIDIA usually gets the nod here.

Also, some of your earlier items are proprietary tech, to which I could always tease you about as it's not as if they couldn't license any of this out. ;)

I'll hand it to you and credit you with your dozen.

chizow - Saturday, March 21, 2015 - link

And I thank you for not doing the typical dismissive approach of "Oh I don't care about those features" that some on these forums might respond with.

I would still disagree on 6 and 12 though, ultimately they are still a part of Nvidia's ecosystem and end-user experience, and in many cases, Nvidia affords them the tools and support to enable and offer these value-add features. 3rd party tools for example, they specifically take advantage of Nvidia's NVAPI to access hardware features via driver and Nvidia's very transparent XML settings to manipulate AA/SLI profile data. Similarly, every feature EVGA offers to end users has to be worth their effort and backed by Nvidia to make business sense for them.

And 13, I would absolutely disagree on that one. I mean we see the culmination of Nvidia's cooling technology, the Titan NVTTM cooler, which is awesome. Having to resort to a triple slot water cooled solution for a high-end graphics card is terrible precedent imo and a huge barrier to entry for many, as you need additional case mounting and clearance which could be a problem if you already have a CPU CLC as many do. But that's just my opinion.

AMD did make a good effort with their Gaming Evolved bundles and certainly offered better than Nvidia for a brief period, but its pretty clear their marketing dollars dried up around the same time they cut that BF4 Mantle deal and their current financial situation hasn't allowed them to offer anything compelling since. But I stand by that bulletpoint, Nvidia typically offers the more relevant and attractive game bundle at any given time.

One last point in favor of Nvidia, is Optimus. I don't use it at home as I have no interest in "gaming" laptops, but it is a huge benefit there. We do have them on powerful laptops at work however, and the ability to "elevate" an application to the Nvidia dGPU on command is a huge benefit there as well.

anubis44 - Tuesday, March 24, 2015 - link

@chizow:

But hey kids, remember, after reading this 16 point PowerPoint presentation where he points out the superiority of nVidia using detailed arguments like "G-Sync" and "GRID" as strengths, chizow DOES NOT WORK FOR nVidia! He is not sitting in the marketing department in Santa Clara, California, with a group of other marketing mandarins running around, grabbing factoids for him to type in as responses to chat forums. No way!

Repeat after me, 'chizow does NOT work for nVidia.' He's just an ordinary, everyday psychopath who spends 18 hours a day at keyboard responding to every single criticism of nVidia, no matter how trivial. But he does NOT work for nVidia! Perish the thought! He just does it out of his undying love for the green goblin.

chizow - Tuesday, March 24, 2015 - link

But hey remember AMD fantards, there's no reason that the overwhelming majority of the market prefers Nvidia, those 16 things I listed don't actually mean anything if you prefer subpar product and don't demand better, and you continually choose to ignore the obvious one product supports more features and the other doesn't. But hey, just keep accepting subpar products and listen to AMD fanboys like anubis44, don't give in to the reality the rest of us all accept as fact.

sr1030nx - Saturday, March 21, 2015 - link

Only if they were NVIDIA branded speaker cables 😉

Darkito - Friday, March 20, 2015 - link

False

Darkito - Friday, March 20, 2015 - link

False, it's indistinguishable "Within the supported refresh rate range" as per this review. What happens outside the VRR window however, and especially under it, is incredibly different. With G-sync, if you get 20 fps it'll actually duplicate frames and tune the monitor to 40Hz, which means smooth gaming at sub-30Hz refresh rates (well, as smooth as 20fps can be). With FreeSync, it'll just fall back to v-sync on or off, with all the stuttering or tearing that involves. That means that if your game ever falls below the VRR window on FreeSync, image quality falls apart dramatically. And according to PCPer, this isn't just something AMD can fix with a driver update because it requires the frame buffer and logic on the G-Sync module!

http://www.pcper.com/reviews/Displays/AMD-FreeSync...

Take note that the LG panel tested actually has a VRR window lower bound of 48Hz, so image quality starts falling apart if you dip below 48fps, which is clearly unacceptable.

AdamW0611 - Sunday, March 22, 2015 - link

Yah just like R.I.P Direct X, Mantle will rule the day, now AMD is telling developers to ignore Mantle, Gsync is great, and for those of us who prefer drivers being updated the same day games are released will stick with Nvidia, while months later AMD users will be crying that games still don't work right for them.

anubis44 - Tuesday, March 24, 2015 - link

Company of Heroes 2 worked like shit for nVidia users for months after release, while my Radeon 7950 was pulling more FPS than a Titan card. To this day, Radeons pull better, and smoother FPS than equivalently priced nVidia cards in this, my favourite game. The GTX970 is still behind the R9 290 today. Is that the 'same day' nVidia driver support you're referring to?

chizow - Tuesday, March 24, 2015 - link

More BS from one of the biggest AMD fanboys on the planet, an AMD *CPU* fanboy nonetheless. CoH2 ran faster on Nvidia hardware from Day1, and also runs much faster on Intel CPUs, so yeah, as usual, you're running the slower hardware in your favorite game simply bc you're a huge AMD fanboy.

http://www.techspot.com/review/689-company-of-hero...

http://www.anandtech.com/show/8526/nvidia-geforce-...