OCZ Announces First SATA Host Managed SSD: Saber 1000 HMS

by Billy Tallis on October 15, 2015 8:00 AM EST

Posted in: SSDs, OCZ, Enterprise SSDs, Barefoot 3

Today OCZ is introducing the first SATA drive featuring a technology that may be the next big thing for enterprise SSDs. Referred to by OCZ as "Host Managed SSD" technology (HMS) and known elsewhere in the industry by the buzzword "Storage Intelligence", the general idea is to let the host computer know more about what's going on inside the SSD and to have more influence over how the SSD controller goes about its business.

Standardization efforts have been underway for more than a year in the committees for SAS, SATA, and NVMe, but OCZ's implementation is a pre-standard design that may not be compatible with what is eventually ratified. To provide some degree of forward compatibility, OCZ is releasing an open-source abstraction library that they hope will offer a hardware-agnostic interface usable with future HMS devices.

OCZ's HMS implementation is a vendor-specific extension of the ATA command set. A mode switch is required to access HMS features; when HMS mode is off, the drive behaves like a normal non-HMS SATA drive and all background processing, such as garbage collection, is managed autonomously by the SSD. When the HMS features are enabled, the host computer can request that the drive override its normal operating procedure and either disable all background processes or perform them as a high-priority task. If background processing is left disabled for too long, the drive will re-enable it when needed and suffer the immediate performance penalty of emergency garbage collection.
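The behavior described above can be pictured as a small state machine. The following is a toy sketch only: the class, the policy names, and the `emergency_floor` threshold are all hypothetical and do not represent OCZ's firmware, command set, or library.

```python
from dataclasses import dataclass
from enum import Enum


class GcPolicy(Enum):
    AUTONOMOUS = "autonomous"  # HMS mode off: the drive schedules its own housekeeping
    DEFERRED = "deferred"      # host asked the drive to hold off background work
    PRIORITY = "priority"      # host asked the drive to do housekeeping right now


@dataclass
class ToyDrive:
    """Hypothetical model of a host-managed drive; all numbers are made up."""
    total_blocks: int = 100
    free_blocks: int = 100
    emergency_floor: int = 5   # assumed threshold that forces garbage collection
    policy: GcPolicy = GcPolicy.AUTONOMOUS
    forced_gc_events: int = 0

    def write(self, blocks: int) -> None:
        """Consume free blocks; fall back to emergency GC if deferral ran too long."""
        self.free_blocks = max(0, self.free_blocks - blocks)
        if self.policy is GcPolicy.DEFERRED and self.free_blocks <= self.emergency_floor:
            # The drive overrides the host's request and reclaims space immediately.
            self.forced_gc_events += 1
            self.policy = GcPolicy.AUTONOMOUS
            self.collect(self.total_blocks)

    def collect(self, blocks: int) -> None:
        """Reclaim up to `blocks` free blocks (the toy model reclaims instantly)."""
        self.free_blocks = min(self.total_blocks, self.free_blocks + blocks)


drive = ToyDrive()
drive.policy = GcPolicy.DEFERRED
for _ in range(10):
    drive.write(10)
print(drive.policy, drive.forced_gc_events)
```

In this sketch the host never re-enables housekeeping, so the tenth write pushes the drive below its floor and triggers the emergency fallback, which is the situation a well-behaved host scheduler would try to avoid.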

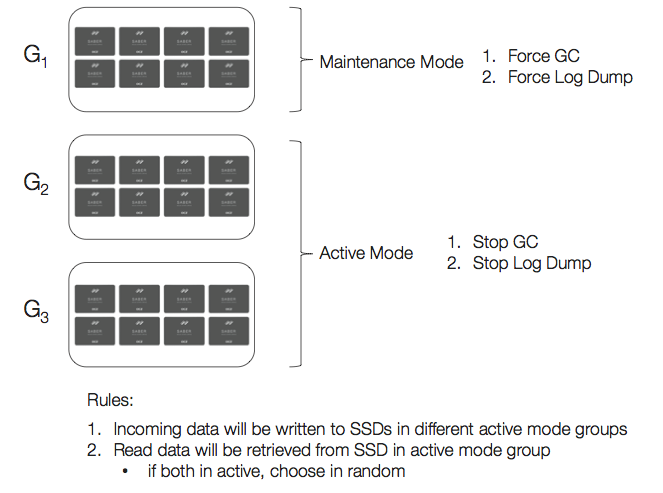

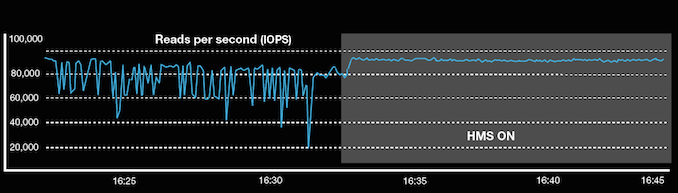

The intention is to allow better aggregate performance from an array of drives. An example OCZ gives is of an array divided into three pools of drives. At any given time, two pools are actively receiving writes, while the drives in the third pool are focusing solely on the "background" housekeeping operations. The two pools that are in active use defer all the background processing and operate with peak performance and consistency. By cycling the drive pools through the two modes, the intention is that none of the active drives will ever reach the steady-state of having constant background processing to free up space for the incoming writes. This provides a big improvement to performance consistency, and can also provide a minor improvement to overall throughput of the array.
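The three-pool example amounts to a round-robin schedule. A minimal sketch, assuming equal-length time slices and hypothetical function names (the article does not say how OCZ's reference code organizes this):

```python
from typing import List


def housekeeping_pool(step: int, num_pools: int = 3) -> int:
    """Index of the pool that does background work during this time slice."""
    return step % num_pools


def active_pools(step: int, num_pools: int = 3) -> List[int]:
    """Indices of the pools that accept writes during this time slice."""
    resting = housekeeping_pool(step, num_pools)
    return [p for p in range(num_pools) if p != resting]


for step in range(4):
    print(step, "housekeeping:", housekeeping_pool(step), "active:", active_pools(step))
```

With three pools, each pool spends two of every three slices taking writes and one slice on housekeeping. The slice length would have to be short enough that an active drive does not run out of free blocks before its turn to rest.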

Obviously, the load balancing and coordination required by such a scheme is not part of any traditional RAID setup. OCZ expects early adopters of HMS technology to make use of it from application layer code. HMS does not require any new operating system drivers, and OCZ will be providing tools and reference code to facilitate using HMS. They plan to eventually expand this to a comprehensive SDK, but for now everybody is in the position of having to explore how to best make use of HMS for their specific use case. For some customers, that may mean load balancing several pools of SSDs attached to a single server, while others may find it easier to temporarily offline an entire server for housekeeping.

OCZ has also envisioned that future HMS products may expand the controls from managing background processing to also changing overprovisioning or power limits on the fly, but they have no specific timeline for those features.

The drive OCZ is introducing with HMS technology is a variant of their existing Saber 1000 enterprise SATA SSD. The Saber 1000 HMS differs only in the SSD controller firmware; otherwise it is still a low-cost drive using the Barefoot 3 controller and is intended primarily for read-oriented workloads. Pricing is the same with or without HMS capability, though the Saber 1000 HMS is only offered in the 480GB and 960GB capacities. The warranty in either case is limited to 5 years, but because the HMS controls can affect write amplification, the Saber HMS write endurance rating is based on the actual Program/Erase cycle count of the drive rather than the total amount of data written to the drive.

| OCZ Saber 1000 HMS | | |
| --- | --- | --- |
| Capacity | 480GB | 960GB |
| 4kB Random Read IOPS | 90k | 91k |
| 4kB Random Write IOPS | 22k | 16k |
| Random Read Latency | 135µs | 135µs |
| Random Write Latency | 55µs | 55µs |
| Sequential Read | 550 MB/s | 550 MB/s |
| Sequential Write | 475 MB/s | 445 MB/s |
| MSRP | $370 | $713 |

As a read-oriented drive with relatively little overprovisioning, the Saber 1000 has a lot to gain from HMS in terms of write performance and consistency, and it may allow the Saber 1000 HMS to compete in areas the Saber 1000 isn't fast enough for.

In addition to control over the garbage collection process, the Saber 1000 HMS provides a similar set of controls for managing when the controller saves metadata from its RAM to the flash. This is the information the controller uses to keep track of where each piece of data is physically stored and which blocks are free to accept new writes. Every write to the disk adds to the metadata log, so the changes need to be periodically evicted from RAM to flash. This is one of the key data structures that the drive's power loss protection needs to preserve, so the size of the in-RAM metadata log may also be limited by the drive's capacitor budget.

To enable software to make effective use of these controls, the Saber 1000 HMS provides an unprecedented view into the inner workings of the drive. Software can query the drive for the NAND page size, erase block size, number of blocks per bank, and number of banks in the drive. The total program and erase counts are reported separately, and information about free blocks is reported as the total across the drive as well as the average, maximum, and minimum per bank. The drive also provides a status summary of whether garbage collection or metadata log dumping are active, and whether they are needed. OCZ's reference guide provides recommendations for interpreting all of these indicators.
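As a rough illustration of how host software might consume these values, the sketch below wraps a subset of them in a record and applies a simple rule for deciding when a drive should be rotated out for housekeeping. The field names, the thresholds, and the sample numbers are all assumptions made for illustration; they are not taken from OCZ's reference guide and are not Saber 1000 HMS specifications.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriveReport:
    """Fields mirror values the article lists; the names are hypothetical."""
    page_size: int                 # bytes per NAND page
    erase_block_size: int          # bytes per erase block
    blocks_per_bank: int
    banks: int
    free_blocks_total: int
    free_blocks_min_per_bank: int
    gc_needed: bool                # drive's own "garbage collection needed" flag

    @property
    def total_blocks(self) -> int:
        return self.blocks_per_bank * self.banks

    @property
    def pages_per_block(self) -> int:
        return self.erase_block_size // self.page_size

    @property
    def free_fraction(self) -> float:
        return self.free_blocks_total / self.total_blocks


def should_rest(report: DriveReport,
                min_free_fraction: float = 0.10,
                min_free_per_bank: int = 8) -> bool:
    """Assumed policy: rest the drive if it asks for GC or any bank runs low."""
    return (report.gc_needed
            or report.free_fraction < min_free_fraction
            or report.free_blocks_min_per_bank < min_free_per_bank)


# Illustrative numbers only.
report = DriveReport(page_size=16384, erase_block_size=4 * 1024 * 1024,
                     blocks_per_bank=1024, banks=16,
                     free_blocks_total=2048, free_blocks_min_per_bank=4,
                     gc_needed=False)
print(report.free_fraction, should_rest(report))
```

Checking the per-bank minimum as well as the drive-wide total reflects why the drive reports both: a drive can have plenty of free blocks overall while one bank is nearly exhausted.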

The Saber 1000 HMS will be available in early November for bulk purchases. The technical documentation and reference code should be available online today.

Source: OCZ

17 Comments


mgl888 - Thursday, October 15, 2015 - link

There are certainly merits to hiding the underlying structure of an SSD. I don't think programmers want to deal with errors/reliability details. The tricky part is to find the right amount of details to expose to gain that extra performance.

MrSpadge - Thursday, October 15, 2015 - link

Yep, hiding complexity from "everyone" and letting the professionals (SSD manufacturer) deal with it is good. As long as it doesn't hurt that the SSD doesn't know anything about the outside world (i.e. what's the future workload going to be that the OS / user are planning?).

ZeDestructor - Friday, October 16, 2015 - link

For the average webdev schmuck, sure. For the OS-level guys writing disk schedulers and filesystems, raw access would be real nice.

Take CFS for example: it's been tuned over a very long time to suit HDDs' large seek times, and in its internal queue, it reorganizes file order access so that the disk can access the blocks using as few sweeps as possible. On an SSD, this sort of stuff would potentially let the OS balance block writes and page erases much, much more effectively than a lower-level controller by virtue of knowing the amount of pending data that needs to be written, their block sizes and how many blocks may need to be freed. In fact, one of the really fun avenues would be page erasing multiple NAND channels while writing to the other NAND channels.

sor - Friday, October 16, 2015 - link

But you're ignoring that the controller providing an LBA abstraction and standard interface is actually a good thing. Otherwise you're basically saying that every SSD needs its own driver. You either have the logic in the SSD firmware or in a driver.

It sounds like what you want is basically NAND DIMMs or similar. It's an interesting concept, but there are various reasons why this wasn't done. The main reason is probably compatibility; can you imagine if SSD vendors came up with a standard and just dumped flash DIMMs into the market, asking CPU manufacturers to develop interfaces and OS vendors to each write their own flash drivers?

The general purpose CPUs we have these days really aren't great for making real-time, low latency decisions like this. You probably don't want uninterruptible SSD controller threads blocking your CPU while you're doing work. Moving toward this model where the SSD controller is basically a coprocessor that can have the real-time work offloaded to it and the CPU has enhanced controls over the coprocessor is probably the best of both worlds anyway.

Gigaplex - Thursday, October 15, 2015 - link

Call me too when that happens. So I know when it's time to hide in a bunker until the fallout fades away.

abufrejoval - Saturday, October 24, 2015 - link

Then you get a FusionIO drive: Never tried looking at the driver source code and I guess documentation isn't really publicly available, but that's how they do it.

Admittedly very much a Steve Wozniak approach, like the floppy disk code in the Apple ][, even if he only joined the company much later.

abufrejoval - Saturday, October 24, 2015 - link

To me this is very much aimed at web-scale type operations, Open Compute users and suchlike.

But your mention of RAID got me thinking that even enterprise customers may like the looks of these, once they are partially hidden behind a RAID controller managing the individual SSDs. So if you think of an SSD appliance built out of SSD form factor flash with custom controller code, this might hit a nice niche.

But you'd still have to use massive amounts of these to get your return on investing into the management code and then would you trust anyone without tons of verification?

Sure can't see this in the enterprise nor in the consumer space (except with the fallback mode).

Still, I like the direction this is taking...