A Look At Triple-GPU Performance And Multi-GPU Scaling, Part 1

by Ryan Smith on April 3, 2011 7:00 AM EST

It’s been quite a while since we’ve looked at triple-GPU CrossFire and SLI performance, or for that matter at GPU scaling in depth. While NVIDIA in particular likes to promote multi-GPU configurations as a price-practical upgrade path, such configurations are still almost always the domain of the high-end gamer. At $700 we have the recently launched GeForce GTX 590 and Radeon HD 6990, dual-GPU cards whose existence hinges on how well games will scale across multiple GPUs. Beyond that we move into the truly exotic: triple-GPU configurations using three single-GPU cards, and quad-GPU configurations using a pair of the aforementioned dual-GPU cards. If you have the money, NVIDIA and AMD will gladly sell you upwards of $1500 in video cards to maximize your gaming performance.

These days multi-GPU scaling is a given – at least to some extent. Below the price of a single high-end card our recommendation is always going to be to get a bigger card before you get more cards, as multi-GPU scaling is rarely perfect and with equally cutting-edge games there’s often a lag between a game’s release and when a driver profile is released to enable multi-GPU scaling. Once we’re looking at the Radeon HD 6900 series or GF110-based GeForce GTX 500 series though, going faster is no longer an option, and thus we have to look at going wider.

Today we’re going to be looking at the state of GPU scaling for dual-GPU and triple-GPU configurations. While we accept that multi-GPU scaling will rarely (if ever) hit 100%, just how much performance are you getting out of that 2nd or 3rd GPU versus how much money you’ve put into it? That’s the question we’re going to try to answer today.
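As a rough sketch of the arithmetic behind that question, scaling can be expressed as a speedup over a single GPU, an efficiency (speedup divided by GPU count), and the marginal gain each added GPU contributes. The function name `scaling_report` and the frame rates below are hypothetical placeholders chosen for illustration, not measurements from this article.

```python
def scaling_report(fps_by_gpu_count):
    """Summarize multi-GPU scaling relative to a single GPU.

    fps_by_gpu_count maps a GPU count to an average frame rate and
    must contain an entry for 1 GPU, which serves as the baseline.
    """
    base = fps_by_gpu_count[1]
    report = {}
    prev_fps = None
    for n in sorted(fps_by_gpu_count):
        fps = fps_by_gpu_count[n]
        speedup = fps / base          # 2.0 would be a perfect doubling
        efficiency = speedup / n      # 1.0 means 100% scaling
        # Extra performance over the previous configuration,
        # expressed as a fraction of one GPU's performance.
        marginal = None if prev_fps is None else (fps - prev_fps) / base
        report[n] = {
            "speedup": speedup,
            "efficiency": efficiency,
            "marginal_gain": marginal,
        }
        prev_fps = fps
    return report


# Hypothetical frame rates, for illustration only.
example_fps = {1: 50.0, 2: 95.0, 3: 120.0}
for gpu_count, row in scaling_report(example_fps).items():
    print(gpu_count, row)
```

Comparing `marginal_gain` against the price of the added card is one simple way to frame the performance-per-dollar question for the second and third GPU.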

From the perspective of a GPU review, we find ourselves in an interesting situation in the high-end market right now. AMD and NVIDIA just finished their major pushes for this high-end generation, but the CPU market is not in sync. In January Intel launched their next-generation Sandy Bridge architecture, but unlike the past launches of Nehalem and Conroe, the high-end market has been initially passed over. For $330 we can get a Core i7 2600K and crank it up to 4GHz or more, but what we get to pair it with is lacking.

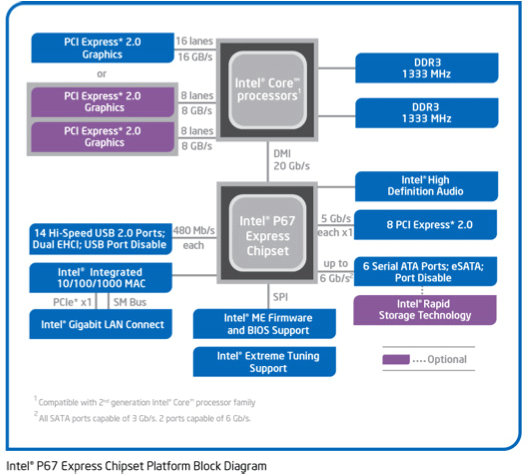

Sandy Bridge only supports a single PCIe x16 link coming from the CPU – an awesome CPU is being held back by a limited amount of off-chip connectivity: DMI and a single PCIe x16 link. For two GPUs we can split that out to x8 and x8, which shouldn’t be too bad. But what about three GPUs? With PCIe bridges we can mitigate the issue somewhat by allowing the GPUs to talk to each other at x16 speeds and dynamically allocating CPU-to-GPU bandwidth based on need, but at the end of the day we’re splitting a single x16 link across three GPUs.

The alternative is to take a step back and work with Nehalem and the X58 chipset. Here we have 32 PCIe lanes to work with, doubling the amount of CPU-to-GPU bandwidth, but the tradeoff is the CPU. Gulftown and Nehalem are capable chips in their own right, but per-clock the Nehalem architecture is normally slower than Sandy Bridge, and neither chip can clock quite as high on average. Gulftown does offer more cores – 6 versus 4 – but very few games are held back by the number of cores. Instead the ideal configuration is to maximize performance of a few cores.
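To put rough numbers on the two platforms, here is a minimal sketch of the lane arithmetic. It assumes both platforms run their graphics lanes at PCIe 2.0 rates (about 0.5 GB/s per lane, per direction) and that lanes are shared evenly between GPUs, which is an idealized average rather than how slots are actually wired. The names `average_share` and `PLATFORM_LANES` are hypothetical.

```python
# Assumption: PCIe 2.0 signaling, 5 GT/s with 8b/10b encoding,
# which works out to roughly 0.5 GB/s per lane in each direction.
PCIE2_GB_PER_LANE = 0.5


def average_share(total_lanes, n_gpus):
    """Return (lanes, GB/s per direction) per GPU under an even split."""
    lanes_per_gpu = total_lanes / n_gpus
    return lanes_per_gpu, lanes_per_gpu * PCIE2_GB_PER_LANE


# Lane counts as described in the text above.
PLATFORM_LANES = {"Sandy Bridge (single x16 link)": 16, "X58": 32}

for platform, lanes in PLATFORM_LANES.items():
    for n_gpus in (1, 2, 3):
        share, gb_per_s = average_share(lanes, n_gpus)
        print(f"{platform}: {n_gpus} GPU(s) -> "
              f"{share:.2f} lanes, {gb_per_s:.2f} GB/s each")
```

Real slots negotiate fixed link widths such as x8 or x16, and a PCIe bridge can shift bandwidth between GPUs on demand, so the fractional figures for three GPUs are only an average of what each card can expect.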

Later this year Sandy Bridge E will correct this by offering a Sandy Bridge platform with more memory channels, more PCIe lanes, and more cores; the best of both worlds. Until then it comes down to choosing from one of two platforms: a faster CPU or more PCIe bandwidth. For dual-GPU configurations this should be an easy choice, but for triple-GPU configurations it’s not quite as clear cut. For now we’re going to be looking at the latter by testing on our trusty Nehalem + X58 testbed, which largely eliminates a bandwidth bottleneck in a tradeoff for a CPU bottleneck.

Moving on, today we’ll be looking at multi-GPU performance under dual-GPU and triple-GPU configurations; quad-GPU will have to wait. Normally we only have two reference-style cards of any product on hand, so we’d like to thank Zotac and PowerColor for providing a reference-style GTX 580 and Radeon HD 6970 respectively.

97 Comments


taltamir - Sunday, April 3, 2011

wouldn't it make more sense to use a Radeon 6970 + 6990 together to get triple GPU?

nVidia triple GPU seems to lower min FPS, that is just fail.

Finally: Where are the eyefinity tests? none of the results were relevant since all are over 60fps with dual SLI.

Triple monitor+ would be actually interesting to see

semo - Sunday, April 3, 2011

Ryan mentions in the conclusion that a triple monitor setup article is coming.

ATI seems to be the clear winner here but the conclusion seems to downplay this fact. Also, the X58 platform isn't the only one that has more than 16 PCIe lanes...

gentlearc - Sunday, April 3, 2011

If you're considering going triple-gpu, I don't see how scaling matters other than an FYI. There isn't a performance comparison, just more performance. You're not going to realistically sell both your 580s and go and get three 6970s. I'd really like if you look at lower end cards capable of triple-gpu and their merit. The 5770 crossfired was a great way of extending the life of one 5770. Two 260s was another sound choice by enthusiasts looking for a low price tag upgrade.

So, the question I would like answered is if triple gpu is a viable option for extending the life of your currently compatible mobo. Can going triple gpus extend the life of your i7 920 as a competent gaming machine until a complete upgrade makes more sense?

SNB-E will be the cpu upgrade path, but will be available around the time the next generation of gpus are out. Is picking up a 2nd and/or 3rd gpu going to be a worthy upgrade or is the loss of selling three gpus to buy the next gen cards too much?

medi01 - Sunday, April 3, 2011

Besides, 350$ GPU is compared to 500$ GPU. Or so it was last time I've checked on froogle (and that was today, 3d of April 2011)

A5 - Sunday, April 3, 2011

AT's editorial stance has always been that SLI/XFire is not an upgrade path, just an extra option at the high end, and doubly so for Tri-fire and 3x SLI.

I'd think buying a 3rd 5770 would not be a particularly wise purchase unless you absolutely didn't have the budget to get 1 or 2 higher-end cards.

Mr Alpha - Sunday, April 3, 2011

I use RadeonPro to set up per application crossfire settings. While it is a bummer it doesn't ship with AMD's drivers, per application settings is not an insurmountable obstacle for AMD users.

BrightCandle - Sunday, April 3, 2011

I found this program recently and it has been a huge help. While Crysis 2 has flickering lights (don't get me started on this game's bugs!) using Radeon Pro I could fix the CF profile and play happily, without shouting at ATI to fix their CF profiles, again.

Pirks - Sunday, April 3, 2011

I noticed that you guys never employ useful distributed computing/GPU computing tests in your GPU reviews. You tend to employ some useless GPU computing benchmarks like some weird raytracers or something, I mean stuff people would not normally use. But you never employ really useful tests like say distributed.net's GPU computation clients, AKA dnetc. Those dnetc clients exist in AMD Stream and nVidia CUDA versions (check out http://www.distributed.net/Download_clients - see, they have CUDA 2.2, CUDA 3.1 and Stream versions too) and I thought you'd be using them in your benchmarks, but you don't, why?

Also check out their GPU speed database at http://n1cgi.distributed.net/speed/query.php?cputy...

So why don't you guys use this kind of benchmark in your future GPU computing speed tests instead of useless raytracer? OK if you think AT readers really bother with raytracers why don't you just add these dnetc GPU clients to your GPU computing benchmark suite?

What do you think Ryan? Or is it someone else doing GPU computing tests in your labs? Is it Jarred maybe?

I can help with setting up those tests but I don't know who to talk to among AT editors

Thanks for reading my rant :)

P.S. dnetc GPU client scales 100% _always_, like when you get three GPUs in your machine your keyrate in RC5-72 is _exactly_ 300% of your single GPU, I tested this setup myself once at work, so just FYI...

Arnulf - Sunday, April 3, 2011

"P.S. dnetc GPU client scales 100% _always_, like when you get three GPUs in your machine your keyrate in RC5-72 is _exactly_ 300% of your single GPU, I tested this setup myself once at work, so just FYI... "

So you are essentially arguing running dnetc tests make no sense since they scale perfectly proportionally with the number of GPUs?

Pirks - Sunday, April 3, 2011

No, I mean the general GPU reviews here, not this particular one about scaling