The NVIDIA GeForce GTX 780 Ti Review

by Ryan Smith on November 7, 2013 9:01 AM EST

When NVIDIA launched their first consumer GK110 card back in February with the GeForce GTX Titan, one of the interesting (if frustrating) aspects of the launch was that we knew we wouldn’t be getting a “complete” GK110 card right away. GTX Titan was already chart-topping fast, easily clinching the crown for NVIDIA, but it was achieving those high marks with only 14 of GK110’s 15 SMXes active. The 15th SMX, though representing just 7% of GK110’s compute/geometry hardware, held the promise of a bit more performance out of GK110, a promise that would have to wait to be fulfilled another day. For a number of reasons, NVIDIA would keep a little more performance in reserve for future use.

Jump forward 8 months to the past few weeks, and things have changed significantly in the high-end video card market. With the launch of AMD’s new flagship video card, the Radeon R9 290X, AMD has unveiled the means to once again compete with NVIDIA at the high end. At the same time they have shown that they have the wherewithal to start a fantastic, bloody price war for control of the high-end market. Right out of the gate the 290X was fast enough to defeat GTX 780 and battle GTX Titan to a standstill, at a price hundreds of dollars below NVIDIA’s flagship card. The outcome has been price drops all around, with GTX 780 shedding $150, GTX Titan all but relegated to the professional side of “prosumer,” and an unexpectedly powerful Radeon R9 290 practically starting the same process all over again just 2 weeks later.

With that in mind, NVIDIA has long been accustomed to controlling the high-end market and the single-GPU performance crown. AMD and NVIDIA may go back and forth at times, but at the end of the day it’s usually NVIDIA who comes out on top. So with AMD knocking at their door and eyeing what has been their crown, the time has come for NVIDIA to tap their reserve tank and once again cement their hold. The time has come for GTX 780 Ti.

| | GTX 780 Ti | GTX Titan | GTX 780 | GTX 770 |
|---|---|---|---|---|
| Stream Processors | 2880 | 2688 | 2304 | 1536 |
| Texture Units | 240 | 224 | 192 | 128 |
| ROPs | 48 | 48 | 48 | 32 |
| Core Clock | 875MHz | 837MHz | 863MHz | 1046MHz |
| Boost Clock | 928MHz | 876MHz | 900MHz | 1085MHz |
| Memory Clock | 7GHz GDDR5 | 6GHz GDDR5 | 6GHz GDDR5 | 7GHz GDDR5 |
| Memory Bus Width | 384-bit | 384-bit | 384-bit | 256-bit |
| VRAM | 3GB | 6GB | 3GB | 2GB |
| FP64 | 1/24 FP32 | 1/3 FP32 | 1/24 FP32 | 1/24 FP32 |
| TDP | 250W | 250W | 250W | 230W |
| Transistor Count | 7.1B | 7.1B | 7.1B | 3.5B |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 28nm |
| Launch Date | 11/07/13 | 02/21/13 | 05/23/13 | 05/30/13 |
| Launch Price | $699 | $999 | $649 | $399 |

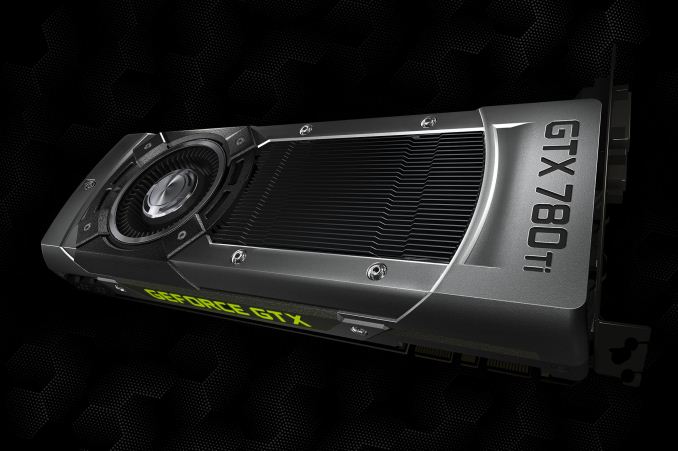

Getting right down to business, GeForce GTX 780 Ti is unabashedly a response to AMD’s Radeon R9 290X while also serving as NVIDIA’s capstone product for the GeForce 700 series. With NVIDIA finally ready and willing to release fully enabled GK110-based cards (a process that started with Quadro K6000), GTX 780 Ti is the inevitable GeForce part to bring that fully enabled GK110 GPU to the consumer market. By tapping the 15th and final SMX for a bit more performance and coupling it with a very slight clockspeed bump, NVIDIA has the means to fend off AMD’s recent advance while refreshing their product line just in time for a busy holiday season and as a counter to the impending next-generation console launch.

Looking at the specifications for GTX 780 Ti in detail, at the hardware level GTX 780 Ti is the fully enabled GK110 GeForce part we’ve long been waiting for. With all 15 SMXes up and running, GTX 780 Ti has 25% more compute/geometry/texturing hardware than the GTX 780 it essentially replaces, and around 7% more hardware than the increasingly orphaned GTX Titan. The only area where GTX 780 Ti doesn’t improve on GTX Titan/780 is the ROPs, as both of those cards already had all 48 ROPs active, along with the associated memory controllers and L2 cache.

Alongside the fully enabled GK110 GPU, NVIDIA has given GTX 780 Ti a minor GPU clockspeed bump, making it not only the fastest GK110 card overall but also the highest-clocked GK110 card. The 875MHz core clock and 928MHz boost clock are only 12MHz and 28MHz faster than GTX 780’s respective clocks, but with GTX 780 already clocked higher than GTX Titan, GTX 780 Ti doesn’t need much more in the way of GPU clockspeed to stay ahead of the competition and its older siblings. As a result, when comparing GTX 780 Ti to GTX 780, GTX 780 Ti relies largely on its SMX advantage to improve performance, combining a 1% clockspeed bump with a 25% increase in shader hardware to offer 27% better shading/texturing/geometry performance and just 1% better ROP throughput. Compared to Titan, GTX 780 Ti relies on its more significant 5% clockspeed advantage coupled with its 7% functional unit increase to offer a 12% increase in shading/texturing/geometry performance, alongside a 5% increase in ROP throughput.
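The percentages above come from multiplying each card’s functional-unit count by its clockspeed and taking the ratio. The Python sketch below reproduces them under stated assumptions: it uses the base (core) clocks from the spec table, counts SMXes for shading/texturing/geometry (each GK110 SMX carries the same shader and texture resources, so the SMX ratio equals the stream processor and texture unit ratios), and assumes theoretical throughput scales linearly with units × clock, ignoring boost behavior. The `CARDS` dictionary and `relative_throughput` helper are hypothetical names used only for illustration.

```python
# Spec-table figures: SMX count, ROP count, and base (core) clock in MHz.
# Assumption: theoretical throughput scales linearly with units x core clock.
CARDS = {
    "GTX 780 Ti": {"smx": 15, "rops": 48, "core_mhz": 875},
    "GTX Titan": {"smx": 14, "rops": 48, "core_mhz": 837},
    "GTX 780": {"smx": 12, "rops": 48, "core_mhz": 863},
}


def relative_throughput(card: str, baseline: str, unit: str) -> float:
    """Ratio of (unit count x core clock) for `card` versus `baseline`."""
    a, b = CARDS[card], CARDS[baseline]
    return (a[unit] * a["core_mhz"]) / (b[unit] * b["core_mhz"])


for baseline in ("GTX 780", "GTX Titan"):
    # SMX ratio covers shading, texturing, and geometry; ROP ratio covers pixel output.
    shader = relative_throughput("GTX 780 Ti", baseline, "smx")
    rop = relative_throughput("GTX 780 Ti", baseline, "rops")
    print(f"vs {baseline}: shading/texturing/geometry +{shader - 1:.0%}, ROP +{rop - 1:.0%}")
```

Running it prints +27%/+1% against GTX 780 and +12%/+5% against GTX Titan, matching the figures above; real-world gains will depend on which of these resources a given game is bound by.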

With specs and numbers in mind, there is one other trick up GTX 780 Ti’s sleeve to help push it past everything else: a higher 7GHz memory clock. NVIDIA has given GK110 the 7GHz GDDR5 treatment with GTX 780 Ti (making it the second card after GTX 770 to get this treatment), giving GTX 780 Ti 336GB/sec of memory bandwidth. This is 17% more than either GTX Titan or GTX 780, and it even edges out the recently released Radeon R9 290X’s 320GB/sec. The additional memory bandwidth, though probably not absolutely necessary based on what we’ve seen with GTX Titan, will help NVIDIA get as much out of GK110 as they can and further separate the card from other NVIDIA and AMD cards alike.
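Peak memory bandwidth is the effective per-pin data rate multiplied by the bus width, divided by 8 bits per byte. A minimal sketch in Python, assuming the spec table’s “7GHz”/“6GHz” GDDR5 figures are effective data rates in Gbps; the R9 290X’s 5Gbps data rate and 512-bit bus are not in the table above and are supplied here only for comparison. The `peak_bandwidth_gbs` function is a hypothetical helper for illustration.

```python
# Peak GDDR5 bandwidth = effective per-pin data rate (Gbps) x bus width (bits) / 8 bits per byte.
# Assumption: the spec table's "7GHz"/"6GHz" memory clocks are effective data rates in Gbps.
def peak_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    return data_rate_gbps * bus_width_bits / 8


gtx_780_ti = peak_bandwidth_gbs(7.0, 384)  # 336.0 GB/s
gtx_titan = peak_bandwidth_gbs(6.0, 384)   # 288.0 GB/s (GTX 780 is identical)
r9_290x = peak_bandwidth_gbs(5.0, 512)     # 320.0 GB/s (5Gbps on a 512-bit bus)

print(f"GTX 780 Ti: {gtx_780_ti:.0f} GB/s")
print(f"Advantage over GTX Titan/780: {gtx_780_ti / gtx_titan - 1:.0%}")
print(f"Advantage over R9 290X: {gtx_780_ti / r9_290x - 1:.0%}")
```

This reproduces the 336GB/sec, 17%, and 320GB/sec figures; it is a theoretical peak, and the bandwidth actually delivered in games will be lower.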

The only unfortunate news when it comes to memory is that, unlike Titan, NVIDIA is sticking with 3GB as the default RAM amount on GTX 780 Ti. Though the performance ramifications should be minimal (at least for now), it puts the card in the odd position of having less RAM than the cheaper 4GB Radeon R9 290 series.

Taken altogether then, GTX 780 Ti stands to be anywhere between 1% and 27% faster than GTX 780 depending on whether we’re looking at a ROP-bound or shader-bound scenario. Otherwise it stands to be between 5% and 17% faster than GTX Titan depending on whether we’re ROP-bound or memory bandwidth-bound.

Meanwhile, let’s quickly talk about power consumption. As GTX 780 Ti is essentially just a spec bump of the GK110 hardware we’ve seen for the last 8 months, its official power rating won’t be changing. NVIDIA designed GTX Titan and GTX 780 with the same power delivery system and the same TDP limit, and GTX 780 Ti implements that same system and those same limits. So officially GTX 780 Ti’s TDP stands at 250W, just like the other GK110 cards. In practice, though, GTX 780 Ti will draw more power than either of those cards, as the additional performance it delivers means it will, on average, run closer to that 250W limit than either of them.

Finally, let’s talk about pricing, availability, and competitive positioning. On a pure performance basis NVIDIA expects GTX 780 Ti to be the fastest single-GPU video card on the market, and our numbers back them up on this. Consequently NVIDIA is going to be pricing and positioning GTX 780 Ti a lot like GTX Titan/780 before it, which is to say that it’s going to be priced as a flagship card rather than a competitive card. Realistically AMD can’t significantly threaten GTX 780 Ti, and although it’s not going to be quite the lead that NVIDIA enjoyed over AMD earlier this year, it’s enough of a lead that NVIDIA can pretty much price GTX 780 Ti based solely on the fact that it’s the fastest thing out there. And that’s exactly what NVIDIA has done.

To that end GTX 780 Ti will be launching at $699, $300 less than GTX Titan but $50 more than the original GTX 780’s launch price. At current prices this puts it $150 over the R9 290X or $200 over the repriced GTX 780, a significant step over each. GTX 780 Ti will have the performance to justify its positioning, but just like the previous GK110 cards it’s going to be an expensive product. Meanwhile GTX Titan will remain at $999, despite the fact that it’s now officially dethroned as the fastest GeForce card (GTX 780 having already made it largely redundant). At this point it will live on as NVIDIA’s entry-level professional compute card, keeping its unique FP64 performance advantage over the other GeForce cards.

Elsewhere on a competitive basis, until factory-overclocked 290X cards hit the market, the only real single-card competition for GTX 780 Ti will be the Radeon HD 7990, AMD’s Tahiti-based dual-GPU card, which these days retails for close to $800. Otherwise the closest competition will be dual-card setups, such as GTX 770 SLI, R9 280X CF, and R9 290 CF. All of those should present formidable challenges on a pure performance basis, though they bring with them the usual drawbacks of multi-GPU rendering.

Meanwhile, as an added perk NVIDIA will be extending their recently announced “The Way It’s Meant to Be Played Holiday Bundle with SHIELD” promotion to the GTX 780 Ti, which consists of Assassin’s Creed IV, Batman: Arkham Origins, Splinter Cell: Blacklist, and a $100 SHIELD discount. NVIDIA has been inconsistent about this in the past, so it’s a nice change to see it included with their top card. As always, the value of a bundle is ultimately up to the buyer, but for those who do place value in it, the bundle should offset some of the sting of the $699 price tag.

As for launch availability, this will be a hard launch. Reference cards should be available by the time this article goes live, or shortly thereafter. It is a reference-only launch, and while custom cards are in the works, NVIDIA is telling us they likely won’t hit the shelves until December.

Fall 2013 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| | $700 | GeForce GTX 780 Ti |
| Radeon R9 290X | $550 | |
| | $500 | GeForce GTX 780 |
| Radeon R9 290 | $400 | |
| | $330 | GeForce GTX 770 |
| Radeon R9 280X | $300 | |
| | $250 | GeForce GTX 760 |
| Radeon R9 270X | $200 | |
| | $180 | GeForce GTX 660 |
| | $150 | GeForce GTX 650 Ti Boost |
| Radeon R7 260X | $140 | |

302 Comments


yuko - Monday, November 11, 2013

for me neither of them is a gamechanger ... gsync, shield ... nice stuff i don't need

mantle: another nice approach to create a semi-closed standard .. it's not like directX or opengl already exist and work quite well, no, we need another low level standard where amd creates the api (and to be honest, they would be quite stupid not to optimize it for their hardware).

I can't believe it, and I hope mantle will flop; it does no favors for customers or the industry. It's just good for marketing but has no real-world use.

Kamus - Thursday, November 7, 2013

Nope, it's confirmed for every frostbite 3 game coming out, that's at least a dozen so far, not to mention it's also officially coming to starcitizen, which runs on cryengine 3 I believe. But yes, even with those titles it's still a huge difference, obviously.

That said, you can expect that any engine optimized for GCN on consoles could wind up with mantle support, since the hard work is already done. And in the case of star citizen... Well, that's a PC exclusive, and it's still getting mantle.

StevoLincolnite - Thursday, November 7, 2013

Mantle is confirmed for all Frostbite powered games. That is, Battlefield 4, Dragon Age 3, Mirror's Edge 2, Need for Speed, Mass Effect, Star Wars Battlefront, Plants vs. Zombies: Garden Warfare and probably others that haven't been announced yet by EA.

Star Citizen and Thief will also support Mantle.

So that's EA, Cloud Imperium Games, Square Enix that will support the API and it hasn't even released yet.

ahlan - Thursday, November 7, 2013

And for Gsync you will need a new monitor with Gsync support. I won't buy a new monitor only for that.

jnad32 - Thursday, November 7, 2013

http://ir.amd.com/phoenix.zhtml?c=74093&p=irol...

BOOM!

Creig - Friday, November 8, 2013

Gsync will only work on Kepler and above video cards. So if you have an older card, not only do you have to buy an expensive gsync capable monitor, you also need a new Kepler based video card as well. Even if you already own a Kepler video card, you still have to purchase a new gsync monitor which will cost you $100 more than an identical non-gsync monitor.

Whereas Mantle is a free performance boost for all GCN video cards.

Summary:

Gsync cost - Purchase new computer monitor +$100 for gsync module.

Mantle cost - Free performance increase for all GCN equipped video cards.

Pretty easy to see which one offers the better value.

neils58 - Sunday, November 10, 2013

As you say Mantle is very exciting, but we don't know how much performance we are talking about yet. My thinking on saying that crossfire was AMD's only answer is that in order to avoid the stuttering effect of dropping below the Vsync rate, you have to ensure that the minimum framerate is much higher, which means adding more cards or turning down quality settings. If Mantle turns out to be a huge performance increase things might work out, but we just don't know.

Sure, TN isn't ideal, but people with gaming priorities will already be looking for monitors with low input lag, fast refresh rates and features like backlight strobing for motion blur reduction, so G-Sync will basically become a standard feature on a brand's lineup of gaming oriented monitors. I think it'll come down in price a fair bit too once there are a few competing brands.

It's all made things tricky for me. I'm currently on a 1920x1200 VA monitor with a 5850 and was considering going up to a 1440p 27" screen (which would have required a new GPU purchase anyway), but G-Sync adds enough value to gaming TNs to push me over to them.

jcollett - Monday, November 11, 2013

I've got a large 27" IPS panel so I understand the concern. However, a good high refresh panel need not cost very much and still look great. Check out the ASUS VG248QE; been hearing good things about the panel and it is relatively cheap at about $270. I assume it would work with the G-Sync but I haven't confirmed that myself. I'll be looking for reviews of Battlefield 4 using Mantle this December as that could make up a big part of the decision on my next card coming from Team Green or Red.

misfit410 - Thursday, November 7, 2013

I don't buy that it's a game changer. I have no intention of replacing my three Dell Ultrasharp monitors anytime soon, and even if I did I have no intention of dealing with buggy displayport as my only option to hook up a synced monitor.

Mr Majestyk - Thursday, November 7, 2013

+1

I've got two high end Dell 27" monitors and it's a joke to think I'd swap them out for garbage TN monitors just to get G Sync.

I don't see the 780 Ti as being any skin off AMD's nose. It's much dearer for very small gains and we haven't seen the custom AMD boards yet. For now I'd probably get the R9 290, assuming custom boards can greatly improve on cooling and heat.