Micron M500DC (480GB & 800GB) Review

by Kristian Vättö on April 22, 2014 2:35 PM EST

While the client SSD space has become rather uninteresting lately, the same cannot be said of the enterprise segment. The problem in the client market is that most modern SSDs are already fast enough for the vast majority of users, and hence price has become the key, if not the only, factor when buying an SSD. There is a higher-end market for enthusiasts and professionals where features and performance are more important, but the mainstream segment is constantly taking a larger and larger share of the client market.

The enterprise market, on the other hand, is totally different. Unlike in the client world, there is no general "Facebook-email-Office" workload that can easily be traced and used as an optimization target for the drives. Enterprises, especially larger ones, are usually well aware of their IO workloads, but those workloads are nearly always unique in one way or another. Hence the enterprise SSD market is heavily segmented, as one drive doesn't usually fit all workloads: one workload may require a drive that consistently does 100K IOPS in 4KB random writes with endurance of dozens of petabytes, while another may be fine with a drive that provides enough 4KB random read performance to replace several hard drives. Case in point, this is what Micron's enterprise SSD lineup looks like:

Comparison of Micron's Enterprise SSDs

| | M500DC | P400m | P400e | P320h | P420m |
|---|---|---|---|---|---|
| Form Factor | 2.5" SATA | 2.5" SATA | 1.8"; 2.5" SATA, mSATA | 2.5" PCIe, HHHL PCIe | 2.5" PCIe, HHHL PCIe |
| Capacities (GB) | 120, 240, 480, 800 | 100, 200, 400 | 50, 100, 200, 400 | 175, 350, 700 | 350, 700, 1400 |
| Controller | Marvell 9187 | Marvell 9187 | Marvell 9174 | IDT 32-channel PCIe 2.0 x8 | IDT 32-channel PCIe 2.0 x8 |
| NAND | 20nm MLC | 25nm MLC | 25nm MLC | 34nm SLC | 25nm MLC |
| Sequential Read (MB/s) | 425 | 380 | 440 / 430 | 3200 | 3300 |
| Sequential Write (MB/s) | 200 / 330 / 375 | 200 / 310 | 100 / 160 / 240 | 1900 | 600 / 630 |
| 4KB Random Read (IOPS) | 63K / 65K | 52K / 54K / 60K | 59K / 44K / 47K | 785K | 750K |
| 4KB Random Write (IOPS) | 23K / 33K / 35K / 24K | 21K / 26K | 7.3K / 8.9K / 9.5K / 11.3K | 205K | 50K / 95K |
| Endurance (PB) | 0.5 / 1.0 / 1.9 | 1.75 / 3.5 / 7.0 | 0.0875 / 0.175 | 25 / 50 | 5 / 10 |

In order to fit the table on this page, I even had to leave out a few models, specifically the P300, P410m, and P322h. With today's release of the M500DC, Micron has a total of eight different active SSDs in its enterprise portfolio while its client portfolio only has two.

Micron's enterprise lineup has always been two-headed: there are entry to mid-level SATA/SAS products, which are followed by the high-end PCIe drives. The M500DC represents Micron's new entry-level SATA drive and, as the naming suggests, it's derived from the client M500. The M500 and M500DC share the same controller (Marvell 9187) and NAND (128Gbit 20nm MLC), but otherwise the M500DC has been designed from the ground up to fit enterprise requirements.

Micron M500DC Specifications (Full, Steady-State)

| Capacity | 120GB | 240GB | 480GB | 800GB |
|---|---|---|---|---|
| Controller | Marvell 88SS9187 | Marvell 88SS9187 | Marvell 88SS9187 | Marvell 88SS9187 |
| NAND | Micron 128Gbit 20nm MLC | Micron 128Gbit 20nm MLC | Micron 128Gbit 20nm MLC | Micron 128Gbit 20nm MLC |
| DRAM | 256MB | 512MB | 1GB | 1GB |
| Sequential Read | 425MB/s | 425MB/s | 425MB/s | 425MB/s |
| Sequential Write | 200MB/s | 330MB/s | 375MB/s | 375MB/s |
| 4KB Random Read | 63K IOPS | 63K IOPS | 63K IOPS | 65K IOPS |
| 4KB Random Write | 23K IOPS | 33K IOPS | 35K IOPS | 24K IOPS |
| Endurance (TBW) | 0.5PB | 1.0PB | 1.9PB | 1.9PB |

The M500DC is aimed at data centers that require affordable solid-state storage, such as content streaming, cloud storage, and big data analytics. These are typically hyperscale enterprises, and due to their exponentially growing storage needs, the storage has to be relatively cheap or the company may not have the capital to keep up with the growth. In addition, most of these data centers are read heavy (think of Netflix, for instance), and hence there is no need for high-end PCIe drives with endurance on the order of dozens of petabytes.

In terms of NAND, the M500DC features the same 128Gbit 20nm MLC NAND as its client counterpart. This isn't even a high-endurance or enterprise-specific part -- it's the same 3,000 P/E cycle part you find inside the normal M500. Micron did say that the parts going inside the M500DC are more carefully picked to meet the requirements, but at a high level we are dealing with consumer-grade MLC (or cMLC).

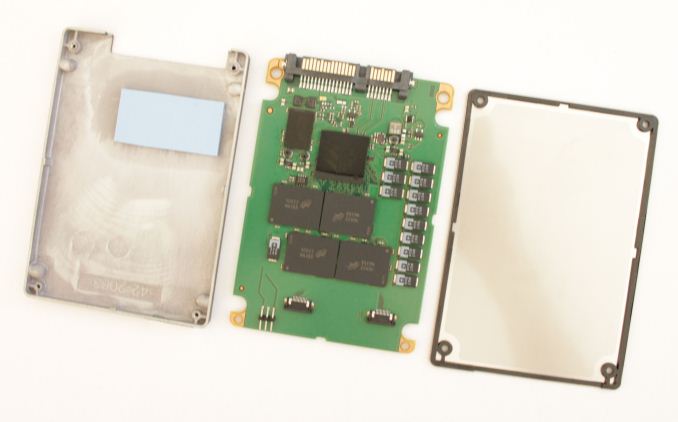

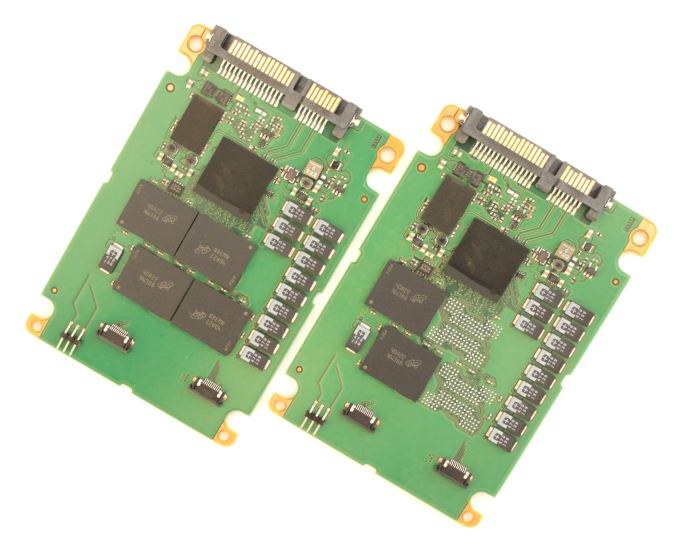

To get away with cMLC in the enterprise space, Micron sets aside an enormous portion of the NAND for over-provisioning. The 480GB model features a total of six NAND packages, each consisting of eight 128Gbit dies for a total NAND capacity of 768GiB. In other words, only 58% of the NAND ends up being user-accessible. Of course not all of that is over-provisioning as Micron's NAND redundancy technology, RAIN, dedicates a portion of the NAND for parity data, but the M500DC still has more over-provisioning than a standard enterprise drive. The only exception is the 800GB model which has 1024GiB of NAND onboard with 73% of that being accessible by the user.
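The capacity figures in this paragraph can be rechecked with a few lines of arithmetic. The following is only a sketch: the function names are illustrative, and it assumes that a 128Gbit die holds exactly 16GiB and that user capacity is quoted in decimal gigabytes.

```python
GIB = 2**30  # bytes in one binary gibibyte
GB = 10**9   # bytes in one decimal gigabyte


def raw_nand_gib(packages, dies_per_package, die_gbit=128):
    """Raw NAND in GiB, assuming die_gbit is a binary gigabit count (128Gbit = 16GiB)."""
    return packages * dies_per_package * die_gbit / 8


def user_fraction(user_gb, raw_gib):
    """Share of the raw NAND that is exposed to the user."""
    return (user_gb * GB / GIB) / raw_gib


raw_480 = raw_nand_gib(packages=6, dies_per_package=8)
print(f"480GB model: {raw_480:.0f}GiB raw, {user_fraction(480, raw_480):.0%} user-accessible")
print(f"800GB model: 1024GiB raw, {user_fraction(800, 1024):.0%} user-accessible")
```

Under these assumptions the 480GB model comes out at 768GiB of raw NAND, with the user-accessible share rounding to the 58% and 73% figures quoted above.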

| User Capacity | 120GB | 240GB | 480GB | 800GB |
|---|---|---|---|---|
| Total NAND Capacity | 192GiB | 384GiB | 768GiB | 1024GiB |
| RAIN Stripe Ratio | 11:1 | 11:1 | 11:1 | 15:1 |
| Effective Over-Provisioning | 33.5% | 33.5% | 33.5% | 21.0% |

A quick explanation for the numbers above. To calculate the effective over-provisioning, the space taken by RAIN must be taken into account first because RAIN operates at the page/block/die level (i.e. parity is not only generated for the user data but all data in the drive). A stripe ratio of 11:1 basically means that every twelfth bit is a parity bit and thus there are eleven data bits in every twelve bits. In other words, out of 192GiB of raw NAND only 176GiB is usable by the controller to store data. Out of that 120GB (~112GiB) is accessible by the user, which leaves 64GiB for over-provisioning. Divide that by the total NAND capacity (192GiB) and you should get the same 33.5% figure for effective over-provisioning as I did.
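The calculation described above can be sketched in a few lines of Python. The function and variable names are illustrative only, and the sketch assumes the simple model from the text: RAIN parity is taken off the raw NAND first, and user capacity is given in decimal gigabytes.

```python
GIB = 2**30  # bytes in one binary gibibyte
GB = 10**9   # bytes in one decimal gigabyte


def effective_op(user_gb, raw_gib, stripe_data, stripe_parity=1):
    """Effective over-provisioning as a fraction of the total raw NAND."""
    # Space left once RAIN parity is removed (11:1 means 11 of every 12 units hold data).
    usable_gib = raw_gib * stripe_data / (stripe_data + stripe_parity)
    # User capacity converted from decimal GB to binary GiB.
    user_gib = user_gb * GB / GIB
    # Whatever remains is spare area; express it relative to the raw NAND.
    return (usable_gib - user_gib) / raw_gib


# (user capacity in GB, raw NAND in GiB, data part of the RAIN stripe ratio)
drives = [(120, 192, 11), (240, 384, 11), (480, 768, 11), (800, 1024, 15)]
for user_gb, raw_gib, data in drives:
    print(f"{user_gb}GB: {effective_op(user_gb, raw_gib, data):.1%}")
```

With the values from the table, this gives about 33.5% for the three smaller capacities and about 21.0% for the 800GB model.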

| Test Setup | |
|---|---|
| CPU | Intel Core i7-4770K at 3.5GHz (Turbo & EIST enabled, C-states disabled) |
| Motherboard | ASUS Z87 Deluxe (BIOS 1707) |
| Chipset | Intel Z87 |
| Chipset Drivers | 9.4.0.1026 |
| Storage Drivers | Intel RST 12.9.0.1001 |
| Memory | Corsair Vengeance DDR3-1866 2x8GB (9-10-9-27 2T) |
| Graphics | Intel HD Graphics 4600 |
| Graphics Drivers | 15.33.8.64.3345 |
| Power Supply | Corsair RM750 |
| OS | Windows 7 Ultimate 64-bit |

Before we get into the actual tests, we would like to thank the following companies for helping us with our 2014 SSD testbed.

- Thanks to Intel for the Core i7-4770K CPU

- Thanks to ASUS for the Z87 Deluxe motherboard

- Thanks to Corsair for the Vengeance 16GB DDR3-1866 DRAM kit, RM750 power supply, Hydro H60 CPU cooler and Carbide 330R case

37 Comments


abufrejoval - Monday, April 28, 2014 - link

I've seen an opportunity here to clarify something that I've always wondered about: How exactly does this long-term retention work for FLASH?

In the old days, when you had an SSD, you weren't very likely having it lie around, after you paid an arm and a leg for it.

These days, however, storing your most valuable data on an SSD almost seems logical, because one of my nightmares is dropping that very last backup magnetic drive, just when I'm trying to insert it after a complete loss of my primary active copy: SSD just seems so much more reliable!

And then there comes this retention figure...

So what happens when I re-insert an SSD, that has been lying around say for 9 months with those most valuable baby pics of your grown up children?

Does just powering it up mean all those flash cells with logical 1's in them will magically draw in charge like some sort of electron sponge?

Or will the drive have to go through a complete read-check/overwrite cycle depending on how near blocks have come to the electron depletion limit?

How would it know the time delta? How would I know it's finished the refresh and it's safe to put it away for another 9 months?

I have some older FusionIO 320GB MLC drives in the cupboard, that haven't been powered up for more than a year: Can I expect them to look blank?

P.S. Yes, you need an edit button and a resizable box for text entry!

Kristian Vättö - Tuesday, April 29, 2014 - link

The way NAND flash works is that electrons are injected into what is called a floating gate, which is insulated from the other parts of the transistor. As it is insulated, the electrons can't escape the floating gate and thus SSDs are able to hold the data. However, as the SSD is written to, the insulating layer will wear out, which decreases its ability to insulate the floating gate (i.e. make sure the electrons don't escape). That causes the decrease in data retention time.

Figuring out the exact data retention time isn't really possible. At the maximum endurance, it should be 1 year for client drives and 3 months for enterprise drives, but anything before and after is subject to several variables that the end-user doesn't have access to.

Solid State Brain - Tuesday, April 29, 2014 - link

Data retention depends mainly on NAND wear. It's the highest (several years - I've read 10+ years even for TLC memory though) at 0 P/E cycles and decreases with usage. By JEDEC specifications, consumer SSDs are to be considered at "end of life" when the minimum retention time drops below 1 year, and that's what you should expect when reaching the P/E "limit" (which is not actually a hard limit, just a threshold based on those JEDEC-spec requirements). For enterprise drives it's 3 months. Storage temperature will also affect retention. If you store your drives in a cool place when unpowered, their retention time will be longer. By JEDEC specifications the 1 year time for consumer drives is at 30C, while the 3 months time for enterprise ones is at 40C. Tidbit: manufacturers bake NAND memory in low temperature ovens to simulate high wear usage scenarios during tests.

To be refreshed, data has to be reprogrammed again. Just powering up an SSD is not going to reset the retention time for the existing data, it's only going to make it temporarily slow down.

When powered, the SSD's internal controller keeps track of when writes occurred and reprograms old blocks as needed to make sure that data retention is maintained and consistent across all data. This is part of the wear leveling process, which usually is pretty efficient in keeping block usage consistent. However, I speculate this can happen only to a certain extent/rate. A worn drive left unpowered for a long time should preferably have its data dumped somewhere and then cloned back, to be sure that all NAND blocks have been refreshed and that their retention time has been reset to what their wear status allows.

hojnikb - Wednesday, April 23, 2014 - link

TLC is far from crap (well, quality TLC that is). And no, TLC does not have issues holding a "charge". JEDEC states a minimum of 1 year of data retention, so your statement is complete bullshit.

apudapus - Wednesday, April 23, 2014 - link

TLC does have issues but the issues can be mitigated. A drive made up of TLC NAND requires much stronger ECC compared to MLC and SLC.

Notmyusualid - Tuesday, April 22, 2014 - link

My SLC X25-E 64GB is still chugging along, with not so much as a hiccup.

It n e v e r slows down, it 'feels' fast constantly, no matter what is going on.

In about that time I've had one failed OCZ 128GB disk (early Indilinx I think), one failed Kingston V100, one failed Corsair 100GB too (model forgotten), a 160GB X25-M that arrived DOA (but its replacement is still going strong in a workstation), and late last year a failed Patriot Wildfire 240GB.

The two 840 Evo 250GB disks I have (TLC) are absolute garbage. So bad I had to remove them from the RAID0, and run them individually. When you want to over-write all the free space - you'd better have some time on your hands.

SLC for the win.

Solid State Brain - Wednesday, April 23, 2014 - link

The X25-E 64 GB actually has 80 GiB of NAND memory on its PCB. Since only 64 GB (-> 59.6 GiB) of this is available to the user, it means that about 25% of it is overprovisioning area. The drive is obviously going to excel in performance consistency (at least for its time).

On the other hand, the 840 EVO 250 GB has less OP than the previous 840 models with TLC memory, as you have to subtract 9 GiB for the TurboWrite feature from the 23.17 GiB of unavailable space (256 GiB of physically installed NAND - 250 GB -> 232.83 GiB of user space) that was previously fully used as overprovisioning area. This means that in TRIM-less or write-intensive environments with little or no free space, they're not going to be that great in performance consistency.

If you were going to use the Samsung 840 EVOs in a RAID-0 configuration, you really should have, at the very least, increased the OP area by setting up trimmed, unallocated space. So, it's not really that they are "absolute garbage" (as they obviously aren't) and it's really inherently due to the TLC memory. It's your fault in that you most likely didn't take the necessary steps to use them properly with your RAID configuration.

Solid State Brain - Wednesday, April 23, 2014 - link

I meant: *...and it's NOT really inherently due to the...

TheWrongChristian - Friday, April 25, 2014 - link

> When you want to over-write all the free space - you'd better have some time on your hands.

Why would you overwrite all the free space? Can't you TRIM the drives?

And why run them in RAID0? Can't you use them as JBOD, and combine volumes?

TLC versus SLC works out to about a factor of 4 cheaper on a die area basis alone. That's why drives are MLC and TLC based, with the extra storage being used to add extra spare area to make the drive more economical over the drive's useful life. Your SLC X25-E, on the other hand, will probably never ever reach its P/E limit before you discard it for a more useful, faster, bigger replacement drive. We'll probably have practical memristor based drives before the X25-E uses all its P/E cycles.

zodiacsoulmate - Tuesday, April 22, 2014 - link

It makes me think about my OCZ Vector 256GB, it breaks every time there is a power loss, even a hard reset...

There are quite a lot of people claiming this problem online, and the Vector 256GB became available only as refurbished units before any other Vector drive....

I RMAed two of them, and OCZ replaced mine with a Vector 150, which seems fine now.. maybe we should add a power loss test for SSDs...