Intel SSD DC P3700 Review: The PCIe SSD Transition Begins with NVMe

by Anand Lal Shimpi on June 3, 2014 2:00 AM EST - Posted in

- Storage

- SSDs

- Intel

- Intel SSD DC P3700

- NVMe

In 2008 Intel introduced its first SSD, the X25-M, and with it Intel ushered in a new era of primary storage based on non-volatile memory. Intel may have been there at the beginning, but it missed out on most of the evolution that followed. It wasn't until late 2012, four years later, that Intel showed up with another major controller innovation. The Intel SSD DC S3700 added a focus on IO consistency, which had a remarkable impact on both enterprise and consumer workloads. Once again Intel found itself at the forefront of innovation in the SSD space, only to let others catch up in the coming years. Now, roughly two years later, Intel is back again with another significant evolution of its solid state storage architecture.

Nearly all prior Intel drives, as well as those of its most qualified competitors, have played within the confines of the SATA interface. SATA was designed for, and limited by, the hard drives that came before it. SSDs used it to sneak in and take over the high performance market, but they did so out of necessity, not preference. The SATA interface and the hard drive form factors that went along with it were the sheep's clothing to the SSD's wolf. It became clear early on that a higher bandwidth interface was necessary to really give SSDs room to grow.

We saw a quick transition from 3Gbps to 6Gbps SATA for SSDs, but rather than move to 12Gbps SATA only to saturate it a year later, most SSD makers set their sights on PCIe. With PCIe 3.0 x16 already capable of delivering 128Gbps of bandwidth, it was clear that this was the appropriate IO interface for SSDs. Many SSD vendors saw the writing on the wall early, but their PCIe based SSD solutions typically leveraged a bunch of SATA SSD controllers behind a PCIe RAID controller. Only a select few PCIe SSD makers developed their own native controllers. Micron was among the first to really push a native PCIe solution with its P320h and P420m drives.
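To put the interface numbers side by side, here is a rough sketch of the usable bandwidth of each link after line encoding. The encoding schemes (8b/10b for SATA, 128b/130b for PCIe 3.0) and the 8 GT/s per-lane rate come from the respective specifications rather than from this article, and the helper name `link_gbps` is purely illustrative. Protocol overhead above the line encoding is ignored, so real-world throughput will be somewhat lower.

```python
def link_gbps(gigatransfers_per_s, lanes, payload_bits, encoded_bits):
    """Usable bandwidth in Gbps of a serial link after line encoding."""
    return gigatransfers_per_s * lanes * payload_bits / encoded_bits


# SATA 6Gbps: one lane at 6 GT/s with 8b/10b encoding.
sata3 = link_gbps(6.0, 1, 8, 10)

# PCIe 3.0: 8 GT/s per lane with 128b/130b encoding.
pcie3_x4 = link_gbps(8.0, 4, 128, 130)
pcie3_x16 = link_gbps(8.0, 16, 128, 130)

for name, gbps in [
    ("SATA 6Gbps", sata3),
    ("PCIe 3.0 x4", pcie3_x4),
    ("PCIe 3.0 x16", pcie3_x16),
]:
    # Divide by 8 to convert gigabits to gigabytes.
    print(f"{name}: {gbps:.1f} Gbps (~{gbps / 8:.2f} GB/s)")
```

The 128Gbps quoted for PCIe 3.0 x16 corresponds to the raw signaling rate (16 lanes at 8 GT/s); after encoding the usable figure is slightly lower. Even a four-lane PCIe 3.0 link offers several times the bandwidth of 6Gbps SATA.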

Bandwidth limitations were only one reason to want to ditch SATA. The other bit of legacy that needed shedding was AHCI, the interface protocol for communication between host machines and their SATA HBAs (Host Bus Adaptors). AHCI was designed for a world where low latency NAND based SSDs didn't exist. It ends up being a fine protocol for communicating with high latency mechanical disks, but one that consumes an inordinate amount of CPU cycles for high performance SSDs.

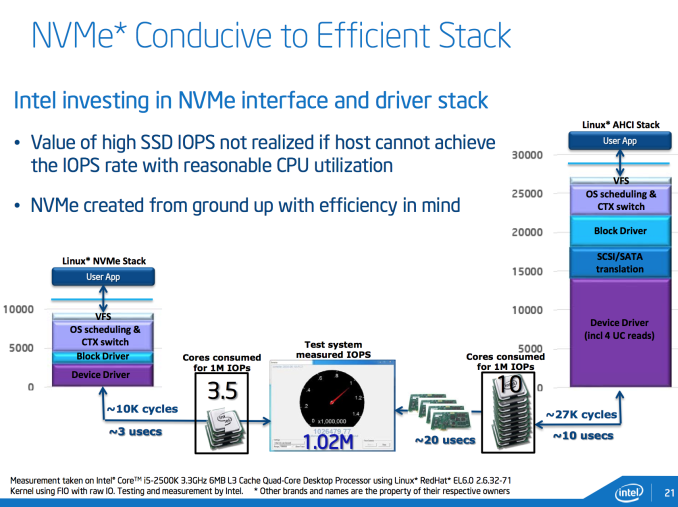

In the example above, the Linux AHCI stack alone requires around 27,000 cycles per IO. As a result, you need 10 Sandy Bridge CPU cores to drive 1 million IOPS. The solution is a new lightweight, low latency interface, one designed around SSDs and not hard drives. That interface is NVMe (Non-Volatile Memory Express, otherwise known as the NVM Host Controller Interface Specification, or NVMHCI). In the same example, total NVMe overhead is reduced to 10,000 cycles, or roughly 3.5 Sandy Bridge cores needed to drive 1 million IOPS.
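The relationship between per-IO cycle cost and core count can be sketched with simple arithmetic. The 2.7GHz clock below is an assumption, chosen because it makes the AHCI figure come out to the 10 cores quoted above; the article does not state the clock speed, and `cores_needed` is a hypothetical helper name.

```python
def cores_needed(cycles_per_io, iops, core_hz):
    """CPU cores consumed by the storage stack at a given IO rate."""
    return cycles_per_io * iops / core_hz


CORE_HZ = 2.7e9          # assumed Sandy Bridge clock, not stated in the article
TARGET_IOPS = 1_000_000  # 1 million IOPS, as in the example

ahci_cores = cores_needed(27_000, TARGET_IOPS, CORE_HZ)
nvme_cores = cores_needed(10_000, TARGET_IOPS, CORE_HZ)

print(f"AHCI: {ahci_cores:.1f} cores")
print(f"NVMe: {nvme_cores:.1f} cores")
print(f"NVMe needs {nvme_cores / ahci_cores:.0%} of the AHCI CPU budget")
```

Under this assumption AHCI works out to about 10 cores and NVMe to about 3.7, close to the "roughly 3.5" quoted above; the small gap suggests the original figures were rounded or measured under slightly different conditions.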

NVMe drives do require updated OS/driver support. Windows 8.1 and Server 2012R2 both include NVMe support out of the box, while older OSes require a miniport driver to enable it. Booting to NVMe drives shouldn't be an issue either.

NVMe is a standard that seems to have industry support behind it. Samsung already launched its own NVMe drives, SandForce announced NVMe support with its SF3700 and today Intel is announcing a family of NVMe SSDs.

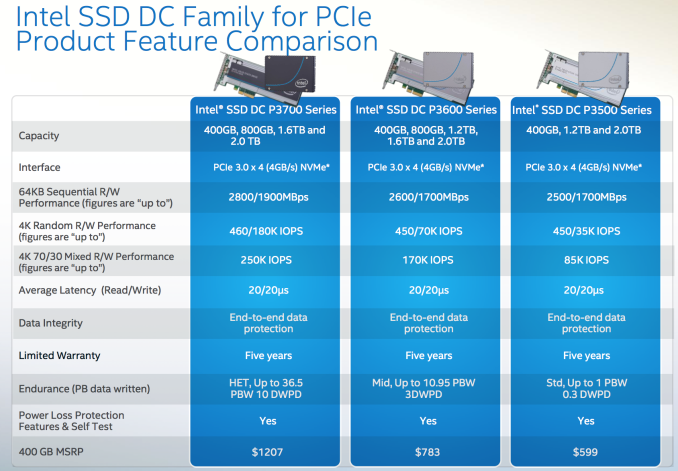

The Intel SSD DC P3700, P3600 and P3500 are all PCIe SSDs that feature a custom Intel NVMe controller. The controller is an evolution of the design used in the S3700/S3500, with improved internal bandwidth via an expanded 18-channel design, reduced internal latencies and NVMe support built in. The controller connects to as much as 2TB of Intel's own 20nm MLC NAND. The three drives target different endurance and performance needs:

The pricing is insanely competitive for brand new technology. The highest endurance P3700 drive is priced at around $3/GB, which is similar to what enthusiasts were paying for their SSDs not too long ago. The P3600 trades some performance and endurance for $1.95/GB, and the P3500 drops pricing down to $1.495/GB. The P3700 ships with Intel's highest endurance NAND and highest overprovisioning percentage (25% spare area vs. 12% on the P3600 and 7% on the P3500). DRAM capacities range from 512MB to 2.5GB of DDR3L on-board. All drives will be available as half-height, half-length PCIe 3.0 x4 add-in cards or 2.5" SFF-8639 drives.

Intel sent us a 1.6TB DC P3700 for review. Based on Intel's 400GB drive pricing from the table above, the drive we're reviewing should retail for $4828.
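As a quick sanity check on that figure, the sketch below works backwards from the $4828 estimate. It assumes decimal gigabytes (1.6TB = 1600GB) and linear scaling from the 400GB price, which is how the estimate above appears to have been derived; the variable names are illustrative.

```python
REVIEW_DRIVE_GB = 1600         # 1.6TB, assuming decimal gigabytes
REVIEW_DRIVE_PRICE_USD = 4828  # estimated retail price quoted above

# Effective price per gigabyte for the review drive.
price_per_gb = REVIEW_DRIVE_PRICE_USD / REVIEW_DRIVE_GB

# If the estimate scales linearly from the 400GB model, this is the
# 400GB price it implies.
implied_400gb_price = price_per_gb * 400

print(f"Price per GB: ${price_per_gb:.4f}")
print(f"Implied 400GB price: ${implied_400gb_price:.0f}")
```

The result lands just above $3/GB, consistent with the "around $3/GB" quoted for the P3700.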

IO Consistency

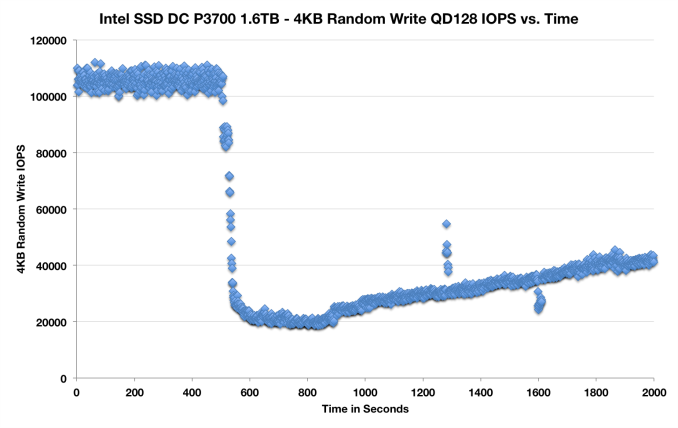

A cornerstone of Intel's DC S3700 architecture was its IO consistency. As the P3700 leverages the same basic controller architecture as the S3700, I'd expect a similar IO consistency story. I ran a slightly modified version of our IO consistency test, but the results should still give us some insight into the P3700's behavior from a consistency standpoint:

IO consistency seems pretty solid, although the IOs are definitely not as tightly grouped as we've seen elsewhere. The P3700 still appears to be reasonably consistent and it does attempt to increase performance over time.

85 Comments

will792 - Tuesday, June 3, 2014

How do you hardware RAID these drives? With SATA/SAS drives I can use LSI/Adaptec controllers and mirror/striping/parity configuration to tune performance, reliability and drive failure recoverability.

iwod - Wednesday, June 4, 2014

While NVMe only uses a third of the CPU power, it is still quite a lot to achieve those IOPS. Although consumer applications would / should hardly see those numbers in use in real life.

We really need PCI-E to get faster and more lanes, the Ultra M.2 promoted by ASRock was great. Direct CPU connect, 4X PCI-E 3.0. Lots and lots of headroom to work with. Compared to the upcoming standard, which would easily get saturated by the time it arrives.

juhatus - Wednesday, June 4, 2014

You should really really explore how you make this bootable win8.1 drive on Z97. Is it possible or not? With M.2 support on Z97 it really shouldn't be a problem?

Mick Turner - Wednesday, June 4, 2014

Was there any hint of a release date?

7Enigma - Wednesday, June 4, 2014

Why is the S3700 200GB drive being used as the comparison to this gigantic 1.6TB monster? Unless there is something I don't understand it has always been the case where the larger the drive (and more channels used) can significantly increase the performance compared to a smaller drive (with fewer channels). The S3700 had an 800GB drive. That one IMO would be more representative of the improvements of the P3700.

shodanshok - Wednesday, June 4, 2014

Hi Anand,

I have some questions regarding the I/O efficiency graphs in the "CPU utilization" page.

What performance counter did you watch when comparing CPU storage load?

I'm asking you because if you use the classical "I/O wait time" (common on Unix and Windows platforms), you are basically measuring the time the CPU is waiting for storage, *not* its load.

The point is that while the CPU is waiting for storage, it can schedule another readily-available thread. In other words, while it waits for storage, the CPU is free to do other work. If this is the case, it means that you are measuring I/O performance, *not* I/O efficiency (IOPS per CPU load).

On the other hand, if you are measuring system time and IRQ time, the CPU load graphs are correct.

Regards.

Ramon Zarat - Wednesday, June 4, 2014

NET NEUTRALITY

Please, share this video: https://www.youtube.com/watch?v=fpbOEoRrHyU

I wrote an e-mail to the FCC, called them and left a message and went on their website to fill my comment. Took me 5 insignificant minutes. Do it too! Don't let those motherfuckers run over you! SHARE THIS VIDEO!!!!

Submit your comments here http://apps.fcc.gov/ecfs/upload/begin?procName=14-... It's proceeding # 14-28

#FUCKTHEFCC #netneutrality

underseaglider - Wednesday, June 4, 2014

Technological advancements improve the reliability and performance of the tools and processes we all use in our daily routines. Whether for professional or personal needs, technology allows us to perform our tasks more efficiently in most cases.

aperson2437 - Thursday, June 5, 2014

Sounds like once these SSDs get cheap it is going to eliminate the aggravation of waiting for computers to do certain things like loading big programs and games forever. I can't wait to get my hands on one. I'm super impatient when it comes to computers. Hopefully, there will be some intense competition for these NVMe SSDs from Samsung and others and prices come down fast.

Shiitaki - Thursday, June 5, 2014

No, it was not out of necessity. SSD's have used Sata because they lacked vision/ lazy, or whatever other excuse. PCI express has been around for years, as so has AHCI. There is no reason there isn't a single strap on a PCI express card to change between operating modes, like AHCI for older machines, and whatever this new thing is. All an SSD is largely a risk computer that overwhelmingly provides it's functionality using software. Msata should have never existed, if you have to have a controller anyway, why not a PCI-express? After all, SATA controllers connect to PCI-express?

SSD's could have been PCI express in 2008. Those early drives however were terrible, and didn't need the bandwidth or latency, so there was no reason. They were too busy trying to get NAND flash working to bother worrying about other concerns.

Even now, most flash drives being sold are not capable of saturating Sata3 even on sequential reads. I'm going to jab Kingston again here about their dishonest V300, but Micron's M500 isn't pushing any limits either. Intel SSD's should be fast, this isn't news, they have been horribly overpriced. What is news is that the price is now justified.

Why isn't the new spec internal thunderbolt? Oh yeah, has gots to make money on licensing! Why make money producing products when it is so much easier to cash royalty checks? The last thing the pc industry needs is another standard to do something that can already be done 2 other ways, but then we need a jobs program making adapters. Those two ways are PCI-Express, and thunderbolt.

At some point the hard drive should be replaced by a PCI-express full length card that accepts NAND cards, and the user simply buys and keeps adding cards as space is required. This can already be done with current technology, no reinventing the wheel required.