Intel SSD DC P3700 Review: The PCIe SSD Transition Begins with NVMe

by Anand Lal Shimpi on June 3, 2014 2:00 AM EST

Posted in: Storage, SSDs, Intel, Intel SSD DC P3700, NVMe

In 2008 Intel introduced its first SSD, the X25-M, and with it Intel ushered in a new era of primary storage based on non-volatile memory. Intel may have been there at the beginning, but it missed out on most of the evolution that followed. It wasn't until late 2012, four years later, that Intel showed up with another major controller innovation. The Intel SSD DC S3700 added a focus on IO consistency, which had a remarkable impact on both enterprise and consumer workloads. Once again Intel found itself at the forefront of innovation in the SSD space, only to let others catch up in the coming years. Now, roughly two years later, Intel is back again with another significant evolution of its solid state storage architecture.

Nearly all prior Intel drives, as well as drives of its most qualified competitors, have played within the confines of the SATA interface. SATA was designed for and limited by the hard drives that came before it. SSDs used SATA to sneak in and take over the high performance market, but they did so out of necessity, not preference. The SATA interface and the hard drive form factors that went along with it were the sheep's clothing to the SSD's wolf. It became clear early on that a higher bandwidth interface was necessary to really give SSDs room to grow.

We saw a quick transition from 3Gbps to 6Gbps SATA for SSDs, but rather than move to 12Gbps SATA only to saturate it a year later, most SSD makers set their sights on PCIe. With PCIe 3.0 x16 already capable of delivering 128Gbps of bandwidth, it's clear this was the appropriate IO interface for SSDs. Many SSD vendors saw the writing on the wall early, but their PCIe based SSD solutions typically leveraged a bunch of SATA SSD controllers behind a PCIe RAID controller. Only a select few PCIe SSD makers developed their own native controllers. Micron was among the first to really push a native PCIe solution with its P320h and P420m drives.
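To put the interface gap in rough numbers, here is a minimal sketch that compares usable bandwidth after line encoding. The helper name `usable_gbps` is made up for illustration. The encoding schemes (8b/10b for SATA, 128b/130b for PCIe 3.0) and the 8GT/s per-lane PCIe 3.0 rate come from the respective interface specs rather than from this article, and protocol overhead above the line encoding is ignored.

```python
def usable_gbps(line_rate_gbps: float, payload_bits: int, total_bits: int, lanes: int = 1) -> float:
    """Usable bandwidth in Gbps after line encoding (hypothetical helper)."""
    return line_rate_gbps * lanes * payload_bits / total_bits


# SATA uses 8b/10b encoding: 10 bits on the wire for every 8 bits of payload.
sata_3g = usable_gbps(3, 8, 10)
sata_6g = usable_gbps(6, 8, 10)

# PCIe 3.0 runs at 8 GT/s per lane with 128b/130b encoding.
pcie3_x4 = usable_gbps(8, 128, 130, lanes=4)
pcie3_x16 = usable_gbps(8, 128, 130, lanes=16)

for name, gbps in [
    ("SATA 3Gbps", sata_3g),
    ("SATA 6Gbps", sata_6g),
    ("PCIe 3.0 x4", pcie3_x4),
    ("PCIe 3.0 x16", pcie3_x16),
]:
    # Divide by 8 to convert gigabits to gigabytes.
    print(f"{name:13s} {gbps:7.2f} Gbps  ~{gbps / 8:5.2f} GB/s")
```

Under these assumptions a four-lane PCIe 3.0 link offers several times the usable bandwidth of 6Gbps SATA, and the x16 raw rate of 16 x 8GT/s matches the 128Gbps figure quoted above.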

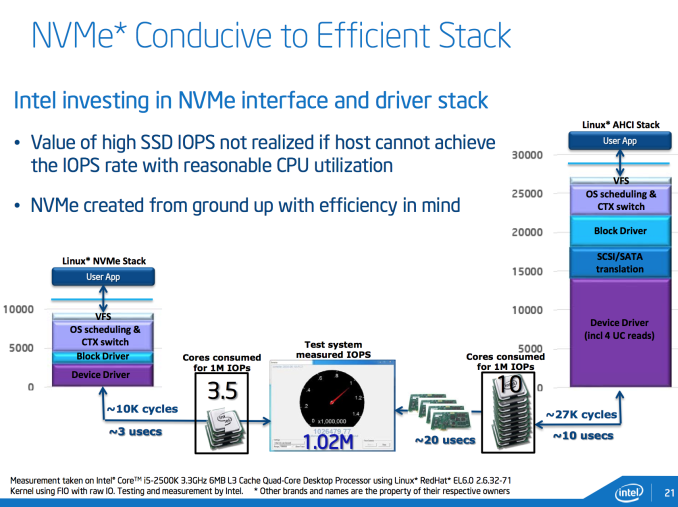

Bandwidth limitations were only one reason to want to ditch SATA. The other bit of legacy that needed shedding was AHCI, the interface protocol for communication between host machines and their SATA HBAs (Host Bus Adaptors). AHCI was designed for a world where low latency NAND based SSDs didn't exist. It ends up being a fine protocol for communicating with high latency mechanical disks, but one that consumes an inordinate amount of CPU cycles for high performance SSDs.

In the example above, the Linux AHCI stack alone requires around 27,000 cycles, which means you need 10 Sandy Bridge CPU cores to drive 1 million IOPS. The solution is a new lightweight, low latency interface - one designed around SSDs and not hard drives. The result is NVMe (Non-Volatile Memory Express, otherwise known as the NVM Host Controller Interface Specification - NVMHCI). In the same example, total NVMe overhead is reduced to 10,000 cycles, or roughly 3.5 Sandy Bridge cores needed to drive 1 million IOPS.
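The cores-per-million-IOPS figures follow from simple arithmetic, sketched below. The cycle counts and the 1 million IOPS target are from the text; the core clock is not stated, so the sketch backs out the clock implied by "27,000 cycles needs 10 cores" (2.7GHz) and treats it as an assumption. The function name `cores_needed` is made up for illustration.

```python
def cores_needed(cycles_per_io: float, iops: float, core_hz: float) -> float:
    """CPU cores kept fully busy by the storage stack at a given IOPS rate."""
    return cycles_per_io * iops / core_hz


TARGET_IOPS = 1_000_000
AHCI_CYCLES = 27_000  # per IO, from the article
NVME_CYCLES = 10_000  # per IO, from the article

# Assumption: the clock speed implied by "27,000 cycles -> 10 cores at 1M IOPS".
IMPLIED_CORE_HZ = AHCI_CYCLES * TARGET_IOPS / 10  # 2.7e9, i.e. 2.7 GHz

ahci_cores = cores_needed(AHCI_CYCLES, TARGET_IOPS, IMPLIED_CORE_HZ)
nvme_cores = cores_needed(NVME_CYCLES, TARGET_IOPS, IMPLIED_CORE_HZ)

print(f"AHCI: {ahci_cores:.1f} cores, NVMe: {nvme_cores:.1f} cores")
print(f"NVMe needs {NVME_CYCLES / AHCI_CYCLES:.0%} of the AHCI cycle budget")
```

At the implied 2.7GHz the NVMe case works out to about 3.7 cores, in the same ballpark as the rough 3.5 quoted above; a slightly higher clock would close the gap. Either way the software overhead drops to a bit over a third of the AHCI cost.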

NVMe drives do require updated OS/driver support. Windows 8.1 and Server 2012R2 both include NVMe support out of the box, while older OSes require a miniport driver to enable it. Booting to NVMe drives shouldn't be an issue either.

NVMe is a standard that seems to have industry support behind it. Samsung already launched its own NVMe drives, SandForce announced NVMe support with its SF3700 and today Intel is announcing a family of NVMe SSDs.

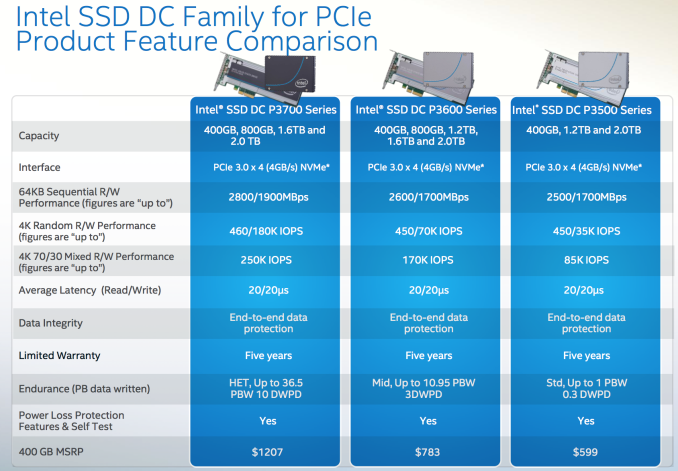

The Intel SSD DC P3700, P3600 and P3500 are all PCIe SSDs that feature a custom Intel NVMe controller. The controller is an evolution of the design used in the S3700/S3500, with improved internal bandwidth via an expanded 18-channel design, reduced internal latencies and NVMe support built in. The controller connects to as much as 2TB of Intel's own 20nm MLC NAND. The three drives target different endurance and performance needs:

The pricing is insanely competitive for brand new technology. The highest endurance P3700 drive is priced at around $3/GB, which is similar to what enthusiasts were paying for their SSDs not too long ago. The P3600 trades some performance and endurance for $1.95/GB, and the P3500 drops pricing down to $1.495/GB. The P3700 ships with Intel's highest endurance NAND and highest overprovisioning percentage (25% spare area vs. 12% on the P3600 and 7% on the P3500). DRAM capacities range from 512MB to 2.5GB of DDR3L on-board. All drives will be available as half-height, half-length PCIe 3.0 x4 add-in cards or 2.5" SFF-8639 drives.

Intel sent us a 1.6TB DC P3700 for review. Based on Intel's 400GB drive pricing from the table above, the drive we're reviewing should retail for $4828.
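As a quick sanity check on those numbers, the sketch below scales the approximate per-GB prices quoted above to a 1.6TB drive. It assumes pricing scales linearly with capacity and treats 1.6TB as 1600GB; the names `PRICE_PER_GB` and `estimated_price` are made up for illustration, and the P3600 and P3500 results are projections, not quoted prices.

```python
# Approximate $/GB figures quoted in the article.
PRICE_PER_GB = {"P3700": 3.00, "P3600": 1.95, "P3500": 1.495}


def estimated_price(model: str, capacity_gb: int) -> float:
    """Estimated price in dollars, assuming linear scaling with capacity."""
    return PRICE_PER_GB[model] * capacity_gb


CAPACITY_GB = 1600  # the 1.6TB review sample, taken as 1600GB

for model in PRICE_PER_GB:
    print(f"{model}: ~${estimated_price(model, CAPACITY_GB):,.0f}")

# Working backwards from the $4828 estimate gives the implied $/GB.
implied_per_gb = 4828 / CAPACITY_GB
print(f"Implied P3700 price: ${implied_per_gb:.4f}/GB")
```

The round $3/GB figure gives $4800 for the P3700, while the $4828 estimate implies just over $3.01/GB, consistent with "around $3/GB".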

IO Consistency

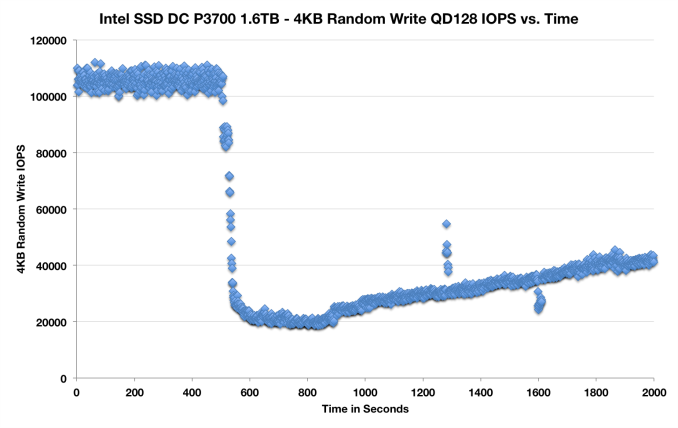

A cornerstone of Intel's DC S3700 architecture was its IO consistency. As the P3700 leverages the same basic controller architecture as the S3700, I'd expect a similar IO consistency story. I ran a slightly modified version of our IO consistency test, but the results should still give us some insight into the P3700's behavior from a consistency standpoint:

IO consistency seems pretty solid, although the IOs are definitely not as tightly grouped as we've seen elsewhere. The P3700 still appears to be reasonably consistent and it does attempt to increase performance over time.

85 Comments


jamescox - Monday, June 9, 2014

"Why isn't the new spec internal thunderbolt?"

Thunderbolt is PCI-e x4 multiplexed with display port. Intel's new SSD is PCI-e x4. I don't think we have a reason to route display port to an SSD, so thunderbolt makes no sense. Was this sarcasm that I missed?

"At some point the hard drive should be replaced by a PCI-express full length card that accepts NAND cards, and the user simply buys and keeps adding cards as space is required. This can already be done with current technology, no reinventing the wheel required."

What protocol are the NAND cards going to use to talk to the controller? There are many engineering limitations and complexities here. Are you going to have an 18 channel controller like this new Intel card? If you populate channels one at a time, then it isn't going to perform well until you populate many of the channels. This is just like system memory; quad channel systems require 4 modules from the start to get full bandwidth. It gets very complicated unless each "NAND card" is a full pci-e card by itself. Each one being a separate pci-e card is no different from just adding more pci-e cards to your motherboard. Due to this move to pci-e, motherboard makers will probably be putting in more x4 and/or x8 pci-e slots with different spacing from what is required by video cards. This will allow users to just add a few more cards to get more storage. It may be useful for small form factor systems to make a pci-e card with several m.2 slots since several different types of things (or sizes of SSDs) can be plugged into it. This isn't going to perform as well as having the whole card dedicated to being a single SSD though. I don't think you can fit 18 channels on an m.2 card at the moment.

Anyway, most consumer applications will not really benefit from this. I don't think you would see too much difference in "everyday usability" using a pci-e card vs. a fast sata 6 drive. Most consumer applications are not going to even stress this card. I suspect that sata 6 SSDs will be around for a while. The SATA Express connector seems like a kludge though. If you actually need more performance than sata 6 (for what?), just get the pci-e card version.

jeffbui - Thursday, June 5, 2014

"I long for the day when we don't just see these SSD releases limited to the enterprise and corporate client segments, but spread across all markets"

Too bad Intel is all about profit margin. Having to compete on price (at low profit margins) gives them no incentive to go into the consumer space.

sethk - Saturday, June 7, 2014

Hi Anand,

Long time fan and love your storage and enterprise articles, including this one. One question - what's the driver situation on NVMe as far as dropping one of these into existing platforms (consumer and enterprise) and being able to boot?

Another question is regarding cabling for the non-direct PCIe interfaces like SataExpress and SFF-8639? It would be great if you could have some coverage of these topics and timing for consumer availability when you do your inevitable articles on the P3600 and P3500 which seem like great deals given the performance.

T2k - Monday, June 16, 2014

How come you did not include ANY Fusion-IO card? In enterprise space they are practically cheaper than the P3700 and have far bigger sizes, for less money, consistently low latency, not to mention advanced software to match it... was it a request from Intel to leave them out?

SeanJ76 - Wednesday, May 20, 2015

Intel has always been the best in SSD performance, and longevity. I own 3 of the 520 series(240GB) and have never had a complaint.